Overview

This is a list of everything I know about machine learning and camera traps, which is presumably just a subset of what’s out there… email me with updates, or submit pull requests. Help me keep this page up to date! And tell me what I got wrong about your software and your papers!

Maintained by Dan Morris. Disclosure of what I work on: I contribute to several projects on ML for camera traps (particularly, MegaDetector, SpeciesNet, and Wildlife Insights) and an open repository for conservation data (lila.science). And since I’ve disclosed that, I can say that although I don’t filter papers for this list based on whether they use stuff I’ve worked on, I do use this list as a way of tracking how those systems are being used, so in the “papers” sections, you will see tags for a few things I want to track.

Table of Contents

Camera trap systems using ML

Systems in active development

Systems that appear to be less active

Systems that appear not to exist any more

OSS repos about ML for camera traps

Active repos

Less active repos

Publicly-available ML models for camera traps

Smart camera traps

Manual labeling tools people use for camera traps

Review papers about labeling tools

Tools in active development

Tools that appear to be less active

Non-camera-trap-specific labeling tools that people use for camera trap data

Post-hoc analysis tools people use for camera trap data

Camera trap ML papers

Papers with summaries

Papers I haven’t read yet

Papers that use LILA data but mostly aren’t about camera traps

Data sources for camera trap ML

Metadata standards for camera trap data

Further reading

Places to chat about this stuff

Camera trap systems using ML

I’ve broken this category out into “systems that look like they’re being actively developed” and “systems that are less active”. This assessment is based on visiting links and searching the Web; if I’ve incorrectly put something in the latter category, please let me know!

Systems that appear to be in active development

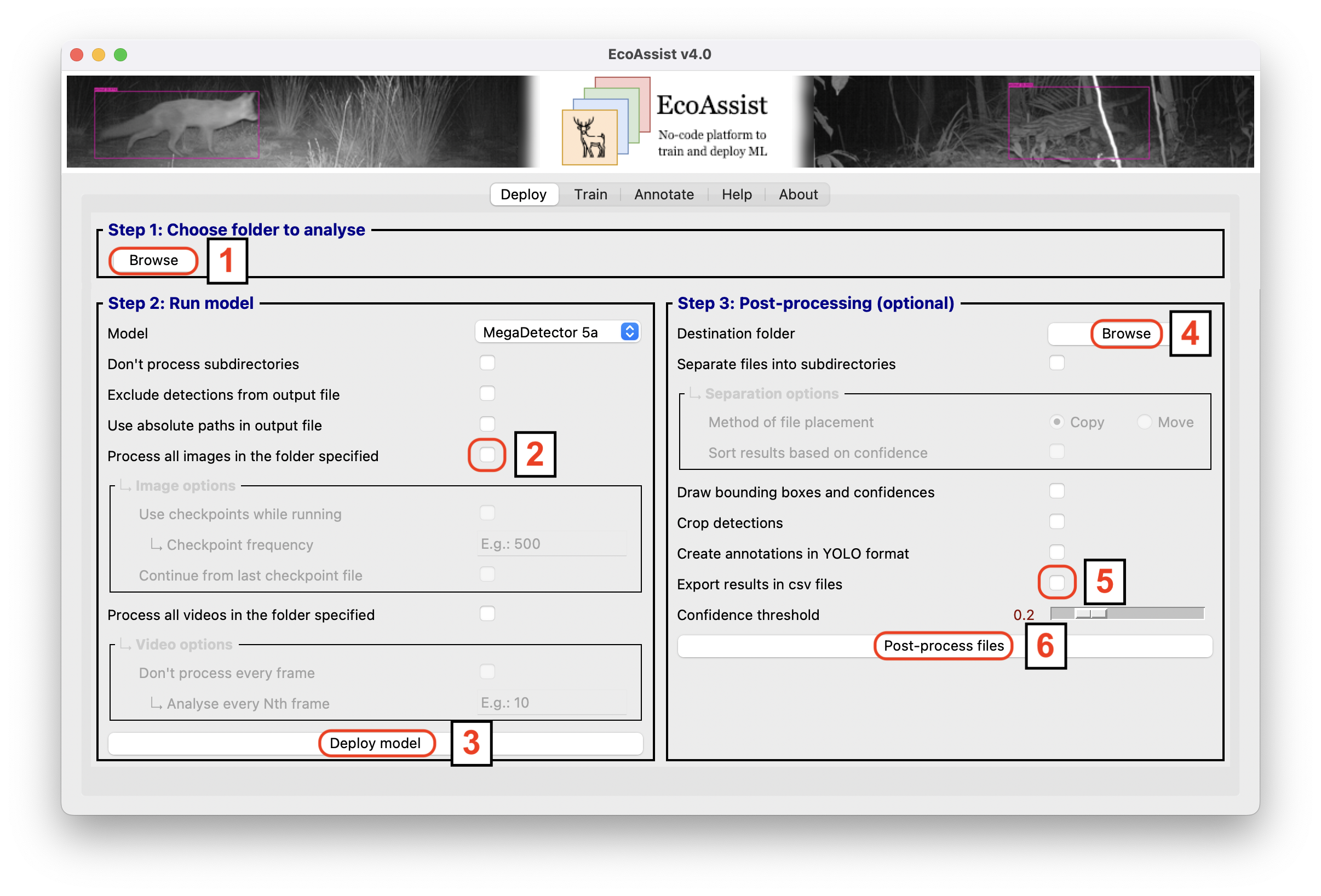

AddaxAI

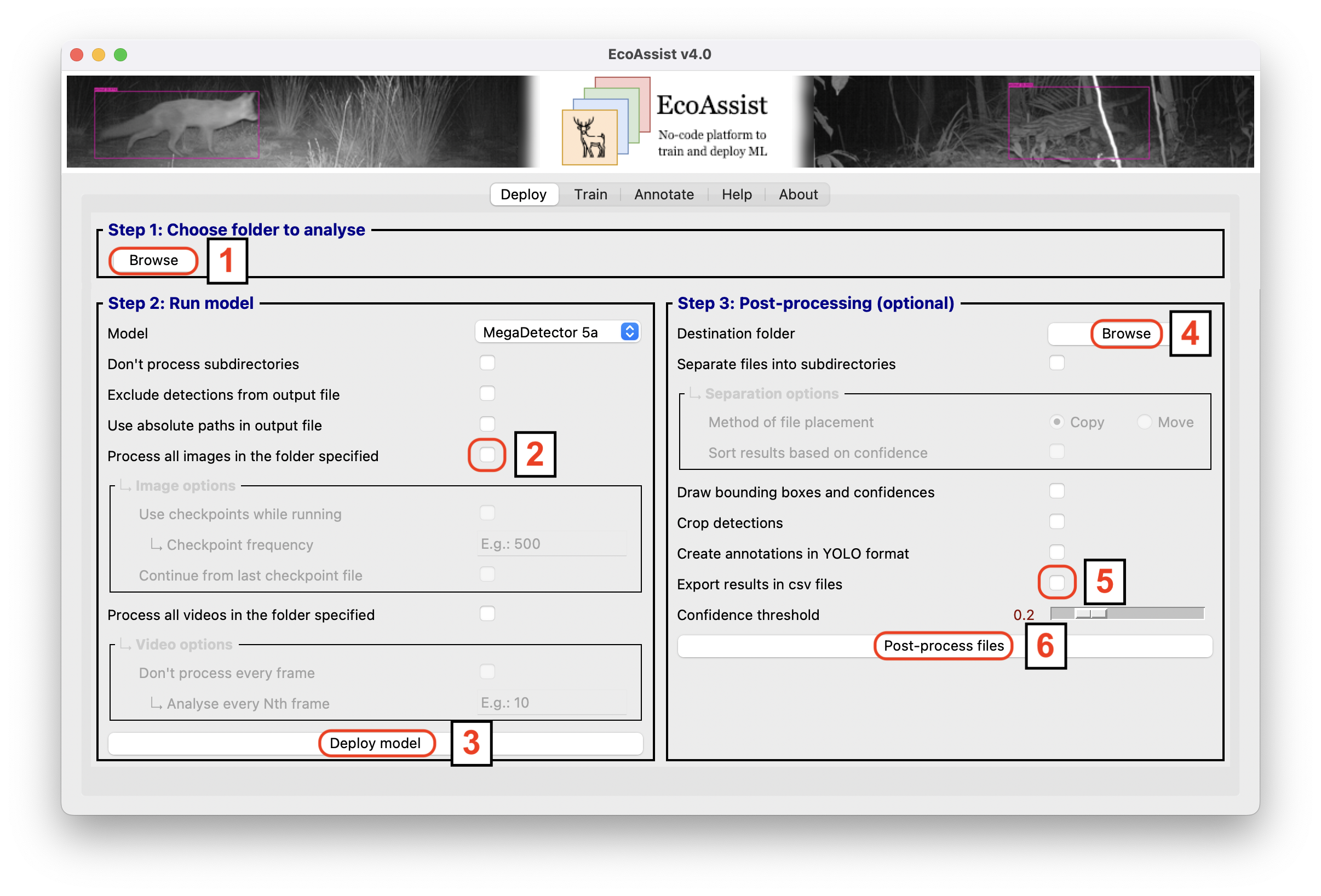

Open-source client-side tool for running MegaDetector and a number of species classifiers, including various postprocessing steps.

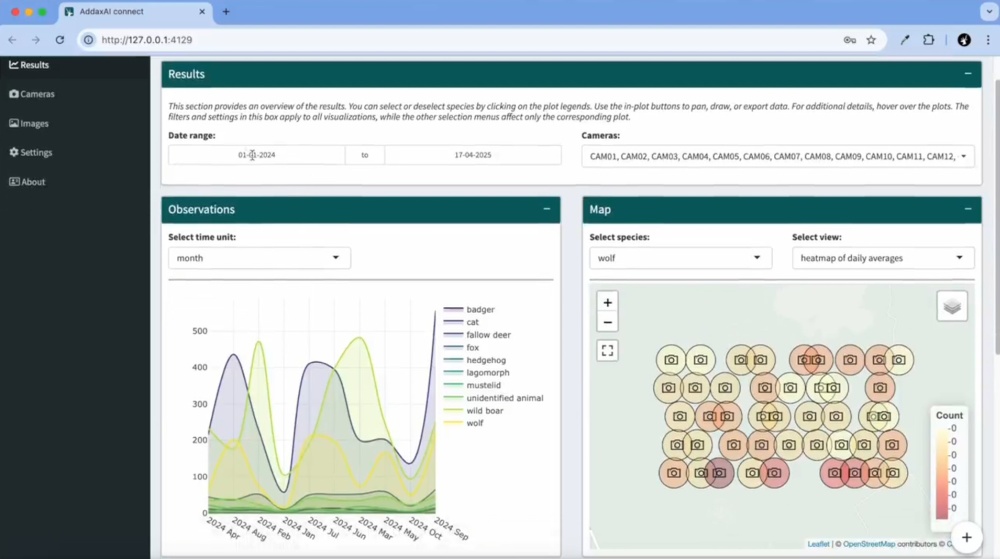

AddaxAI-connect

Cloud-based platform that receives images from connected cameras, runs AI models, and sends notifications based on specific conditions (e.g. specific species).

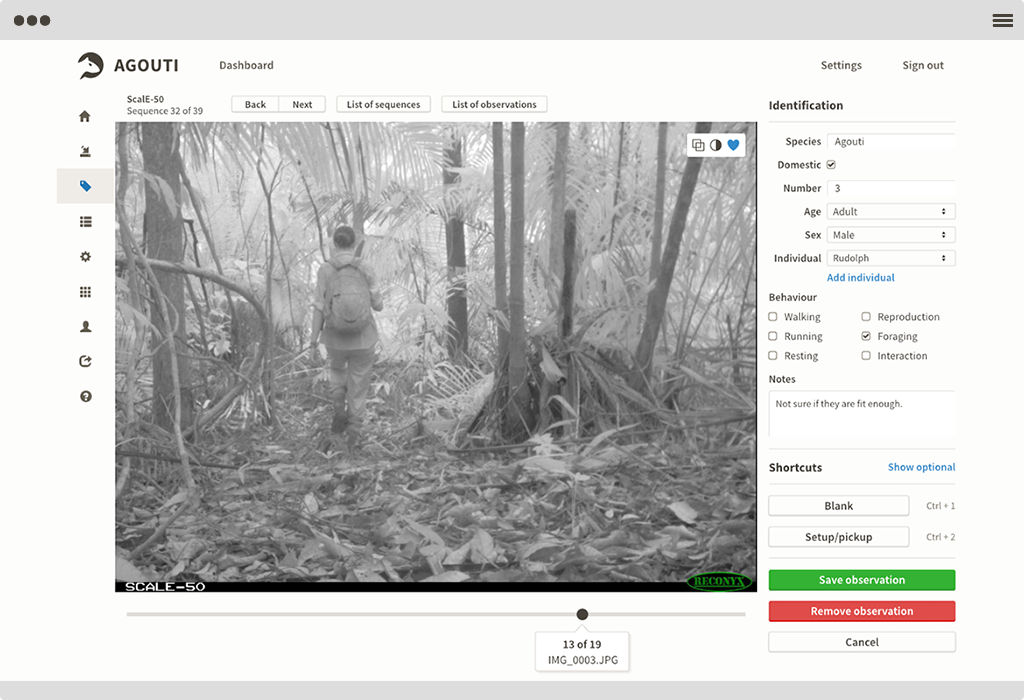

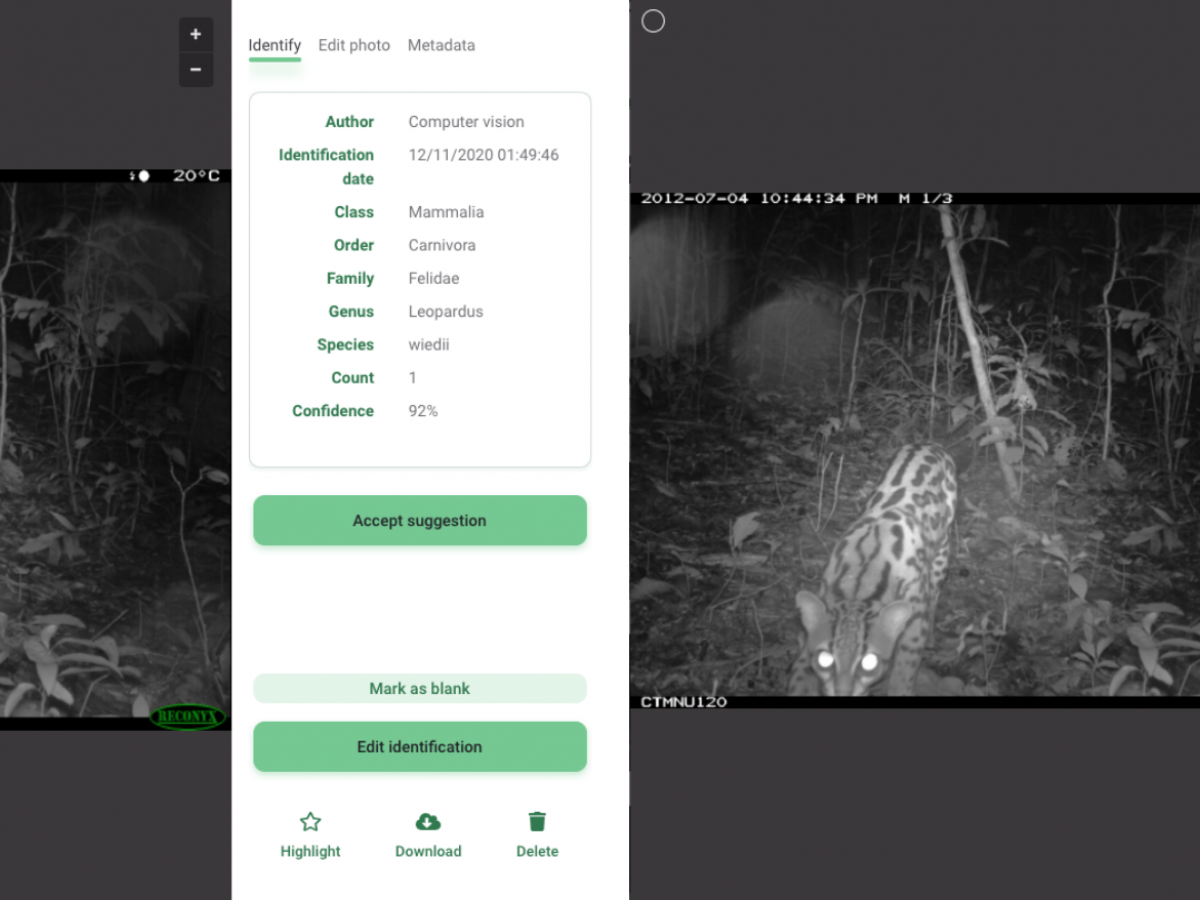

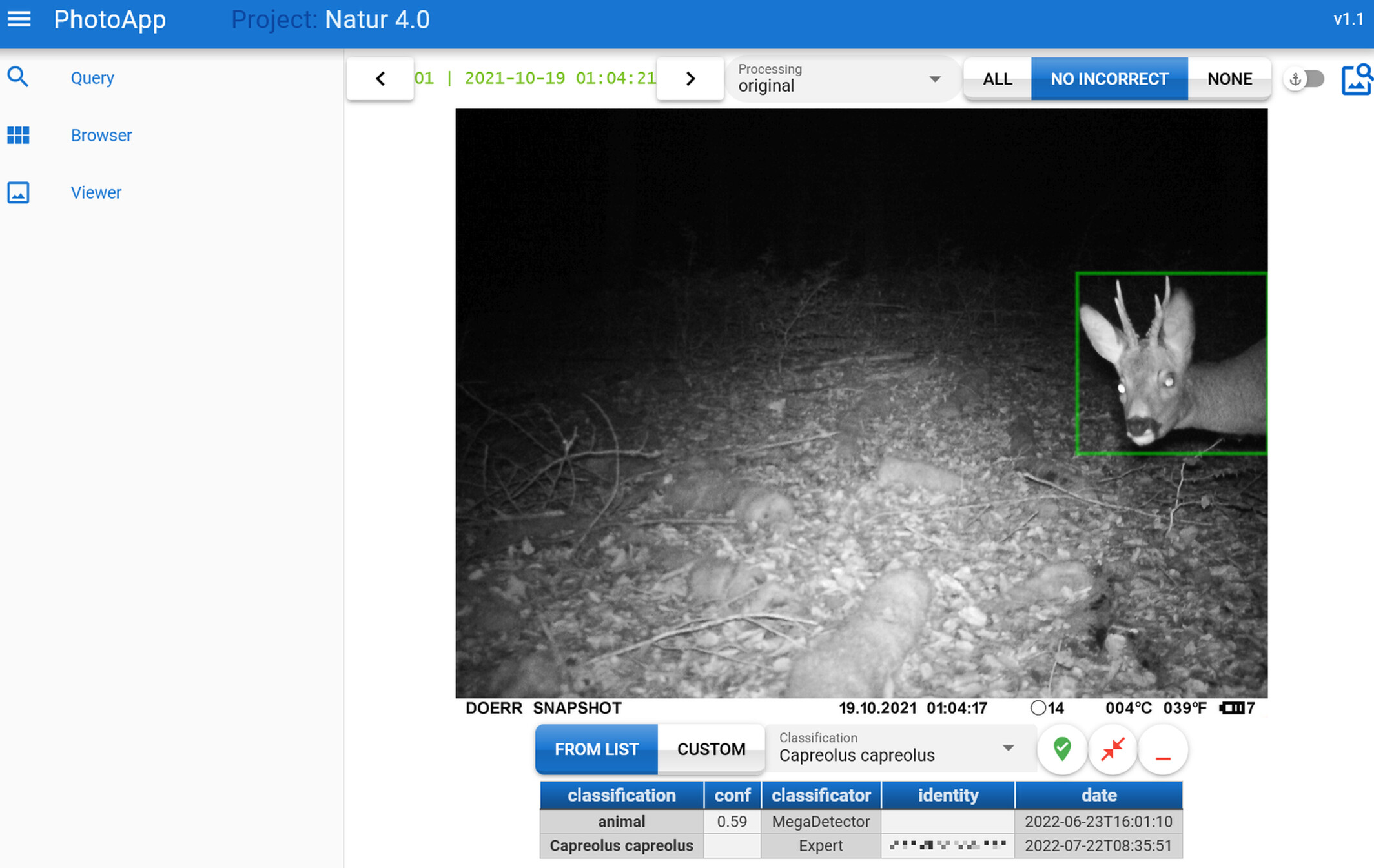

Agouti

Web-based tool for camera trap data management, annotation, and spatial analysis.

The documentation lists the available AI models, including MDv5a, DeepFaune’s classifier for European species, and Agouti-specific models for Western Europe, Europe, French Guiana, India, Nepal, Panama, and Southern Africa. Early adopter of Camtrap DP export, especially to facilitate data release on GBIF.

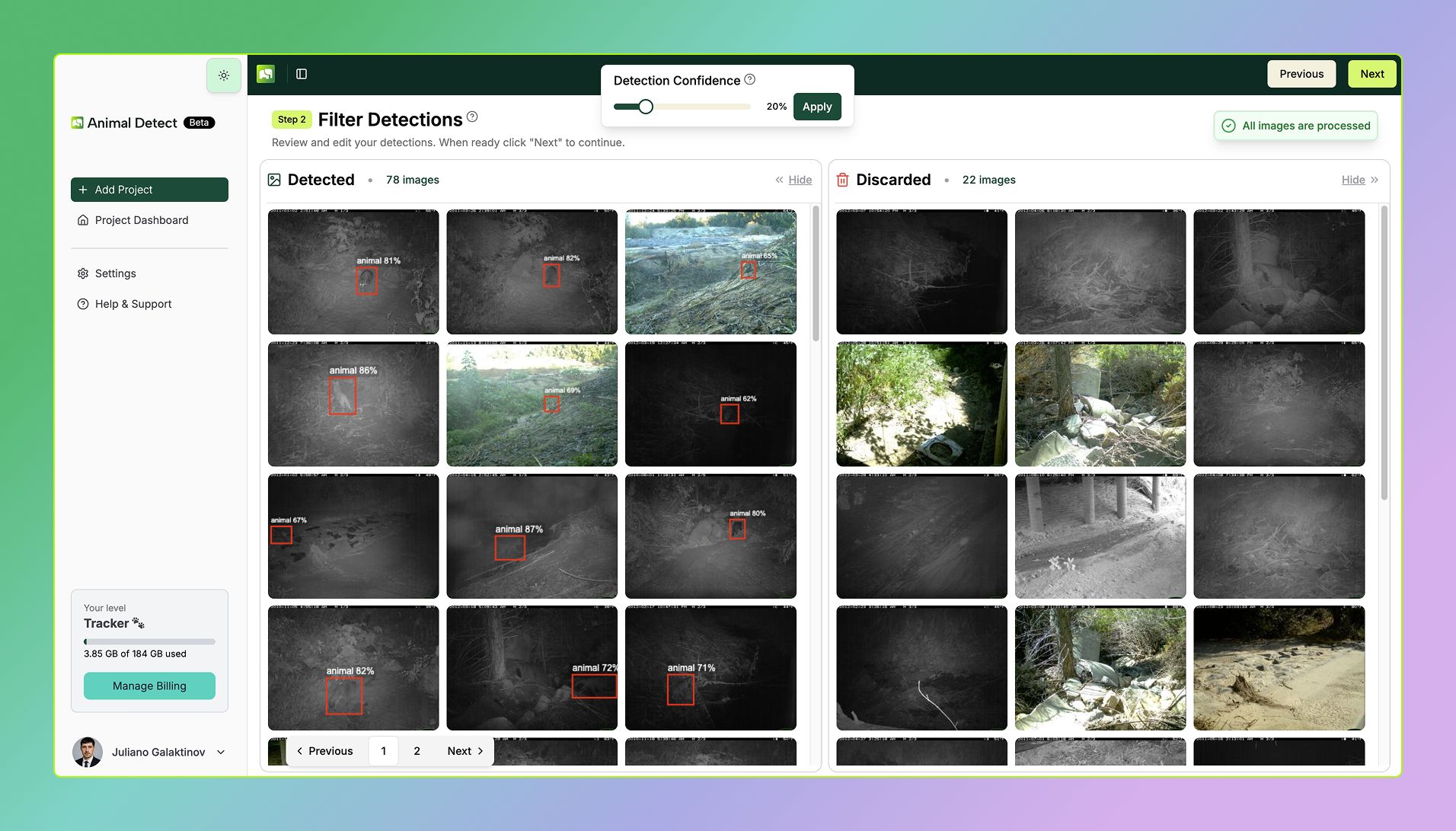

Animal Detect

Online platform for processing camera trap images. Uses MD for cropping and blank elimination, incorporates species classification, and allows similarity-based grouping in an embedding space.

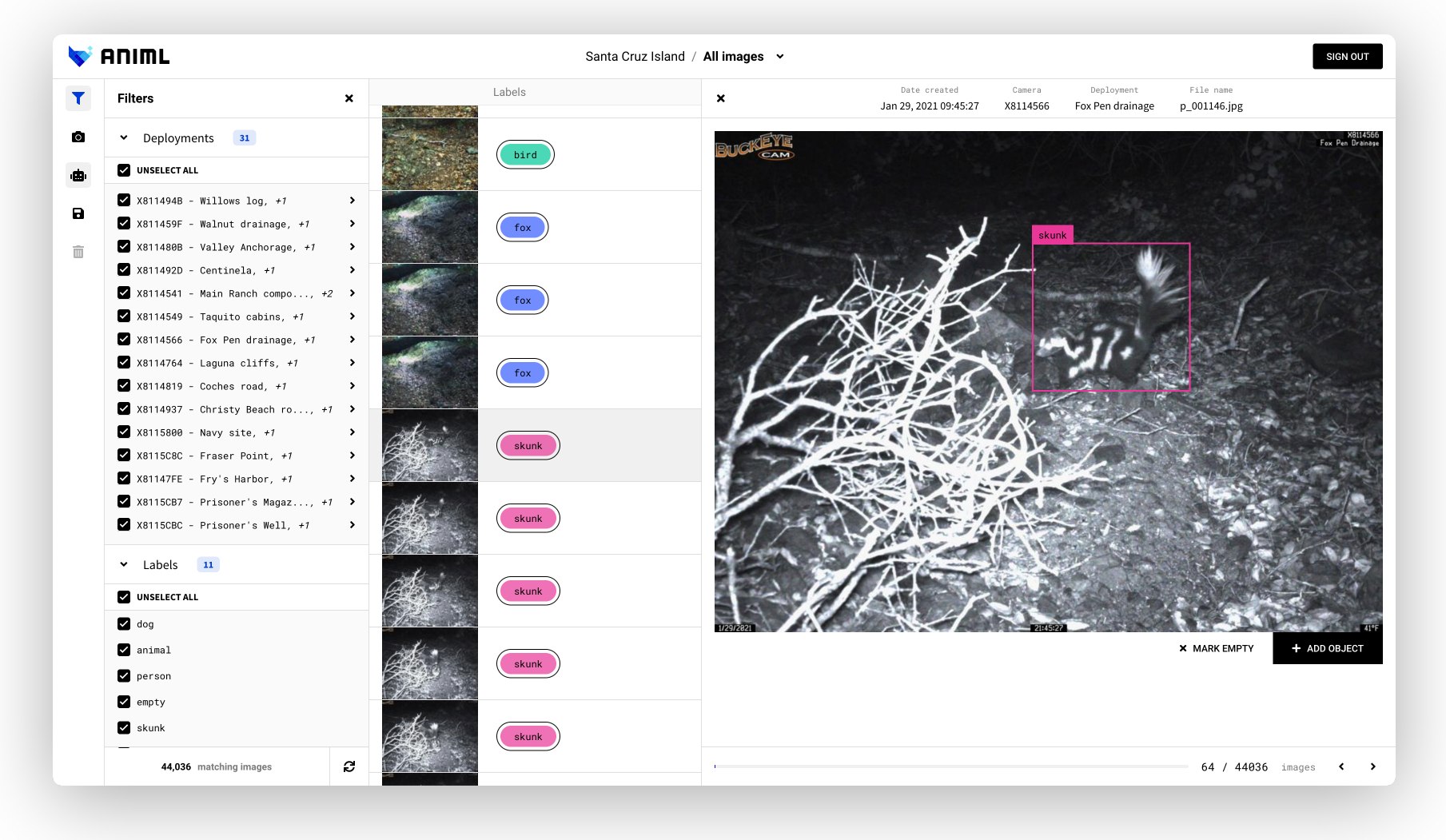

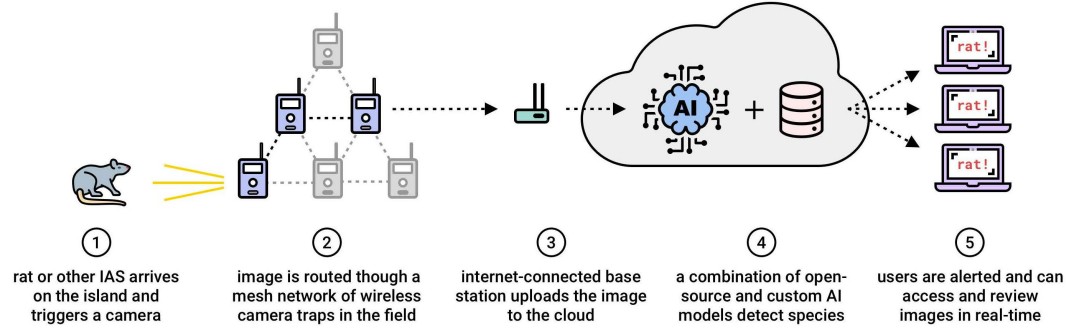

Animl

OSS platform developed by TNC for managing data from biosecurity cameras, with real-time detection and classifications. Code is here.

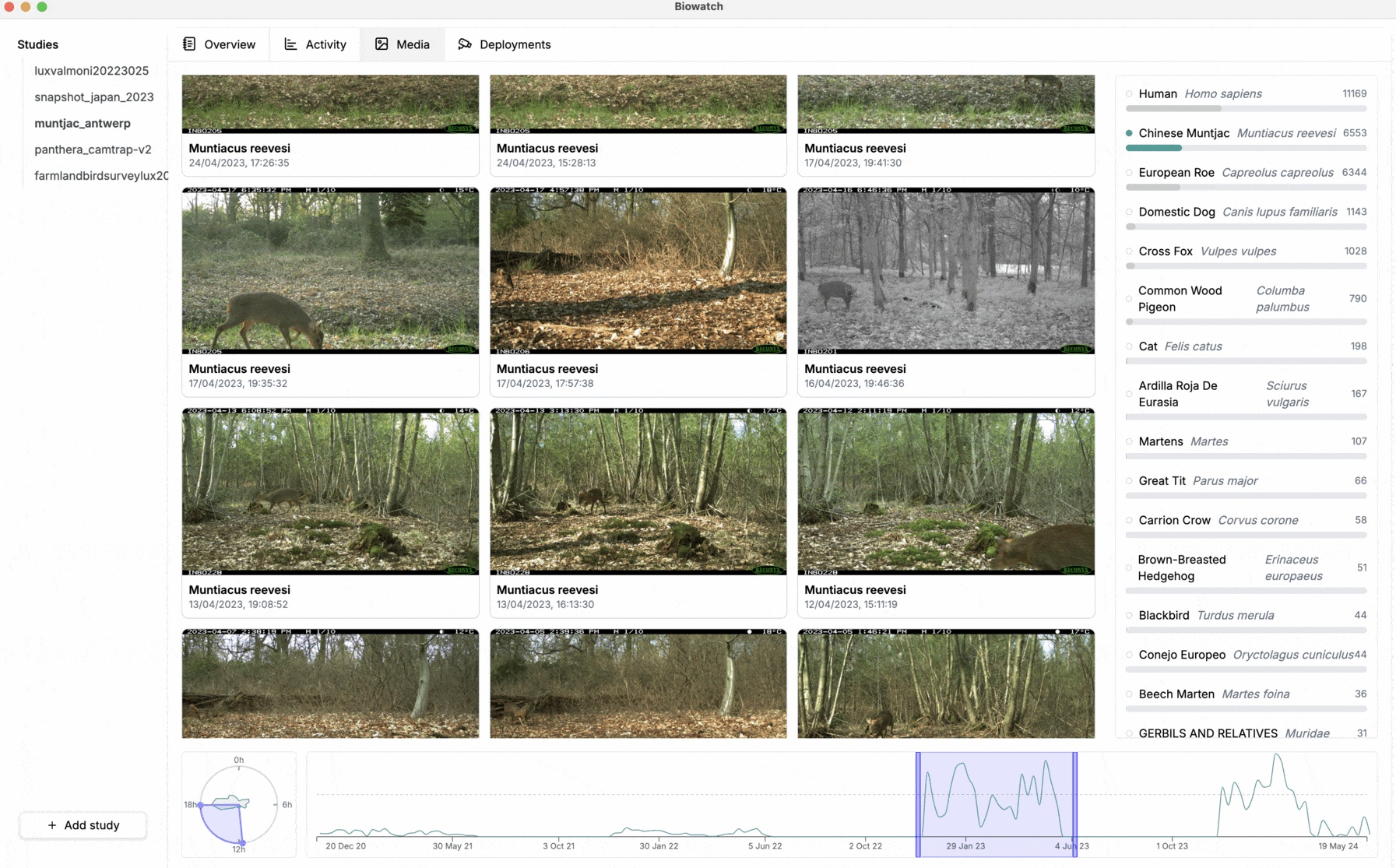

Biowatch

OSS, cross-platform client-side tool for running AI models, spatial visualizations, and image review.

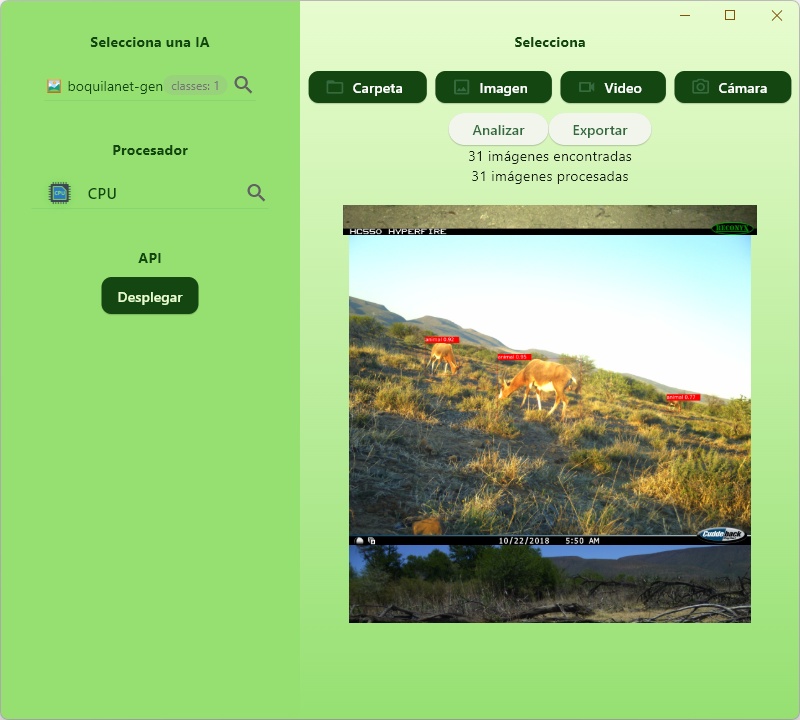

BoquilaHUB

Client-side tool that runs a variety of models (MD, SpeciesNet, custom animal detectors). Code is here.

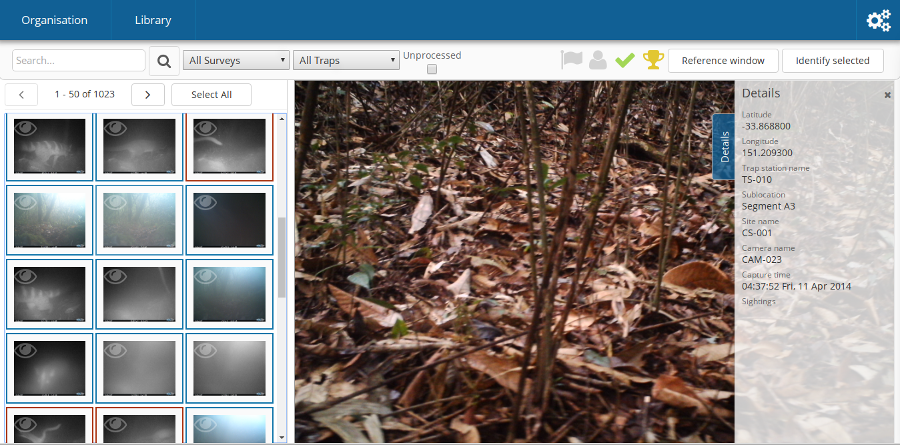

Camelot

Open-source; runs on Java, with a browser-based interface. Developed in consultation with Fauna & Flora International. Preliminary integration with MegaDetector allows selective review of human/animal/empty/vehicle images.

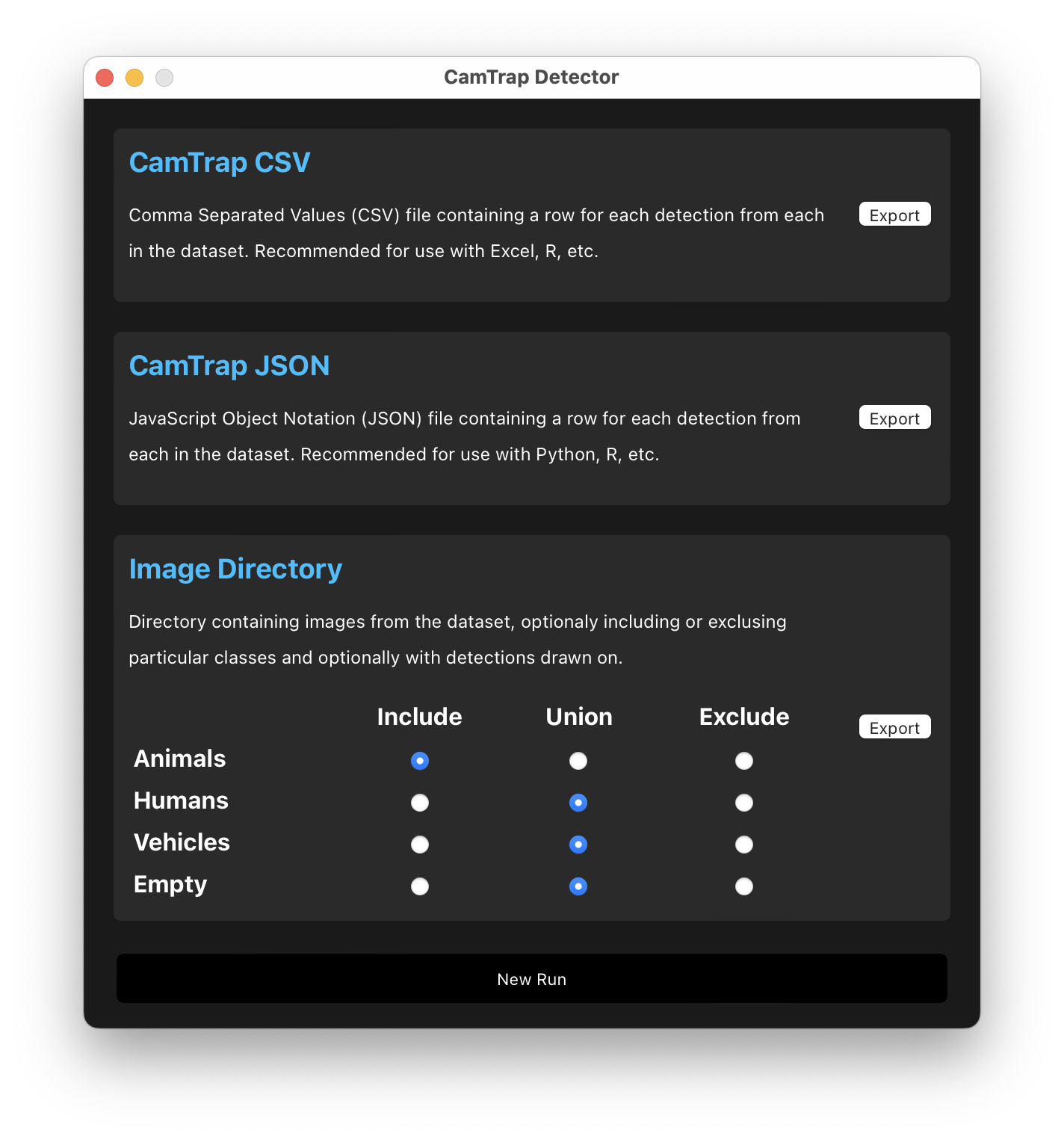

CamTrap Detector

Client-side tool for running MegaDetector, including various postprocessing steps.

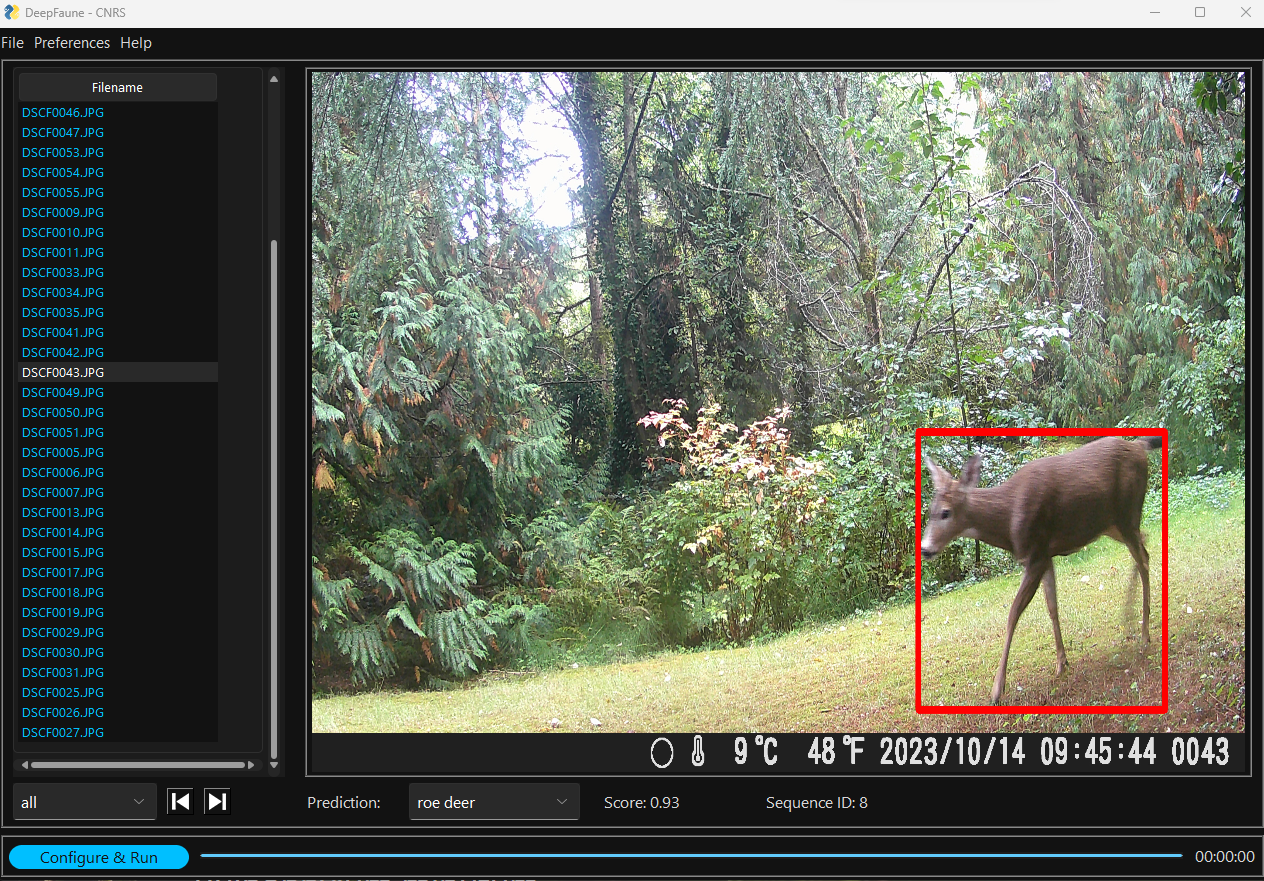

DeepFaune

Open-source thick-client tool with a custom multiclass detector (based on YOLOv8s) for European animals, operating in tandem with MDv1000-sorrel and/or MDv1000-redwood.

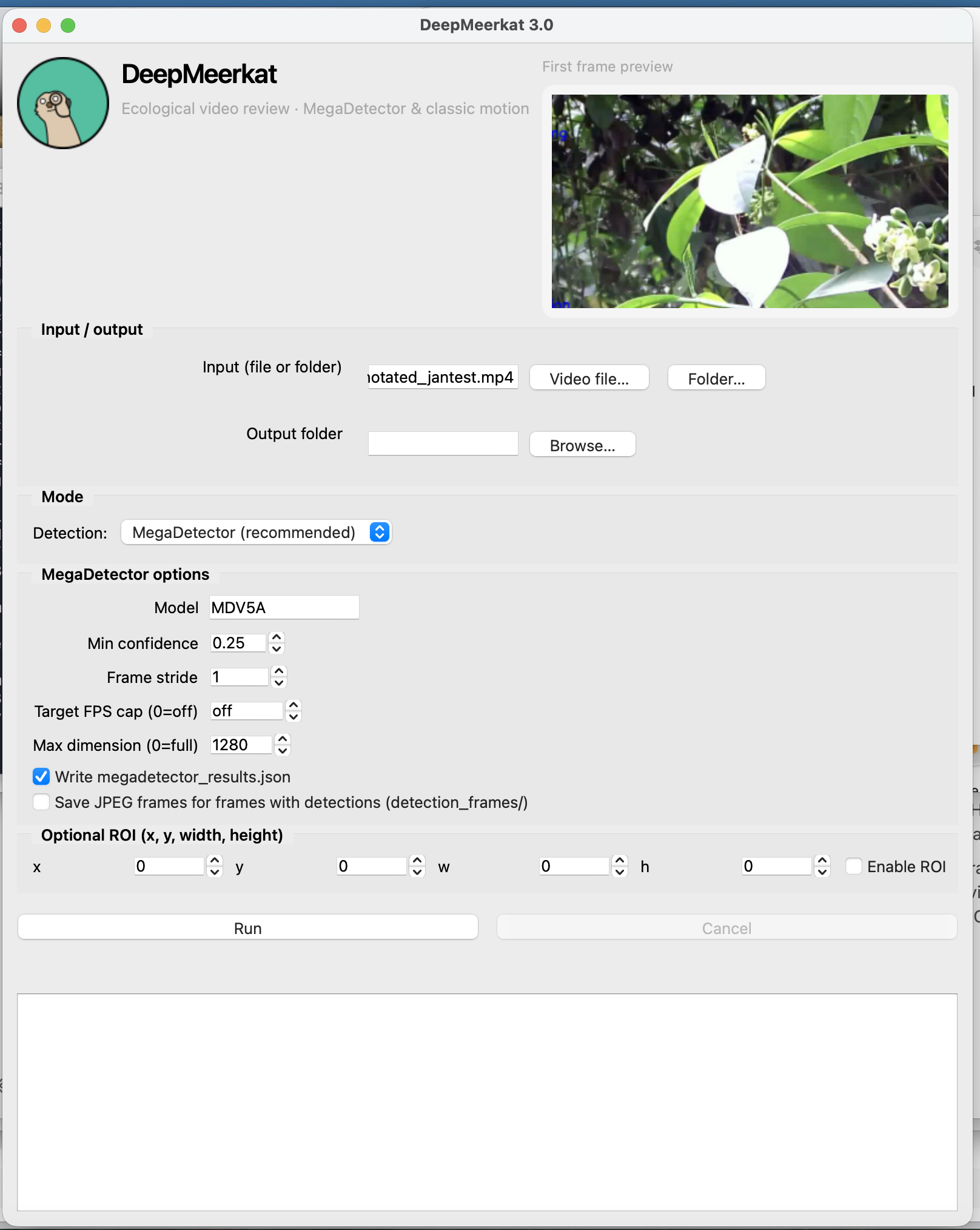

DeepMeerkat

Open-source client-side tool for processing ecological videos (not necessarily from camera traps).

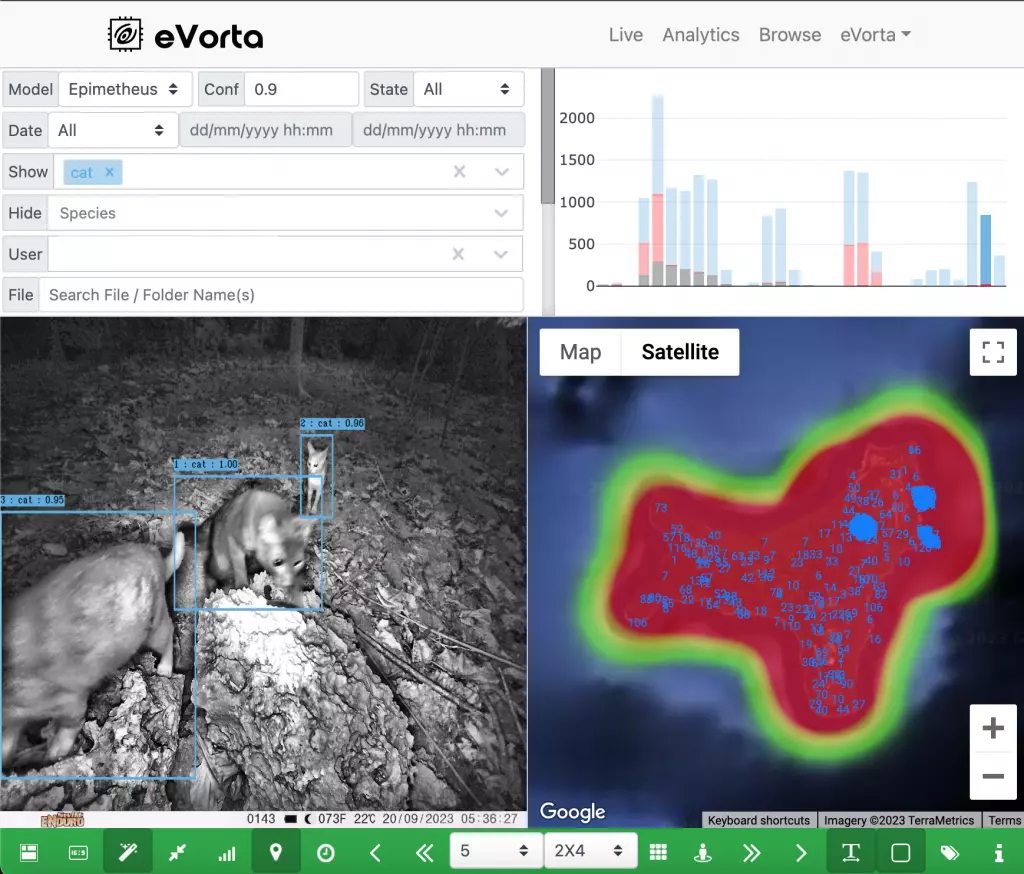

eVorta

Connected camera network with cloud-based AI capabilities (for detecting and classifying Australian wildlife).

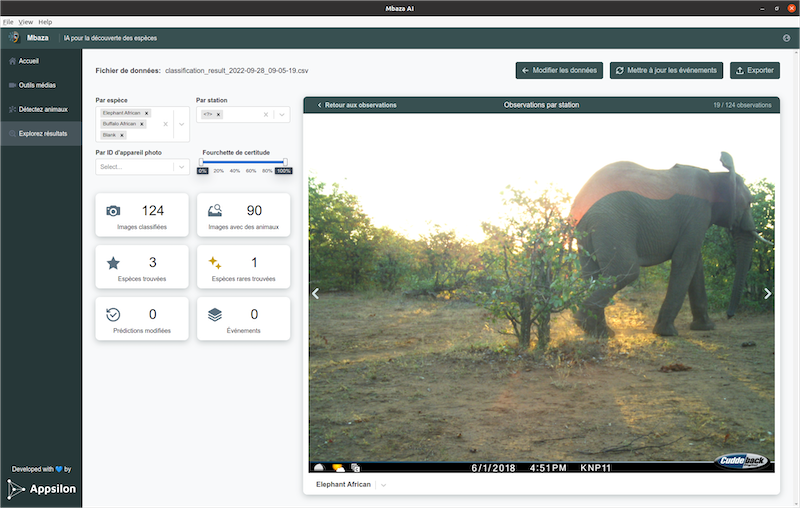

Mbaza

Open-source, client-side Shiny app that includes image review and client-side classifiers for two African ecosystems. Code is here. More information here, here, and here.

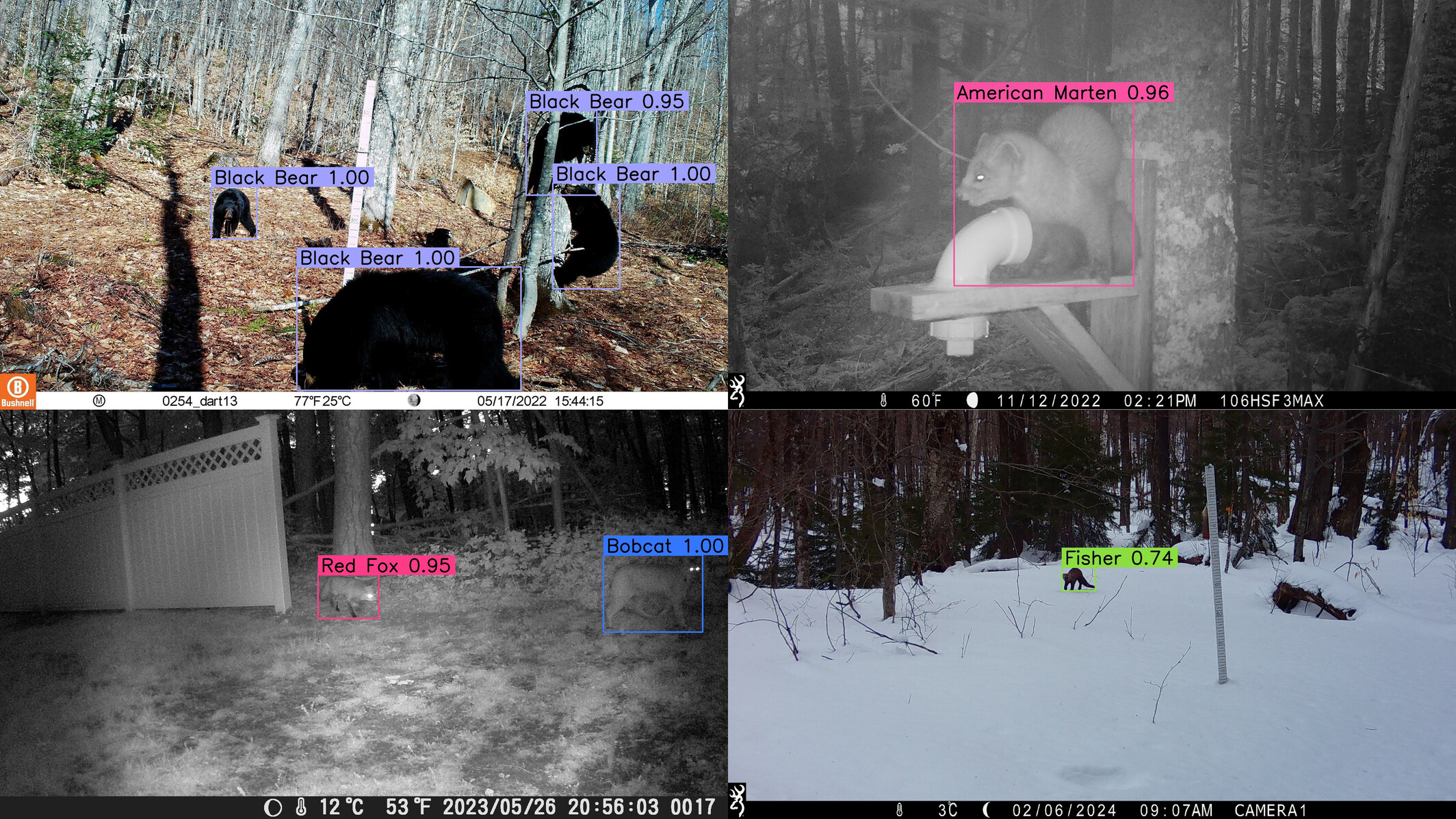

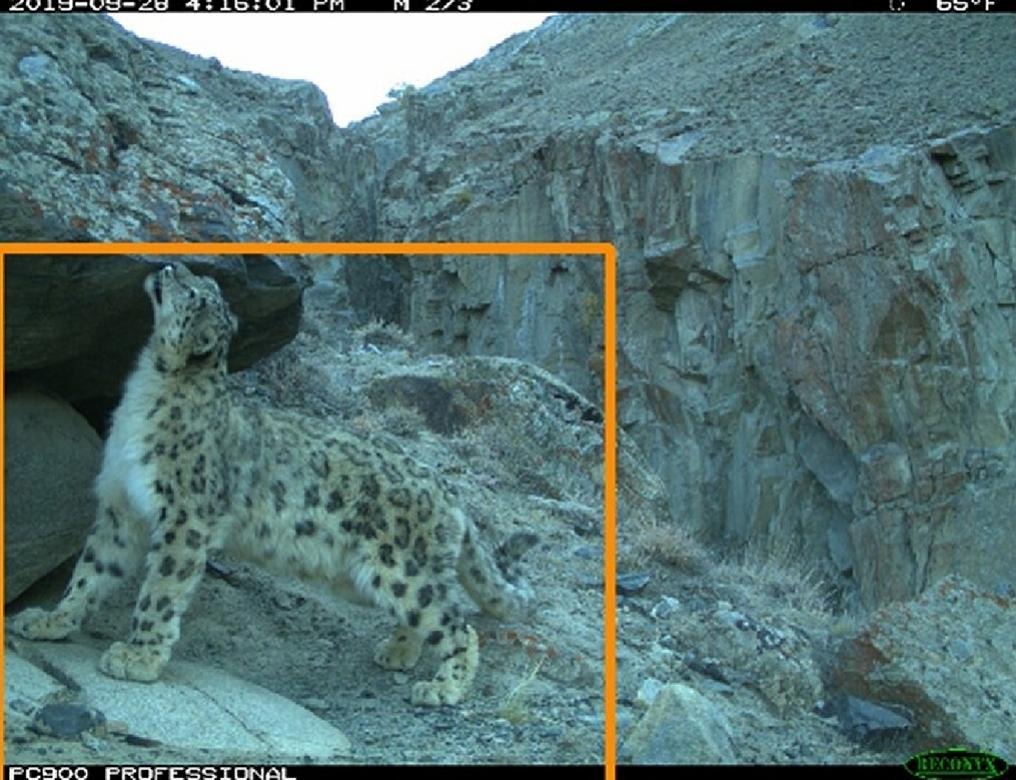

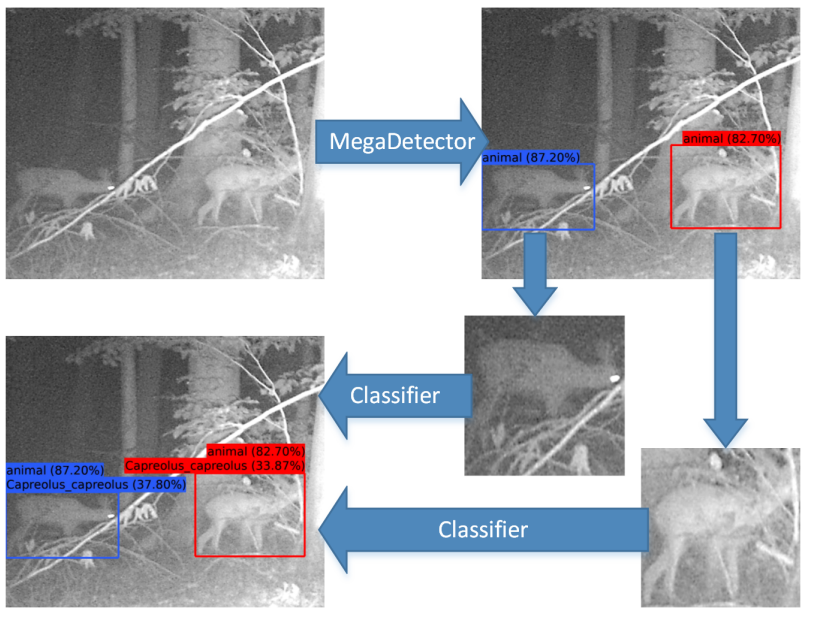

MegaDetector

This is not a “platform” or “system” in the same sense as the other items on this list, but the tooling around it is almost a “system”, so for this list, I’m upgrading MegaDetector to “system”. Full disclosure: I maintain this project.

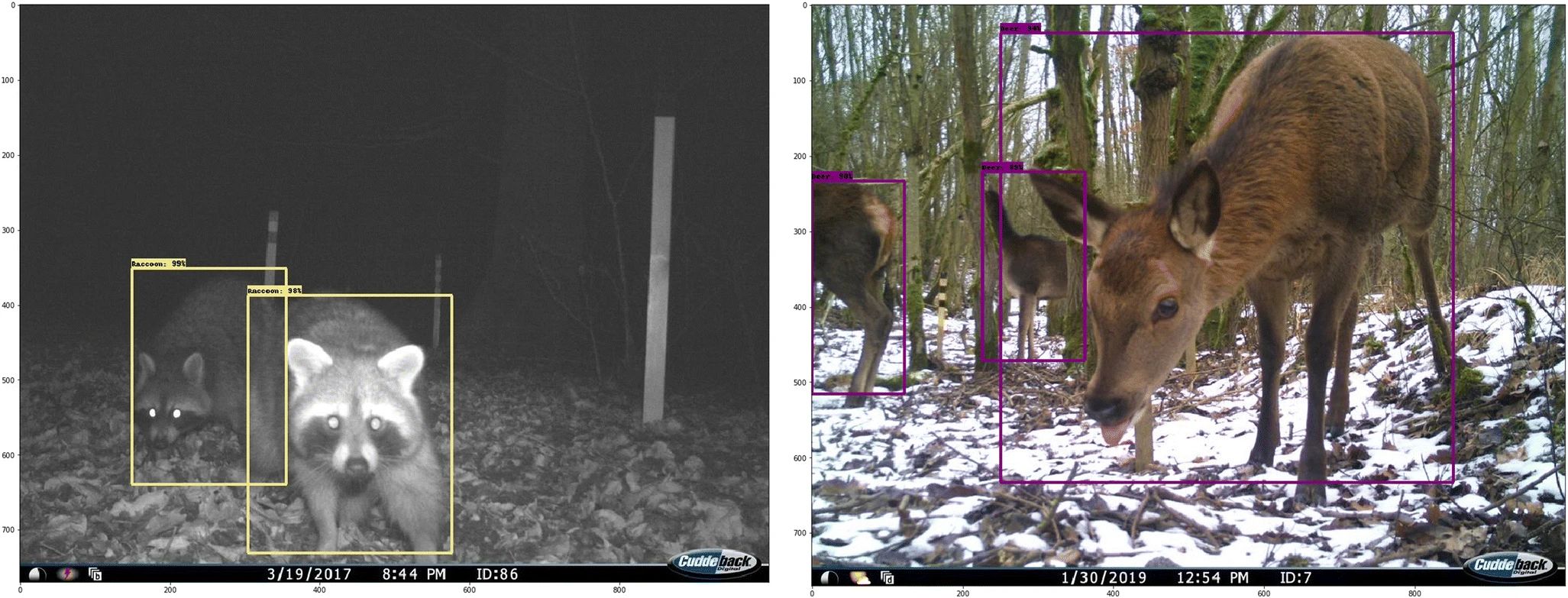

MegaDetector is an object detection model that is used to identify camera trap images that contain animals, people, vehicles, or none of the above; in practice, it’s primarily used to eliminate blank images from large camera trap surveys. The GitHub repo provides Python scripts to run MegaDetector and do stuff with the output, and the MegaDetector Python package wraps that up in pip-installable format. MegaDetector (or its output) has also been integrated into most of the other items on this list.
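To make the blank-elimination use case concrete, here is a minimal sketch of consuming MegaDetector batch output. It assumes the results-file shape as I understand it: an `images` list whose entries carry a `file` path and a `detections` list (each detection has a `category` ID, a `conf` score, and a normalized `bbox`), a `failure` field on images that couldn't be processed, and a `detection_categories` map from IDs to names. Check the MegaDetector repo's output-format documentation before relying on this. The function names and the 0.2 threshold are hypothetical choices for illustration, not recommended values.

```python
import json
from typing import Dict, List

# Hypothetical threshold for illustration only; appropriate values depend
# on the MegaDetector version and the data set.
DEFAULT_THRESHOLD = 0.2


def load_md_results(path: str) -> dict:
    """Load a MegaDetector batch-output JSON file."""
    with open(path, "r", encoding="utf-8") as f:
        return json.load(f)


def triage_md_results(md_results: dict,
                      threshold: float = DEFAULT_THRESHOLD) -> Dict[str, List[str]]:
    """Sort image paths into blank / non_blank / has_person / failed.

    An image is non-blank if any detection meets the threshold; has_person is
    the subset of non-blank images with a confident "person" detection.
    """
    categories = md_results.get("detection_categories", {})
    triage: Dict[str, List[str]] = {"blank": [], "non_blank": [], "has_person": [], "failed": []}
    for image in md_results.get("images", []):
        if image.get("failure"):
            # Images that could not be processed are kept aside for manual review
            triage["failed"].append(image["file"])
            continue
        confident = [d for d in (image.get("detections") or []) if d["conf"] >= threshold]
        if not confident:
            triage["blank"].append(image["file"])
            continue
        triage["non_blank"].append(image["file"])
        # Map category IDs ("1", "2", ...) to names ("animal", "person", ...)
        names = {categories.get(d["category"], d["category"]) for d in confident}
        if "person" in names:
            triage["has_person"].append(image["file"])
    return triage


# Toy input in the assumed shape of a MegaDetector results file
example = {
    "detection_categories": {"1": "animal", "2": "person", "3": "vehicle"},
    "images": [
        {"file": "a.jpg", "detections": [{"category": "1", "conf": 0.91, "bbox": [0.1, 0.2, 0.3, 0.4]}]},
        {"file": "b.jpg", "detections": [{"category": "1", "conf": 0.05, "bbox": [0.5, 0.5, 0.1, 0.1]}]},
        {"file": "c.jpg", "detections": [{"category": "2", "conf": 0.80, "bbox": [0.0, 0.1, 0.2, 0.6]}]},
        {"file": "d.jpg", "detections": []},
        {"file": "e.jpg", "failure": "image could not be read"},
    ],
}

if __name__ == "__main__":
    print(triage_md_results(example))
    # {'blank': ['b.jpg', 'd.jpg'], 'non_blank': ['a.jpg', 'c.jpg'], 'has_person': ['c.jpg'], 'failed': ['e.jpg']}
```

With a real file, you would call `triage_md_results(load_md_results("results.json"))` (path hypothetical). The `has_person` list is one way to set aside images of people before sharing data.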

OCAPI

Web-based platform for camera trap data management, uses MegaDetector and a custom classifier for European taxa (class list here).

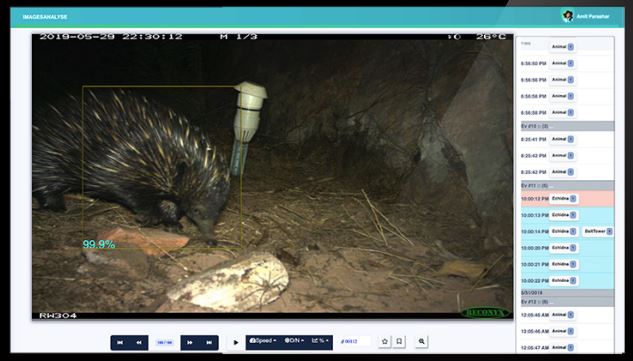

Reconyx connect

Reconyx mostly makes cameras, but their mobile app includes what appears to be cloud-based AI for classifying deer/buck/doe/turkey/human/vehicle in images from connected cameras (video).

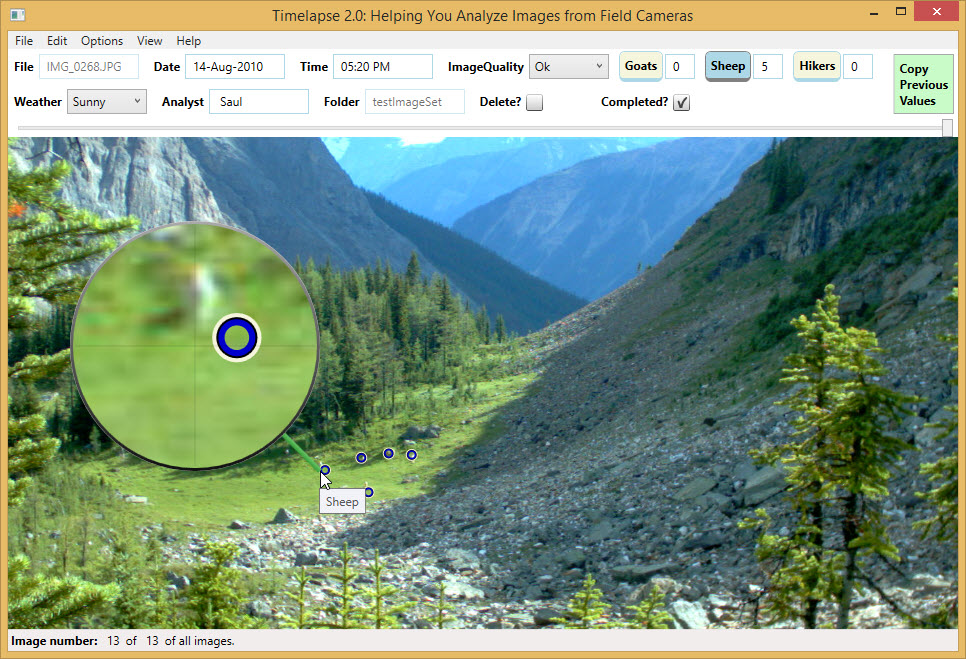

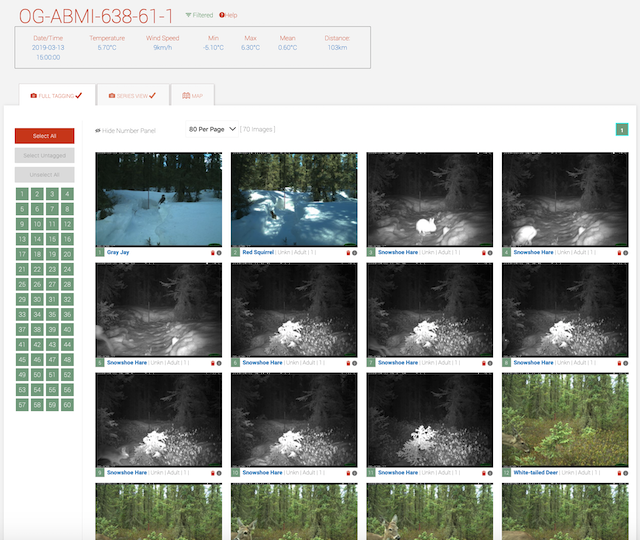

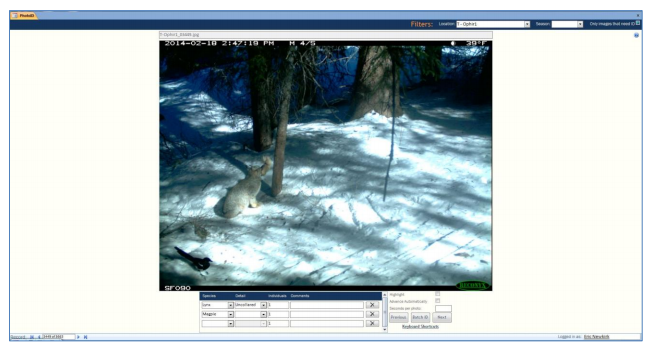

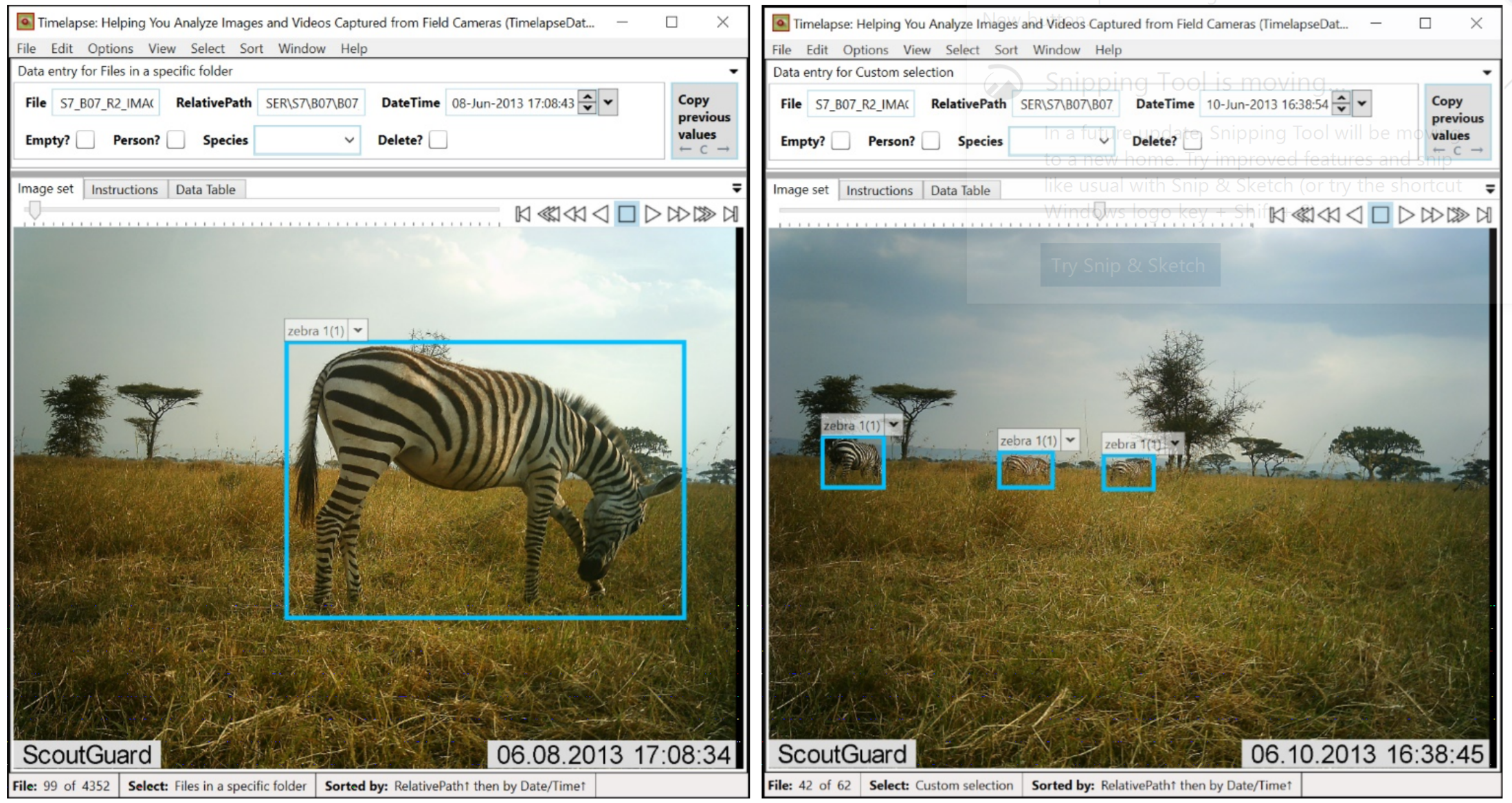

Timelapse2

Thick-client, .NET-based tool for reviewing camera trap images. Incorporates ML in the sense that it has integrated the output from MegaDetector and associated species classifiers.

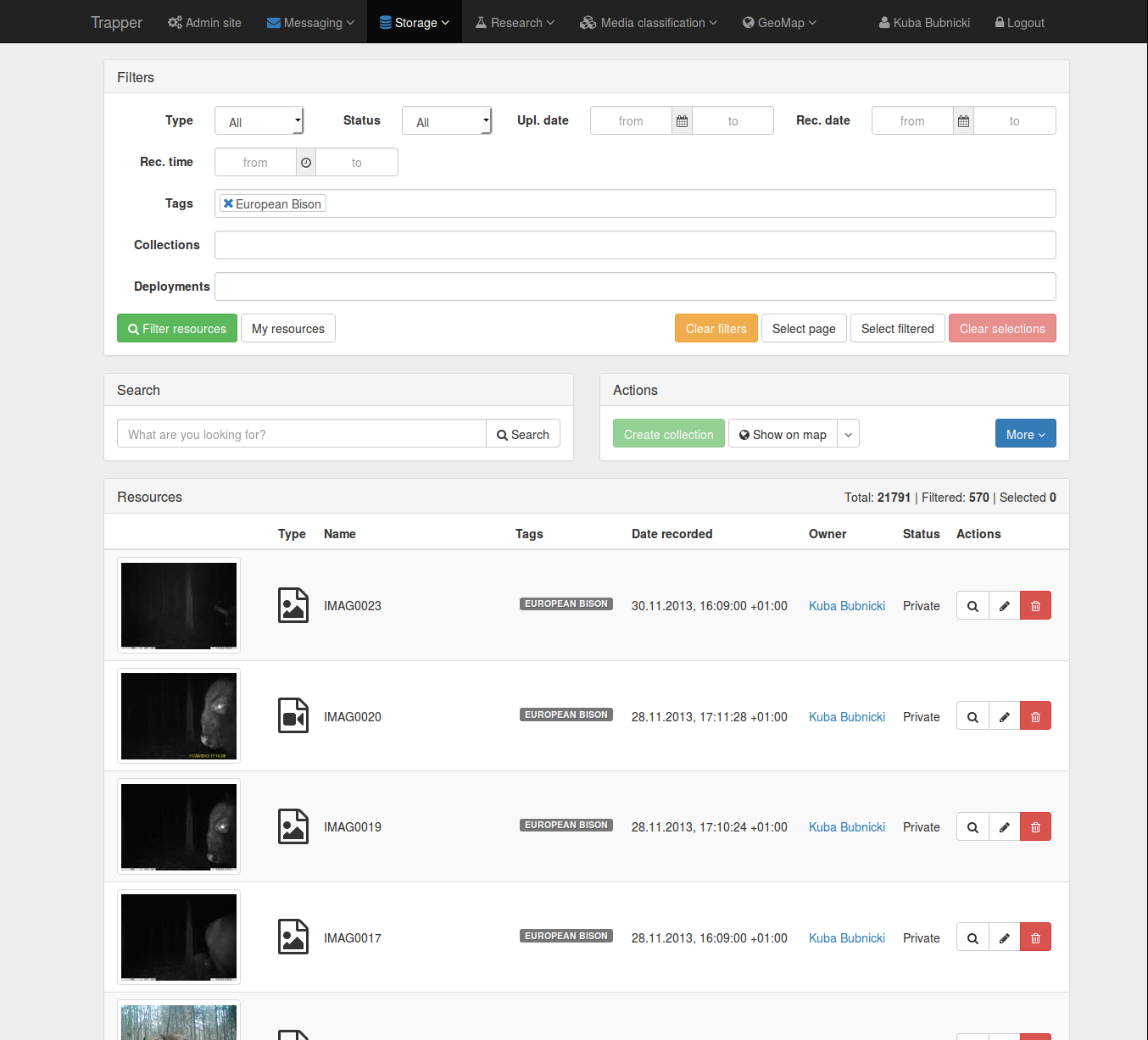

Trapper

Open-source system; interaction is via a browser, and data is stored in Postgres. Can be hosted either locally or on a Linux VM. Offers several AI models, including MD (see the Trapper AI module).

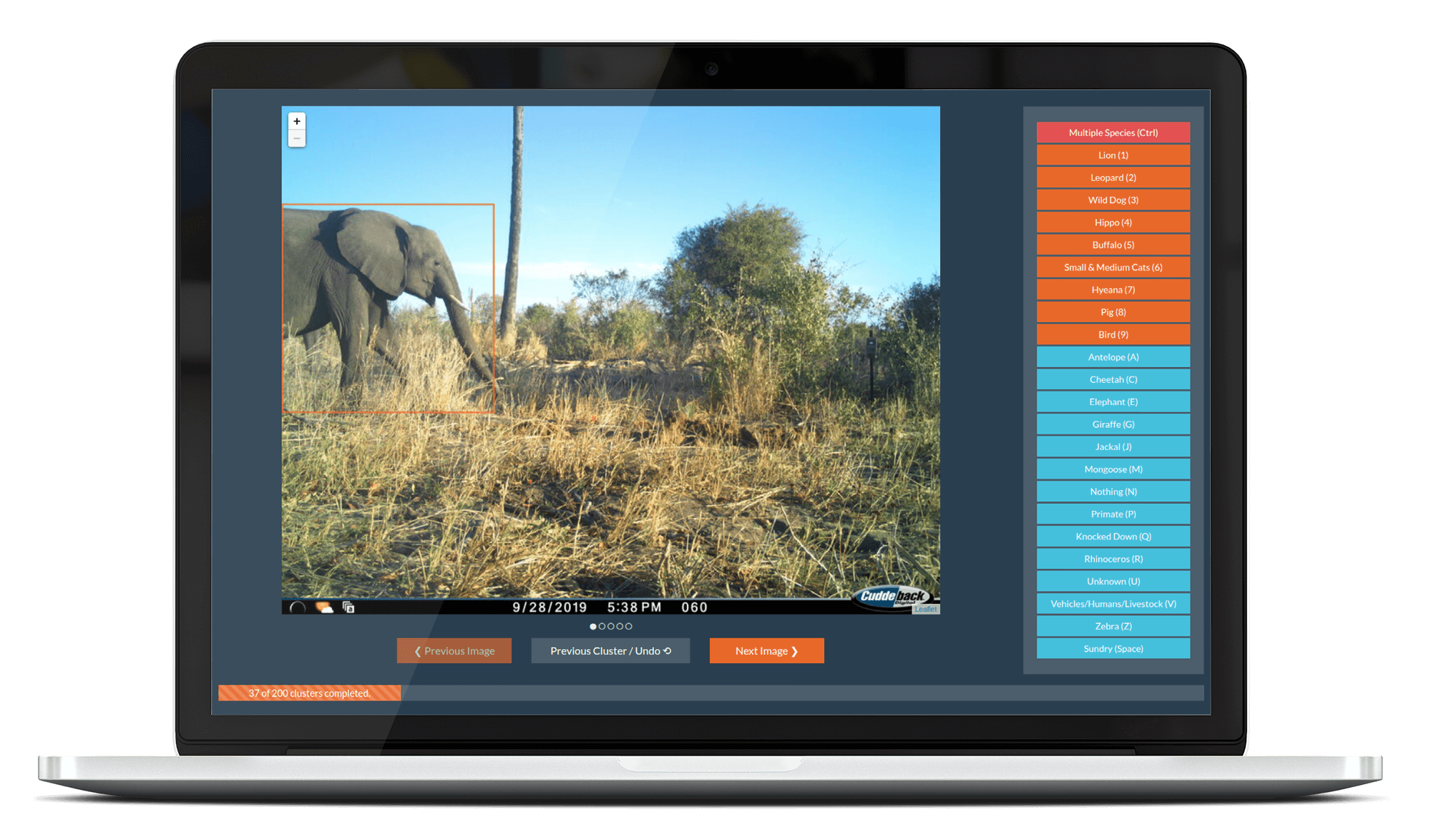

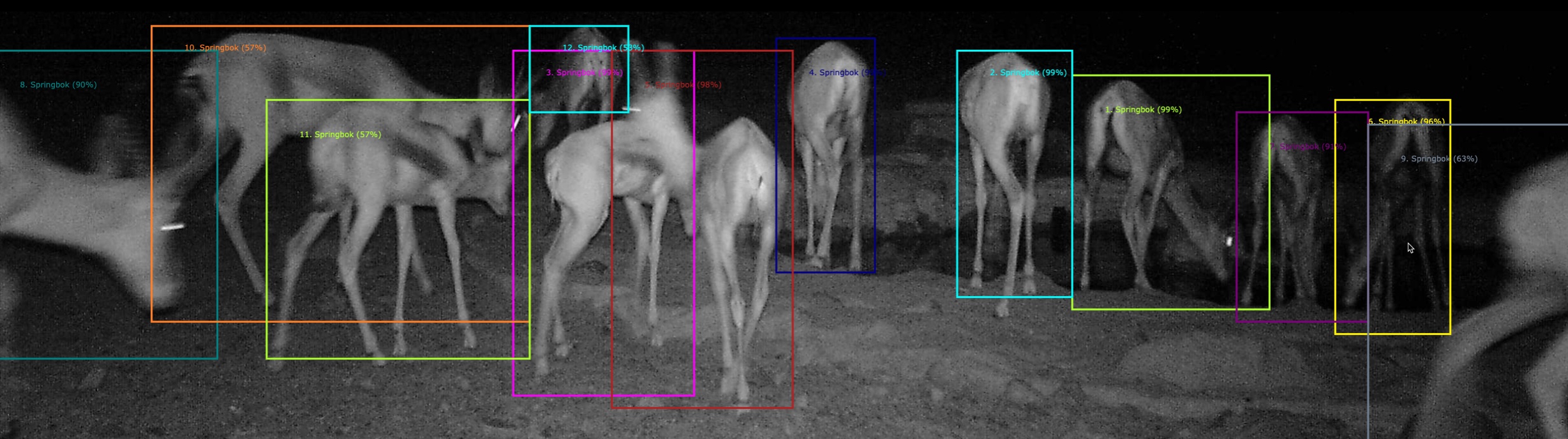

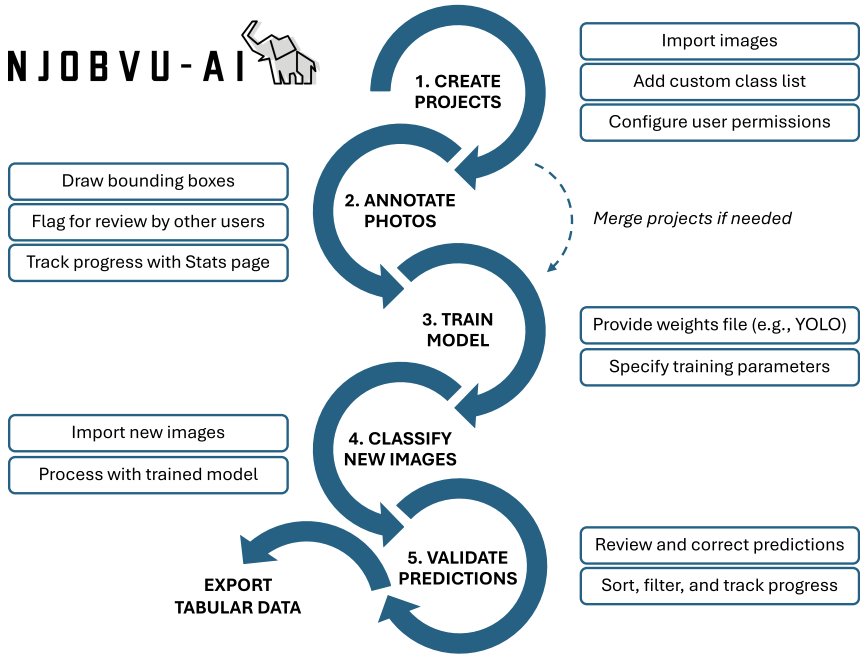

TrapTagger

A free, open-source online platform for camera trap data management that includes AI (for blank elimination and species classification), integration with HotSpotter for individual identification, and spatiotemporal analysis.

TrapTracker

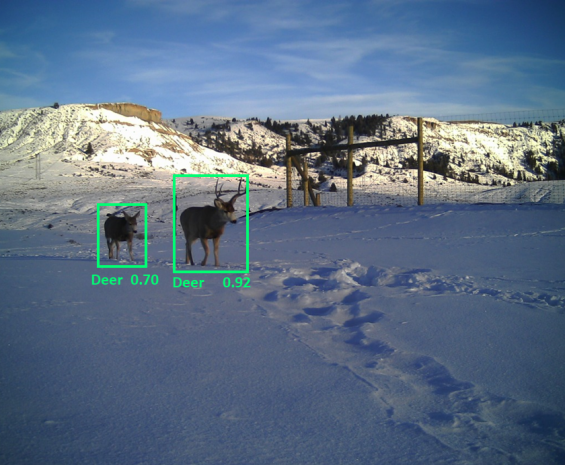

Includes a family (~10) of region-specific object detection models for camera traps and drones. Model selection and data upload are done with a desktop client.

NB: that information is based on the previous incarnation of this platform, when it was called “Conservation AI”. IIUC the broader program/organization is still called “Conservation AI”, but the camera trap platform was renamed to “TrapTracker” in 2026.

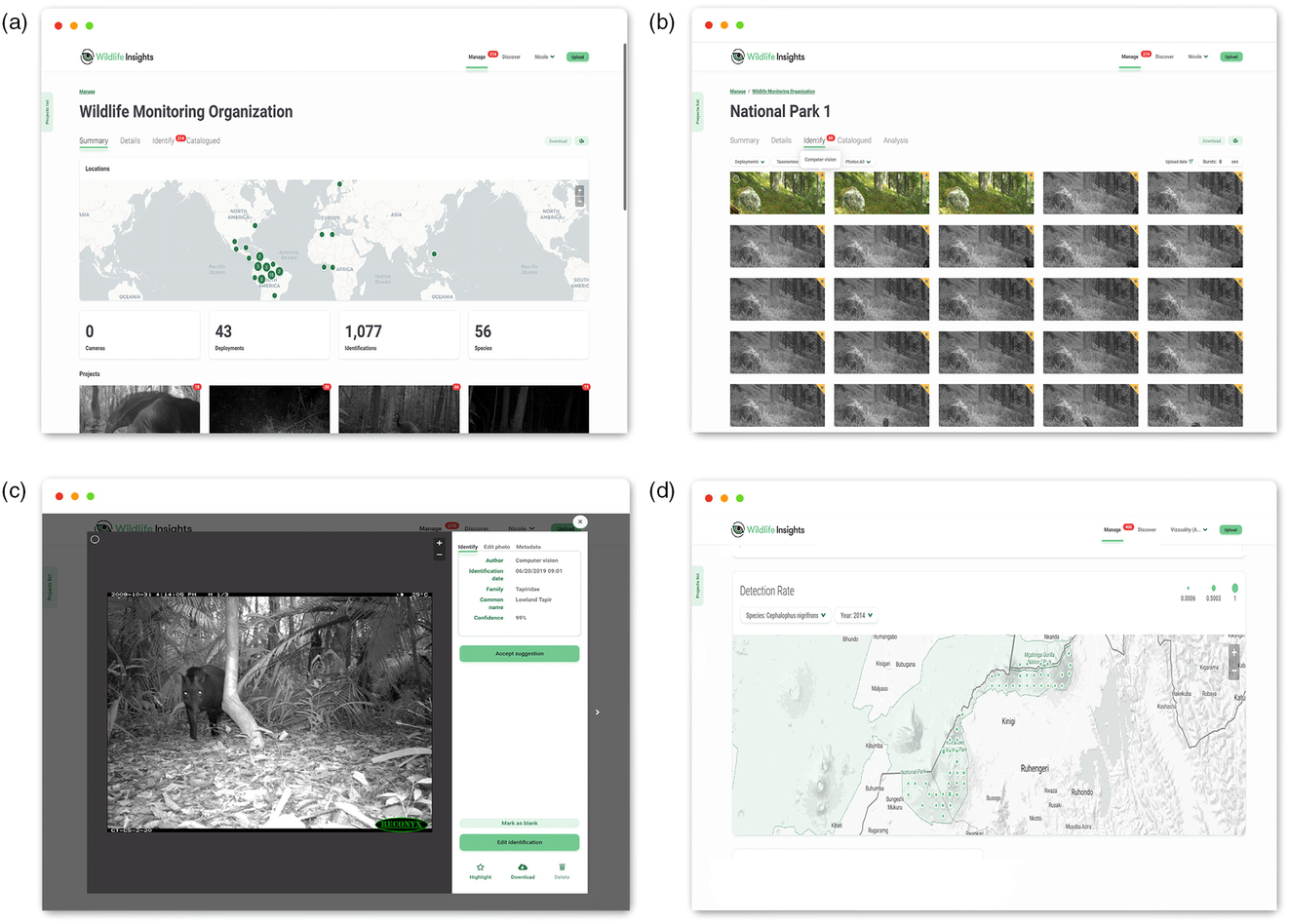

Wildlife Insights

Wildlife Insights (WI) is a platform for camera trap image management that includes AI-accelerated annotation, as well as data management and spatial analysis tools. WI is a collaboration among several NGOs, HQ’d at WildMon, with an AI component developed at Google.

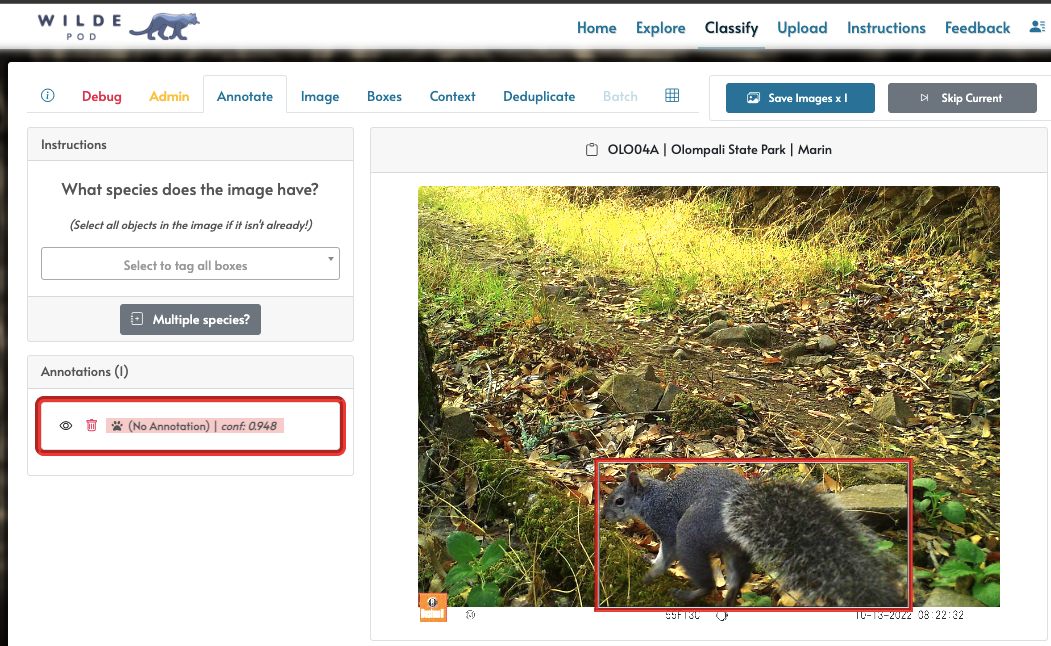

WildePod

Web-based image management platform built for the Felidae Conservation Fund. Uses MD for empty/animal/human/vehicle categorization.

WildObs

Australia-specific deployment of Agouti, supporting SpeciesNet, MD, and several Australia-specific models (e.g. WildObs Wet Tropics, WildObs National, AWC135).

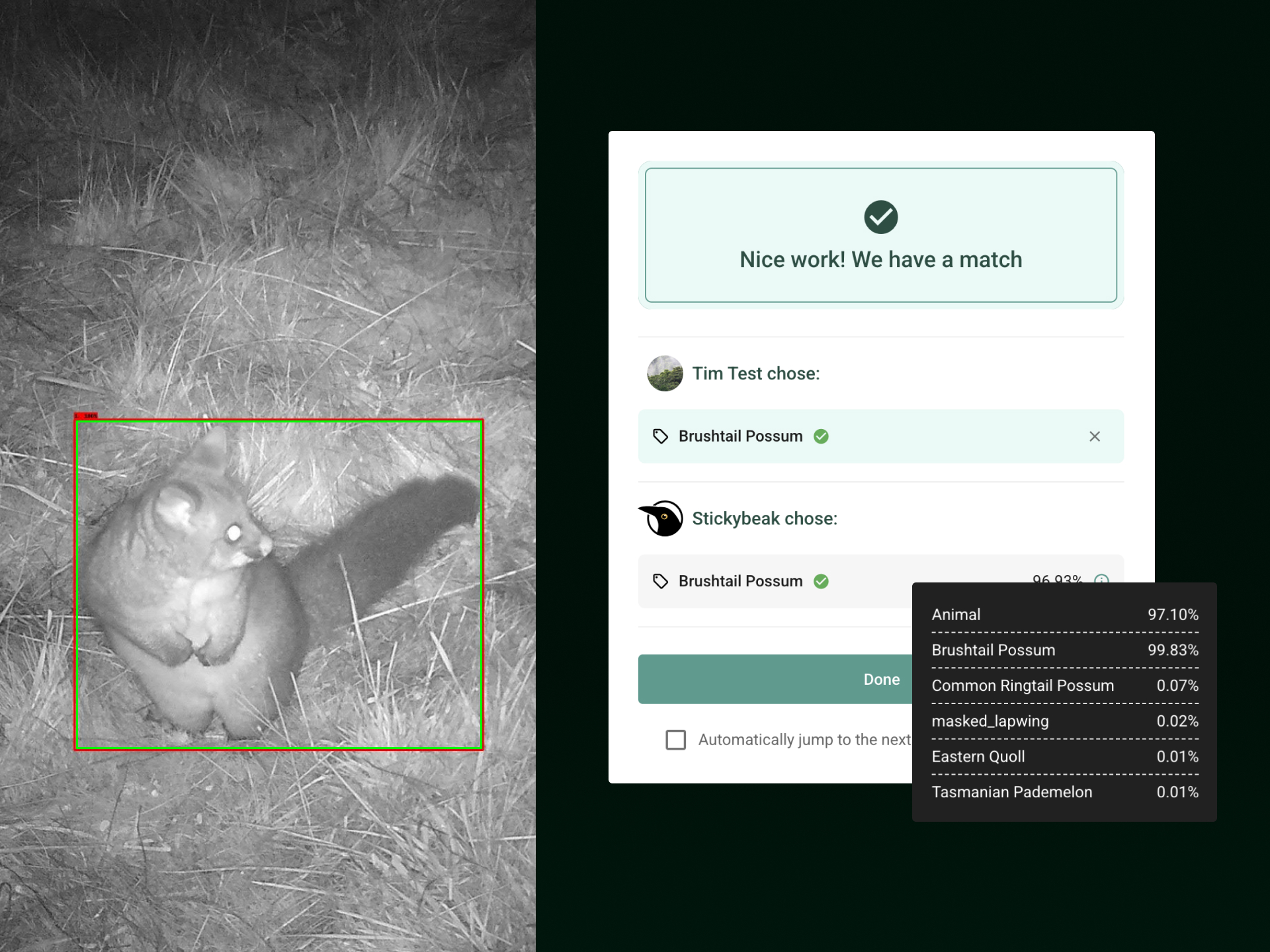

WildTracker / StickyBeak AI

https://wildtracker.com.au/

https://wildtracker.com.au/stickybeak-ai/

“WildTracker is a tool for private landholders to upload, tag, and share camera trap images of Tasmania’s exceptional wildlife.”

“Stickybeak is our AI-powered tool that helps landholders and citizen scientists process thousands of wildlife camera images more efficiently.”

Uses MDv1000-redwood, along with a Tasmania-specific classifier, via MEWC.

news story, developer’s project page

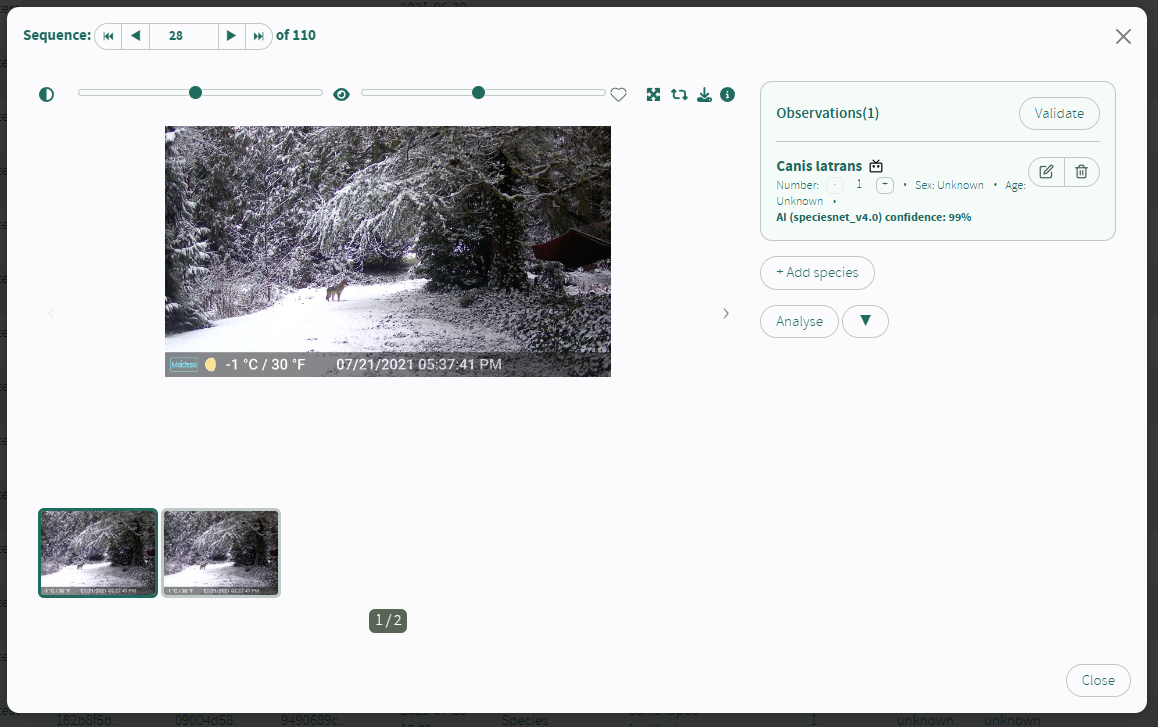

WildTrax

An online platform for camera trap data management that includes automated blank/non-blank elimination (using MegaDetector), species classification (as of the time I’m writing this, two models are available: (a) MegaClassifier, and (b) a platform-specific cattle/non-cattle model). Also manages acoustic data, with some AI functionality for acoustic data as well.

wpsWatch

Wildlife Protection Solutions deploys connected cameras in protected areas to detect and combat poaching; wpsWatch is their monitoring platform, which leverages AI for both human/animal/vehicle detection (using MegaDetector) and species classification.

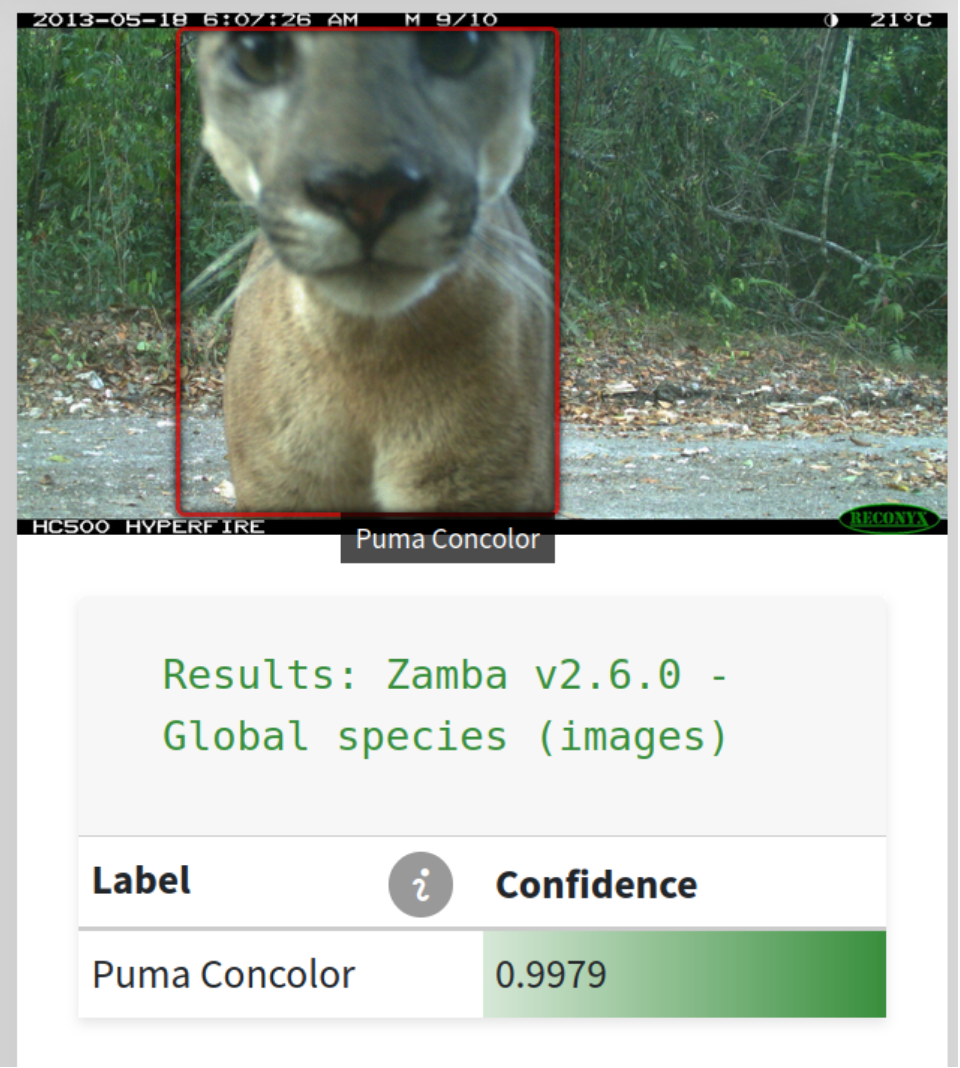

Zamba Cloud

Cloud-based platform for no-code training of image and video classification models. Explanatory video here.

They also maintain a Python package that includes some of Zamba Cloud’s functionality.

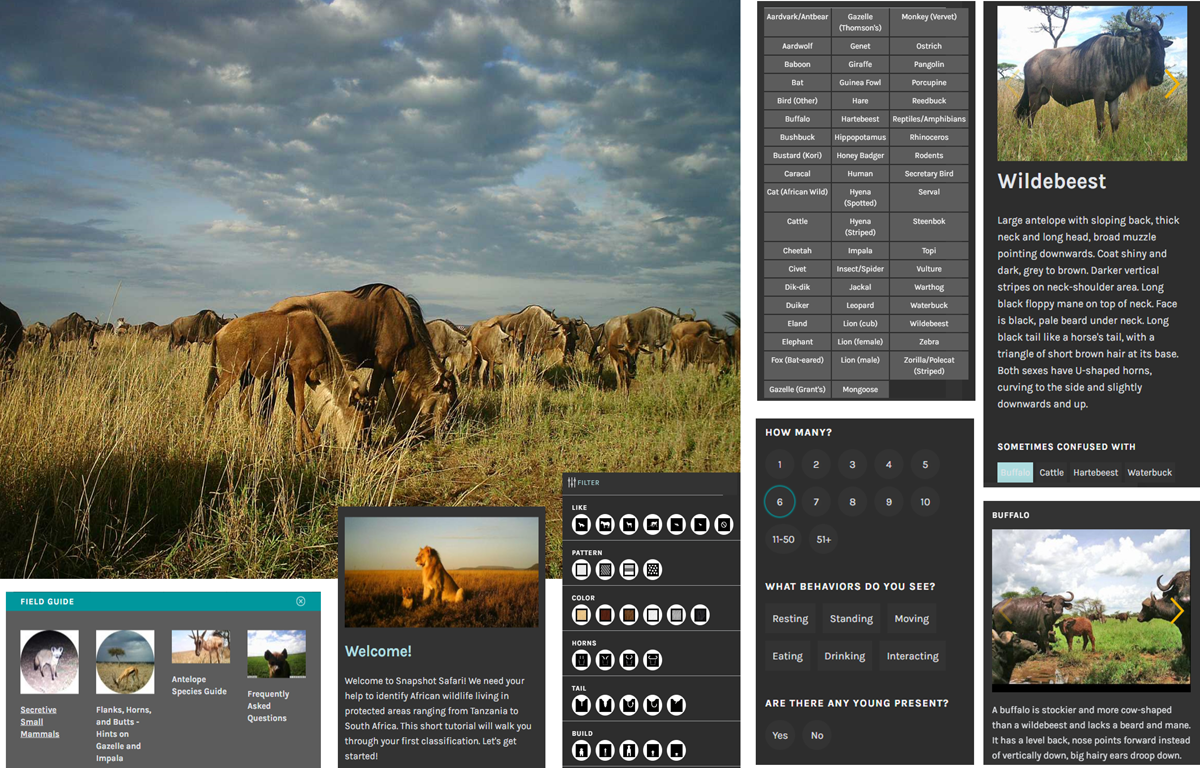

Zooniverse

This is a bit of an outlier on this list… Zooniverse is a platform for engaging human volunteers for data labeling. It doesn’t explicitly use ML, and it isn’t explicitly about camera traps, but here’s my rationalization for including it here:

- By some arbitrary way of dividing projects, a weak plurality of the projects on Zooniverse are about camera traps.

- By some arbitrary way of dividing data sources, Zooniverse projects contribute more data to the global pool of publicly-available camera trap data than any other data source (e.g. all of the Snapshot Safari projects on LILA began life on Zooniverse).

- Zooniverse is at least… noodling on the idea of incorporating ML for camera trap projects, e.g. see the Zooniverse subject assistant.

- I know from anecdotal interactions that lots of Zooniverse project owners use AI to (a) remove images of humans and (b) manage the number of blanks prior to uploading images to Zooniverse.

Wild.ai

Still “coming soon” as of 2026.02, keeping here for tracking.

WildID

Web-based platform for processing camera trap images, targeted for Southern Africa, that uses a custom multiclass detector. Free trial available; paid version allows larger bulk uploads.

Not to be confused with Wild.ID (a desktop tool for camera trap image processing that was used by the TEAM Network prior to Wildlife Insights) or Wild-ID (a desktop tool to accelerate the identification of individual animals).

Bounding Box Editor and Exporter (BBoxEE)

Client-side tool for semi-automated labeling of camera trap images.

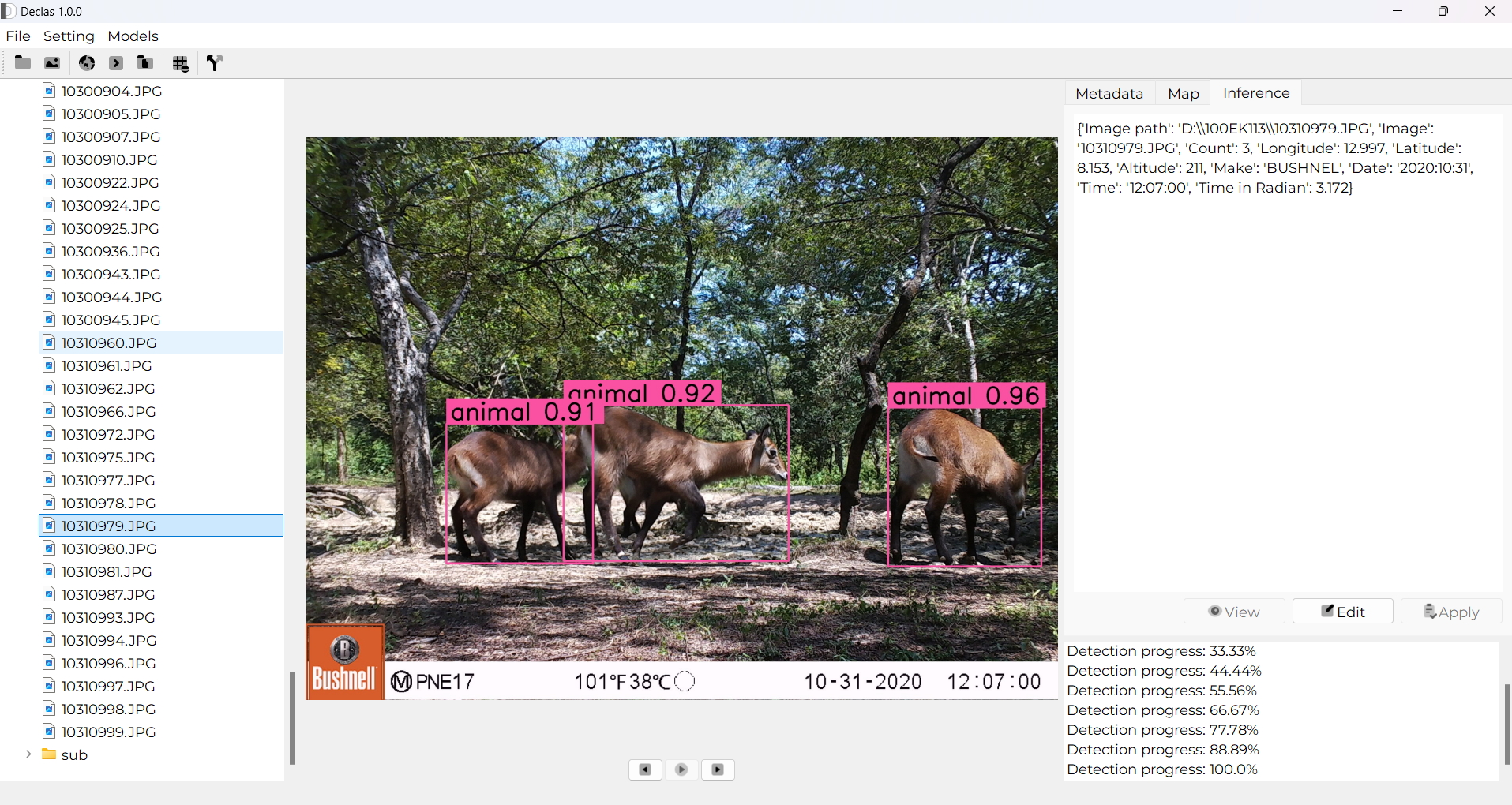

Declas

Cross-platform client-side tool for running MD and species classifiers.

Dudek AI Image Toolkit

Cloud-based platform that leverages MDv5.

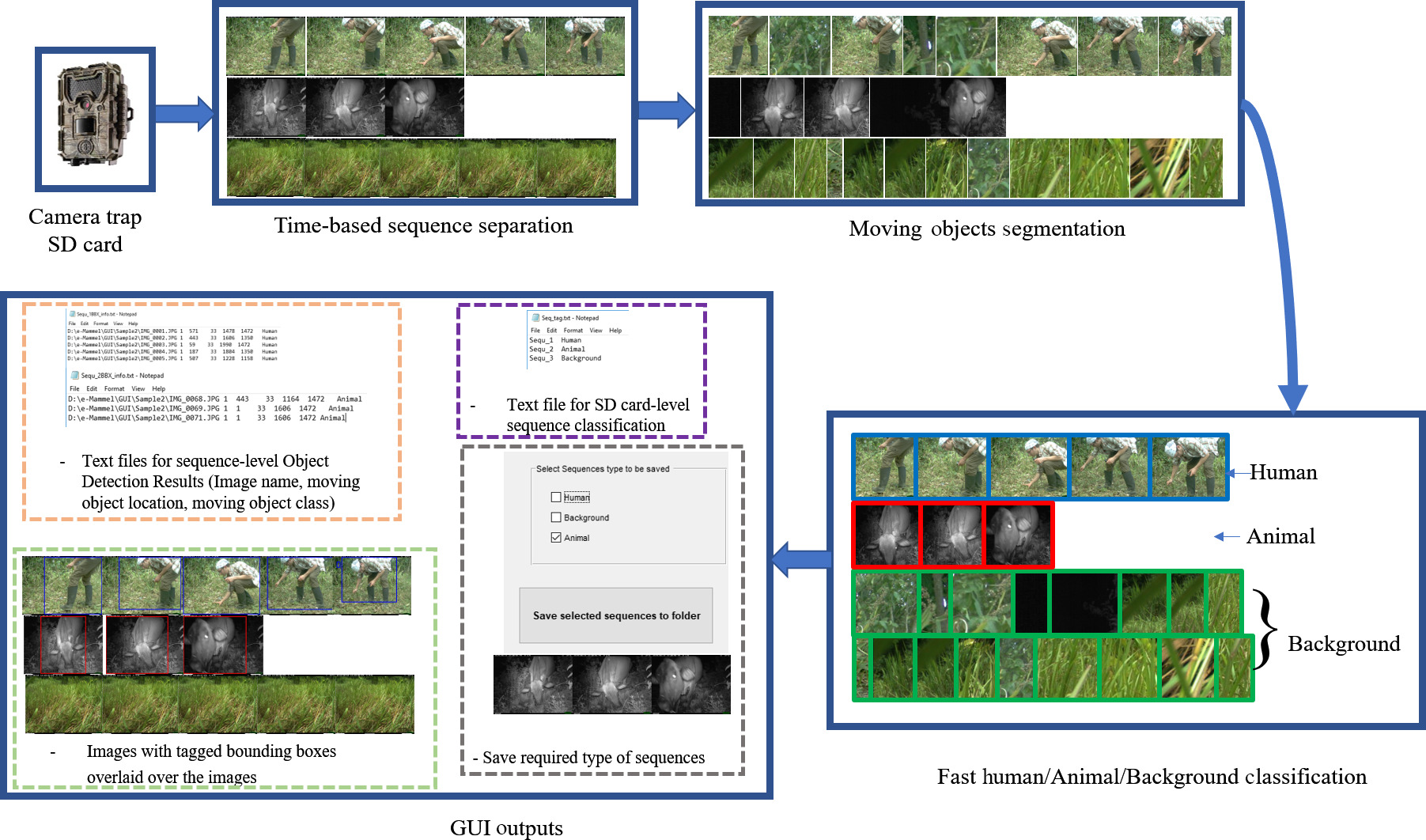

ecoSecrets

Web app for processing camera trap images. Docs refer to the image processing approach presented in Zhang et al.; MegaDetector is also mentioned in the code.

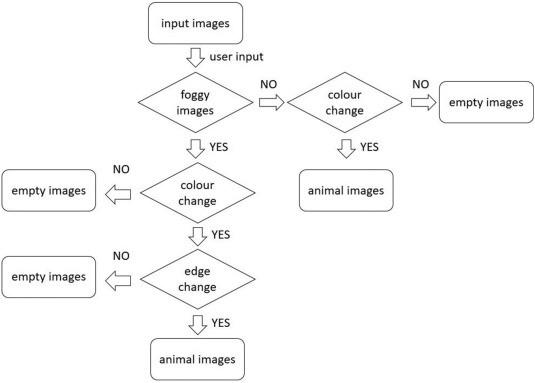

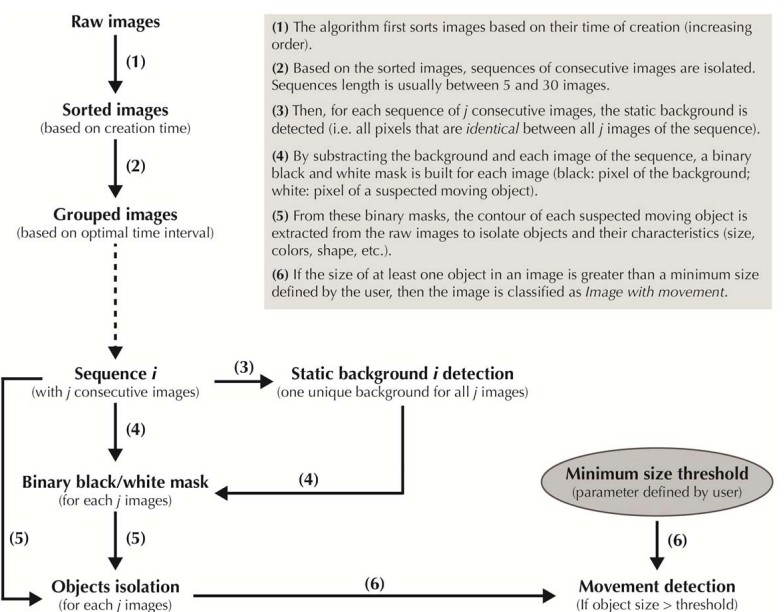

EventFinder

Java-based tool to separate empty from non-empty images using background subtraction and color histogram comparisons. Also see the associated paper. Uses MD in the “EventFinder Suite” version, released in 2024.
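EventFinder's own implementation (in Java, and also using background subtraction) isn't reproduced here. As a hedged sketch of the general color-histogram idea only, the Python below builds a joint RGB histogram for an image and for a reference background frame, then compares them with histogram intersection. The function names, the 4-bins-per-channel quantization, the 0.8 threshold, and the representation of images as plain lists of RGB tuples are all assumptions for illustration.

```python
from typing import List, Sequence, Tuple

Pixel = Tuple[int, int, int]  # (R, G, B), each 0-255


def color_histogram(pixels: Sequence[Pixel], bins_per_channel: int = 4) -> List[float]:
    """Return a normalized joint RGB histogram with bins_per_channel**3 bins."""
    bin_width = 256 // bins_per_channel
    hist = [0.0] * (bins_per_channel ** 3)
    for r, g, b in pixels:
        # Clamp so bin counts that don't divide 256 evenly stay in range
        ri = min(r // bin_width, bins_per_channel - 1)
        gi = min(g // bin_width, bins_per_channel - 1)
        bi = min(b // bin_width, bins_per_channel - 1)
        hist[(ri * bins_per_channel + gi) * bins_per_channel + bi] += 1
    total = len(pixels)
    return [count / total for count in hist] if total else hist


def histogram_intersection(h1: Sequence[float], h2: Sequence[float]) -> float:
    """Similarity in [0, 1] for normalized histograms (1.0 means identical)."""
    return sum(min(a, b) for a, b in zip(h1, h2))


def looks_empty(image: Sequence[Pixel], background: Sequence[Pixel],
                threshold: float = 0.8, bins_per_channel: int = 4) -> bool:
    """Flag an image as probably empty if its colors closely match the background."""
    similarity = histogram_intersection(
        color_histogram(image, bins_per_channel),
        color_histogram(background, bins_per_channel),
    )
    return similarity >= threshold


# Toy 100-pixel "images": a green background, and the same scene with a brown animal
background = [(10, 120, 30)] * 100
mostly_background = [(10, 120, 30)] * 95 + [(150, 100, 50)] * 5
with_animal = [(10, 120, 30)] * 60 + [(150, 100, 50)] * 40

print(looks_empty(mostly_background, background))  # True  (similarity 0.95)
print(looks_empty(with_animal, background))        # False (similarity 0.6)
```

Histograms ignore where pixels are, which makes this kind of comparison tolerant of small shifts but blind to an animal whose colors match the background; that is one reason to pair it with something like background subtraction.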

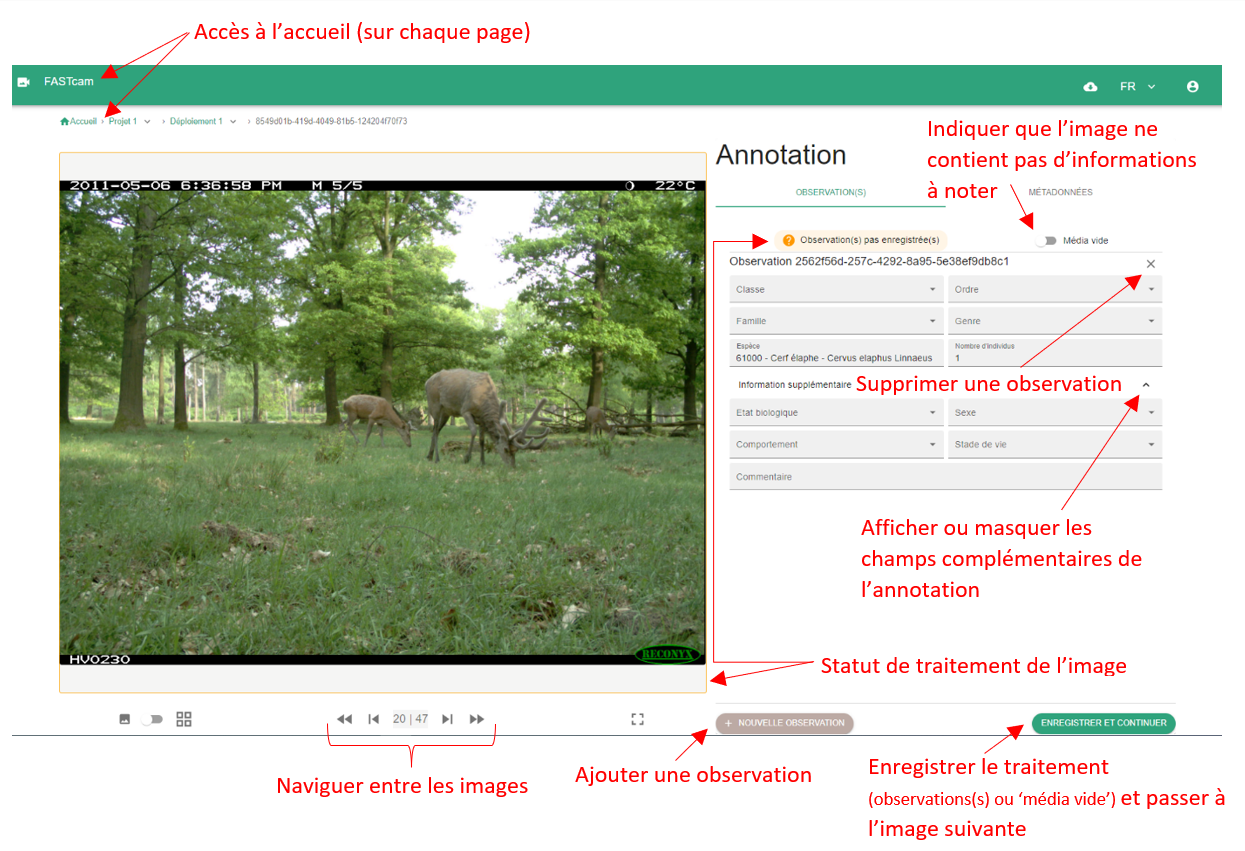

FASTCAT-Cloud

Online platform with several custom detectors for European wildlife, trained on GBIF data, which can be accessed via a Web demo or an API. Integrated with iSpot (an iNat-like platform for biodiversity observation logging). Also has a human/blank model, but details are not available on the Web page.

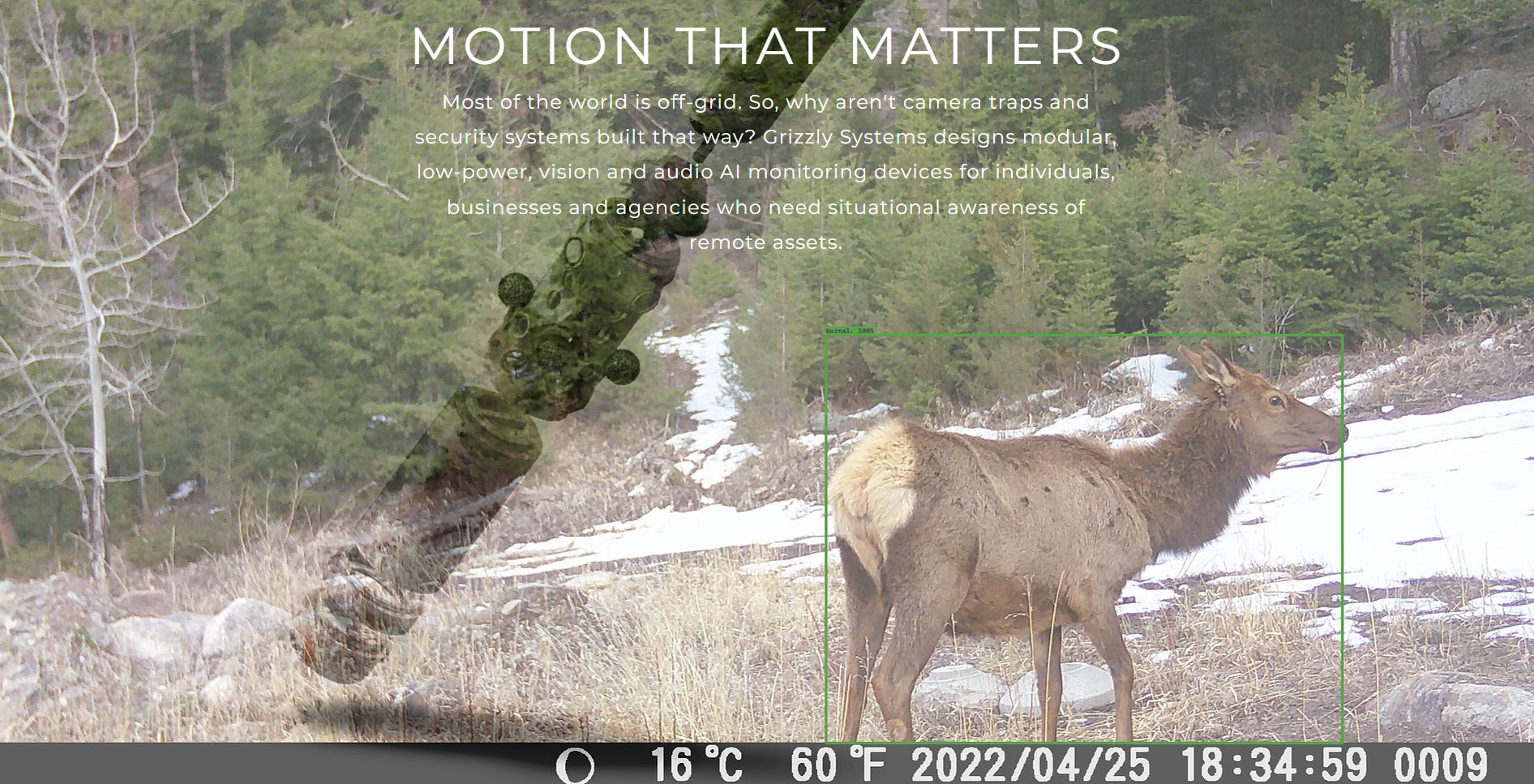

Grizzly Systems

They describe a device with edge inference capability (“smart trigger”) and mesh networking, and AI-enabled software tools that run locally or in the cloud.

PantheraIDS (Integrated Data System)

Image management and analysis software used at Panthera; includes machine learning functionality for blank removal, species classification, and individual ID.

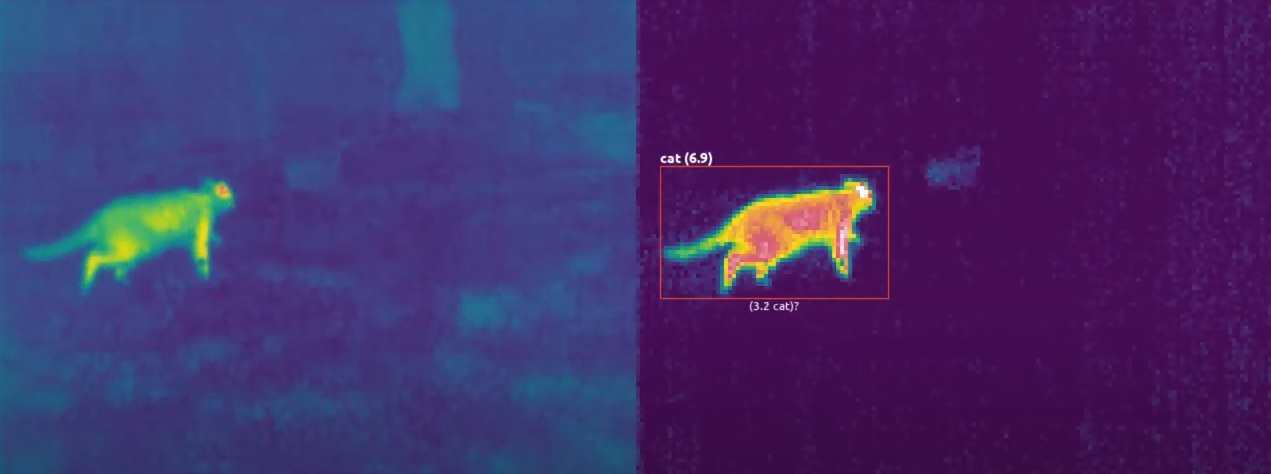

Cacophony Project (2040) Thermal Predator Camera

Thermal camera with a cloud-based AI service.

Systems that appear to be less active

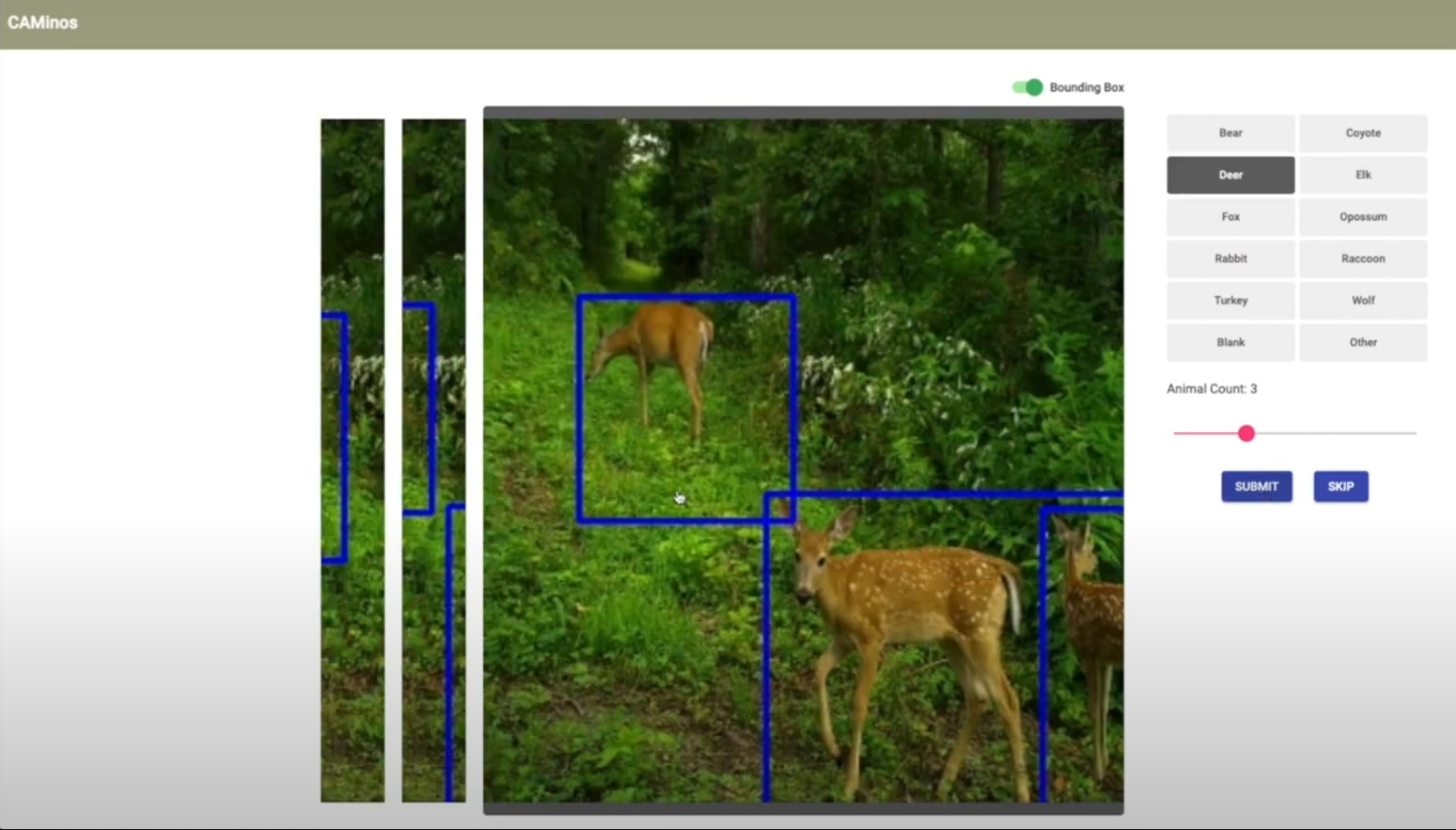

CAMinos

https://www.ischool.berkeley.edu/projects/2021/caminos-intelligent-trail-camera-annotation

This looks to have been a student project, so normally I would say it’s unfair to call it “inactive”, but the page and video are so slick by the standards of student projects that my intention is to give it props by including it here.

Online annotation tool that combines MDv5 with a species classifier.

Where’s the Bear?

IoT system with computer vision pieces for managing camera traps, currently in Southern California. They refer to having processed over 1.12M images, and have used Inception with some clever synthetic data generation to get pretty good results.

“…is deployed at the UCSB Sedgwick Reserve, a 6000 acre site for environmental research and used to aggregate, manage, and analyze over 1.12M images.”

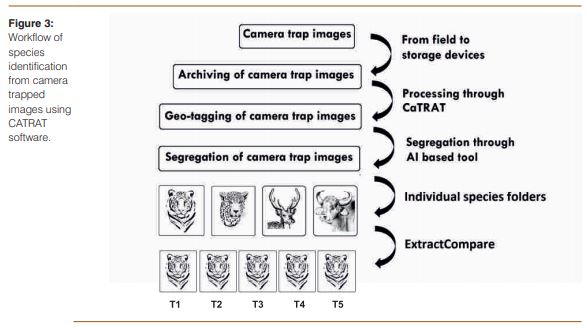

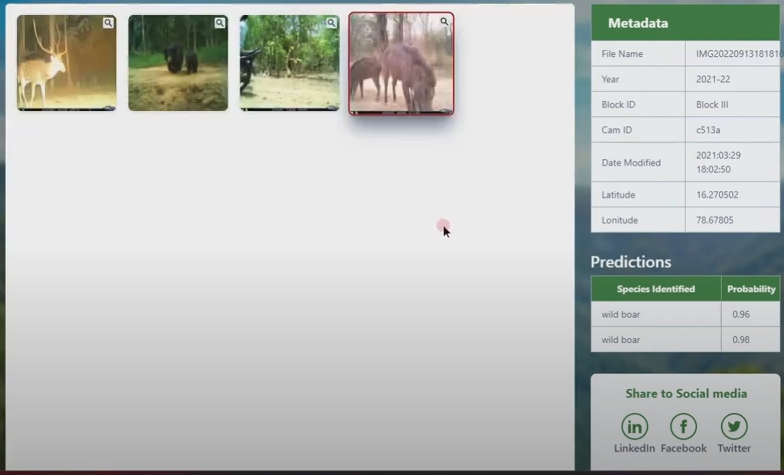

Wildlife Institute of India CaTRAT

https://wii.gov.in/publications/software/tiger-monitoring-software

CaTRAT (Camera Trap Data Repository and Analysis Tool) is an internal tool used by the Wildlife Institute of India, the National Tiger Conservation Authority, and regional wildlife authorities to accelerate the processing of camera trap images, with a focus on population surveys for tigers and snow leopards. Not a lot of information is publicly available, but this paper suggests that CaTRAT uses ExtractCompare for individual tiger identification. This article suggests that HotSpotter is used as well, but I can’t verify that anywhere else. (June 2025 story, July 2025 story)

MooseDar

Thermal-camera-based system that uses CNNs to detect moose, for accident prevention.

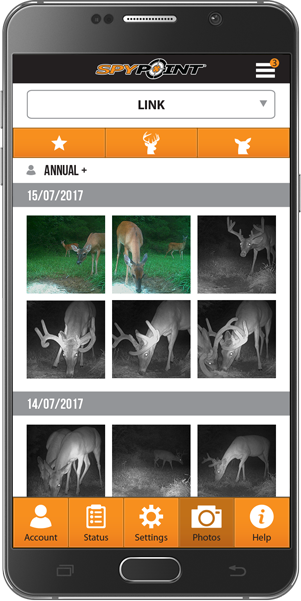

BuckTracker

https://www.spypoint.com/us/en/blog?id=298&topic=les-avantages-du-filtre-d-espces-buck-tracker-a.i

App associated with SpyPoint trail cameras, allowing users to filter photos by species for consumer hunting applications.

Systems that appear not to exist any more

This assessment is based mostly on 404s; please let me know if I’ve missed the boat on any of these having moved somewhere I couldn’t find them.

Wildlife Observer Network Image ID+

Web-based system that takes a zipfile of camera trap images and produces an estimate of the presence/number of animals in each image.

CAIMAN

Cloud-based, AI-enabled system for camera trap image processing. Integrated with online spatial analysis tools (Focus and WildCAT). Listed as “available by the end of 2021”, but not yet available as of 10/2024.

DeCaTron

AI-driven tool for camera trap image review, with spatial analytics.

RECONN.AI

Cloud-based tool that includes a detector and species classifier. Documentation suggests the classifier is tailored for the American Midwest.

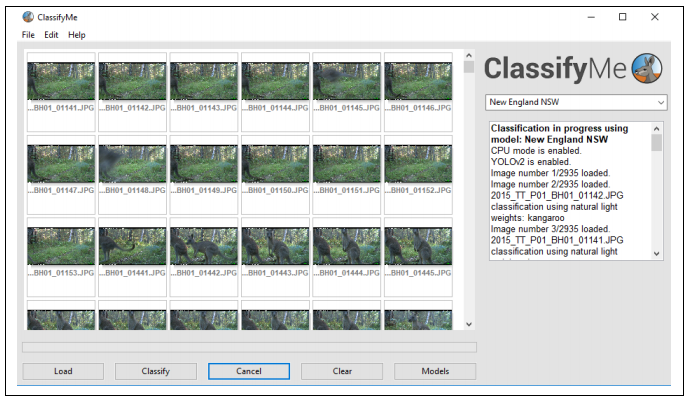

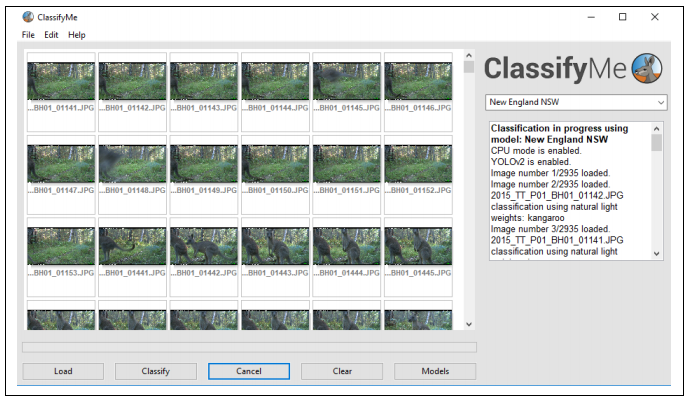

ClassifyMe

Thick-client tool that offers a menu of YOLOv2-based models. Five models are provided out of the gate, trained primarily on open data sets (Snapshot Serengeti, Caltech Camera Traps, Snapshot Wisconsin). Downloadable by request.

Mala ML

AI-accelerated review tool that advertises both browser-based and desktop experiences.

Trailcam Data

System for removing false positives from camera trap image collections. Unclear if this is automated or manual; I think manual.

OSS repos about ML for camera traps

Stratifying these based on whether they appear to be active, but this isn’t updated automatically, so if I’ve incorrectly filed a project into the “last updated a long time ago” category, please let me know!

Last updated >= 2021

- SpeciesNet R wrapper (github.com/boettiger-lab/speciesnet)

- TropiCam-AI (arboreal taxa classifier) (github.com/andrewzamp/TropiCam-AI)

- AWC Wildlife Classifier (github.com/Australian-Wildlife-Conservancy-AWC/awc-wildlife-classifier)

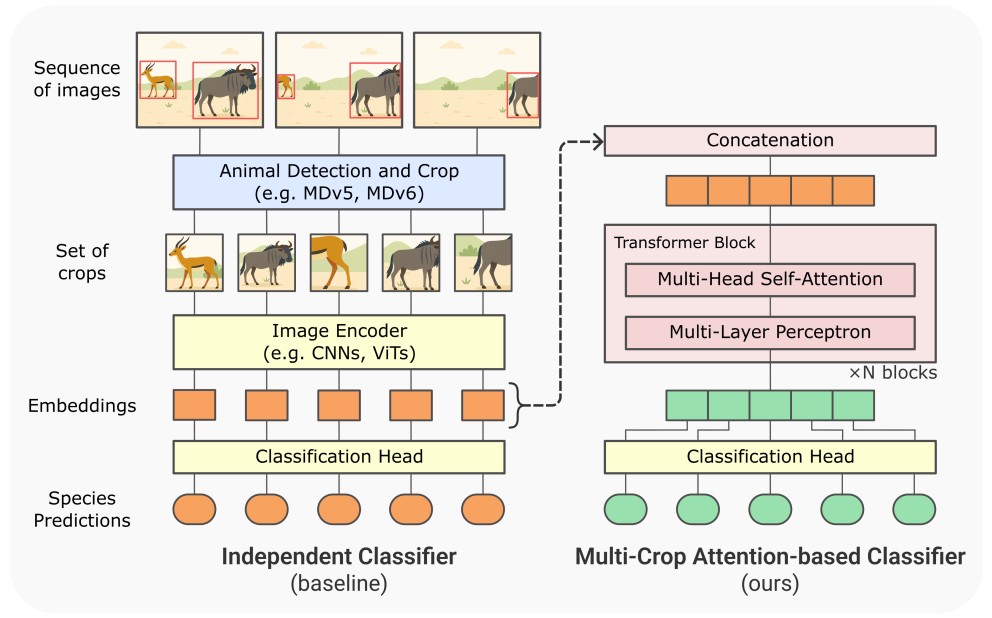

- Paying attention to other animal detections improves camera trap classification models (github.com/gdussert/MCA_Classifier)

- A semi-automatic workflow to process camera trap images in R (github.com/hannaboe/camera_trap_workflow)

- Bounding Box Editor and Exporter (BBoxEE) for the Animal Detection Network (github.com/persts/BBoxEE)

- Camelot (gitlab.com/camelot-project/camelot)

- CameraTrapDetectoR (github.com/TabakM/CameraTrapDetectoR)

- DeepFaune software (plmlab.math.cnrs.fr/deepfaune/software)

- Wildlife Age-Sex Classification (github.com/slds-lmu/wildlife-age-sex)

- Wildlife Detection API (Flask app to serve MD) (github.com/cloudpresser/wildlifeApp)

- Image Level Label to Bounding Box Pipeline (github.com/persts/IL2BB)

- Trapper species classification (gitlab.com/oscf/trapper-species-classifier)

- Gimenez et al 2021 (integrating DL results into ecological statistics) (github.com/oliviergimenez/computo-deeplearning-occupany-lynx)

- Johanns et al 2022 (distance estimation and tracking) (github.com/PJ-cs/DistanceEstimationTracking)

- Pantazis et al 2021 (self-supervised learning) (github.com/omipan/camera_traps_self_supervised)

- Mbaza (species classification and Shiny app) (github.com/Appsilon/mbaza)

- Hilton et al 2022 (analyzing tortoise video) (github.com/hiltonml/camera_trap_tools)

- Haucke et al 2022 (depth from stereo) (github.com/timmh/socrates)

- Cacophony Project: species classification in thermal images (github.com/TheCacophonyProject/classifier-pipeline)

- Oregon Critters (species classification) (github.com/appelc/oregon_critters)

- Mbaza AI (desktop app for CT data management) (github.com/Appsilon/mbaza)

- BatNet (classification of bats in CT images) (github.com/GabiK-bat/BatNet)

- BoquilaHUB (client-side tool for running models) (github.com/boquila/boquilahub)

- MegaDetector (finds animals/people/vehicles in camera trap images) (github.com/agentmorris/MegaDetector)

- TNC Animl platform (github.com/tnc-ca-geo/animl-frontend)

- AddaxAI (formerly EcoAssist) (GUI for running models) (github.com/PetervanLunteren/AddaxAI)

- Zamba (github.com/drivendataorg/zamba)

- TrapTagger (github.com/WildEyeConservation/TrapTagger)

- Goanna detector (detector for several Australian species, esp goannas) (github.com/agentmorris/unsw-goannas)

- Tegu detector (detector for several species in Florida, esp tegus) (github.com/agentmorris/usgs-tegus)

- ecoSecrets (github.com/naturalsolutions/ecoSecrets)

- tapis-project camera traps (edge device tools for camera traps) (github.com/tapis-project/camera-traps)

- Smart camera traps (see Velasco-Montero 2024 below) (github.com/DVM000/smart_camera_trap_research)

- European mammal/bird recognition (see Schneider 2024 below) (github.com/umr-ds/Mammal-Bird-Camera-Trap-Recognition)

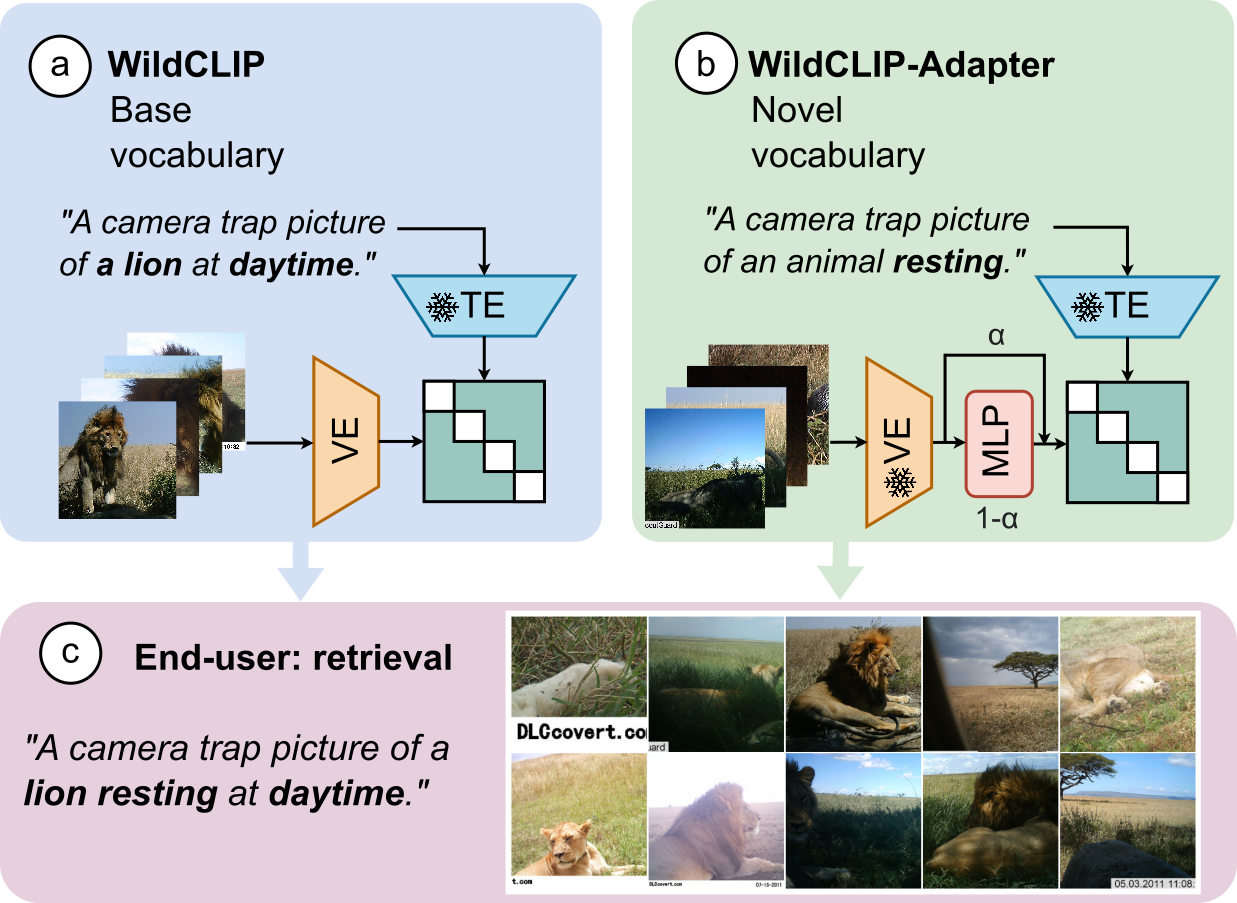

- WildCLIP (VLMs for camera trap analysis) (github.com/amathislab/wildclip)

- TeraiNet (species classifier for Nepal) (github.com/alexvmt/TeraiNet)

- SpeciesNet-Rust (Rust port of SpeciesNet) (github.com/zubalis/speciesnet-rust)

- Alita (species classifier for NZ wildlife) (github.com/Wologman/Alita)

- Irvine Ranch Conservancy Animal Detection (classifier for California wildlife) (github.com/ttgminh/Irvine-Ranch-Conservancy-Animal-Detect)

- DeepForestVision (classifier for African tropical forests) (github.com/MNHN-OFVI/DeepForestVision)

- DeepMeerkat (tools for video-based ecological surveys) (github.com/bw4sz/DeepMeerkat)

- SpeciesNet (global species classifier for ~2k species) (github.com/google/cameratrapai)

- Mega-Efficient Wildlife Classifier (MEWC) (tools for training classifiers on MD crops) (github.com/zaandahl/mewc)

- MegaDetectorLite (ONNX/TensorRT conversions for MD) (github.com/timmh/MegaDetectorLite)

- MegaDetector-FastAPI (MD serving via FastAPI/Streamlit) (github.com/abhayolo/megadetector-fastapi)

- MegaDetector UI (tools for server-side invocation of MegaDetector) (github.com/NINAnor/megadetector_ui)

- MegaDetector Container (Docker image for running MD) (github.com/bencevans/megadetector-contained)

- MegaDetector V5 - ONNX (tools for exporting MDv5 to ONNX) (github.com/parlaynu/megadetector-v5-onnx)

- CamTrapML (Python library for camera trap ML) (github.com/bencevans/camtrapml)

- WildCo-Faceblur (MD-based human blurring tool for camera traps) (github.com/WildCoLab/WildCo_Face_Blur)

- CamTrap Detector (MDv5 GUI) (github.com/bencevans/camtrap-detector)

- SDZG Animl (package for running MD and other models via R) (github.com/conservationtechlab/animl)

- SpSeg (WII Species Segregator) (github.com/bhlab/SpSeg)

- Wildlife ML (detector/classifier training with active learning) (github.com/slds-lmu/wildlife-ml)

- BayDetect (GUI and automation pipeline for running MD) (github.com/enguy-hub/BayDetect)

- Automated Camera Trapping Identification and Organization Network (ACTION) (github.com/humphrem/action)

- TigerVid (animal frame/clip extraction from videos) (github.com/sheneman/tigervid)

- Trapper AI (AI backend for the TRAPPER platform) (gitlab.com/trapper-project/trapper-ai)

- video-processor (MD workflow for security camera footage) (github.com/evz/video-processor)

- Declas (client-side tool for running MD and classifiers) (github.com/stangandaho/declas)

- AI for Wildlife Monitoring (real-time alerts using 4G camera traps) (github.com/ratsakatika/camera-traps)

- Identifying snow depth in camera trap images (github.com/catherine-m-breen/snowpoles)

- CATALOG: A Camera Trap Language-guided Contrastive Learning Model (github.com/Julian075/CATALOG)

- WildSight AI (autonomous pan/tilt camera that tracks wildlife) (github.com/s59mz/wild-sight-ai)

Last updated < 2021

- Autofocus species classifier (github.com/uptake/autofocus)

- Torch Traps (student project on training/running classifiers on LILA data) (github.com/winzurk/torchtraps/)

- Deep Learning for Nilgai Management (Kutugata et al, 2021) (github.com/mkutu/Nilgai)

- Deep Learning for Camera Trap Images (Norouzzadeh 2018) (github.com/Evolving-AI-Lab/deep_learning_for_camera_trap_images)

- DrivenData classification competition winners (github.com/drivendataorg/hakuna-madata/)

- WildAnimalDetection (Jasper Ridge Biological Preserve) (github.com/qiantianpei/WildAnimalDetection)

- Willi et al species classification (github.com/marco-willi/camera-trap-classifier)

- MLWIC: Machine Learning for Wildlife Image Classification in R (github.com/mikeyEcology/MLWIC)

- MLWIC2: Machine Learning for Wildlife Image Classification (github.com/mikeyEcology/MLWIC2)

- Wildlife detection and classification with MD and RetinaNet (github.com/oliviergimenez/DLcamtrap)

Publicly-available ML models for camera traps

This section lists ML models one can download and run locally on camera trap data (or use in cloud-based systems). This section does not include models that exist in online platforms but can’t be downloaded locally. Almost everything in this section will also appear in either the systems or repos section on this page.

My hope is that this section can grow into a more structured database of models with sample code… if you want to help with that, email me.

I am making a very loose effort to include last-updated dates for each of these. Those dates are meant to very loosely capture repo activity, so that if you go looking for a classifier for ecosystem X, you can start with more active sources. But I’m not digging that deep; if someone trained a classifier in 2016 that is totally obsolete, but they corrected a bunch of typos in their repo in 2023, they will successfully trick my algorithm for determining the last-updated date.

When possible, the first link for each line item should get you pretty close to the model weights.

Last updated ≥ 2025

- Addax Data Science Central Indian Wildlife (fine-tuned SpeciesNet for 40 categories from Central India) (2026)

- Addax Data Science Northern Territory Vertebrates (fine-tuned SpeciesNet for 140 categories from NT, Australia) (2026)

- TropiCam-AI (ConvNeXt in TF, for 84 neotropical arboreal mammal and bird taxa) (code) (2026)

- TrapTracker UK Mammals (YOLOv26x detector, 31 categories of UK wildlife (mostly mammals, some birds)) (2026)

- TrapTracker Sub-Saharan Africa Mammals (YOLOv26x detector, 35 categories of African wildlife (mostly mammals, some birds)) (2026)

- Australian Wildlife Conservancy Classifier (EfficientNetV2S trained in timm for 135 categories of Australian wildlife) (2026)

- Addax Data Science Hawaii (fine-tuned SpeciesNet for 13 taxa in Hawaii) (2026)

- Addax Data Science Southwest Borderlands USA (fine-tuned SpeciesNet for 68 species in the Southwest US and the US/Mexico border region) (2026)

- Addax Data Science New Zealand Invasives (YOLOv8-cls model for 17 New Zealand taxa) (2026)

- Addax Data Science Victoria (fine-tuned SpeciesNet for 212 categories in Victoria, Australia) (2026)

- AHDriFT-ID (fine-tuned SpeciesNet for 46 categories in downward-facing small animal cameras in Ohio) (2026)

- WildObs Wet Tropics (fine-tuned SpeciesNet for 121 Australian taxa) (2026)

- WildObs National (fine-tuned SpeciesNet (according to this notebook) for 46 Australian taxa) (2026)

- TeraiNet (EfficientNetV2M trained on MD crops for 10 classes relevant to the Terai region of Nepal) (code) (2025)

- U Tasmania model for Tasmanian vertebrates (EfficientNetV2S trained on MD crops for 96 classes) (2025)

- DeepFaune (custom detector and classifier for European wildlife, both in PyTorch) (code) (also deployed via the DeepFaune client) (2025)

- DeepFaune-New-England (species classifier for New England wildlife, fine-tuned from the DeepFaune model, runs on crops) (2025)

- SpeciesNet (global species classifier for ~2k categories) (2025)

- MegaDetector (v5a and v5b, both YOLOv5, human/animal/vehicle) (2022, metadata update in 2025)

- MegaDetectorLite (ONNX and TensorRT exports of MegaDetector v5) (2022)

- Animl’s TF export of MDv5b (2022)

- Addax Data Science Sub-Saharan Drylands Classifier (EfficientNet-V2M trained on 2.8M MD crops from LILA images, covering 328 categories) (2025)

- Addax Data Science Japan Gifu (ResNet-50 trained on 13 taxa from Kuraiyama Experimental Forest in Japan)

- Weka Research New Zealand Alita (77 New Zealand taxa) (source) (report)

- Camera Trap Vehicle Classifier (classifies MegaDetector vehicle crops into car/bike/motorbike/quad) (2025)

- Camera Trap Horse Classifier (classifies crops that SpeciesNet says are horses into packhorse/saddlehorse/free-ranging horse) (2025)

- DeepForestVision (classifier for African tropical forests) (2025)

Last updated 2024

- The SDZG Animl package includes several models, all trained in TF, all run on MD crops:

  - Southwest US v3 (26 classes, including human and empty) (2024)

  - Southwest US extended v3 (33 classes, including human and empty) (2023)

  - Kenyan savanna v3 (60 classes, including human and empty) (2024)

  - Peruvian Amazon v1 (43 classes, including empty) (2024) (on Hugging Face)

  - Peruvian Andes v1 (53 classes, including human and empty) (2024) (on Hugging Face)

- Hex-Data/Panthera AI model for Kyrgyzstan (EfficientNetV2L trained on MD crops for 11 class-/family-level categories) (2024)

- TRAPPER AI model for 18 European mammals (YOLOv8-m detector) (2024)

- Addax Data Science New Zealand Classifier (YOLOv8 classifier trained on MD crops for 17 New Zealand mammal/bird classes) (documentation) (2024)

- Addax Data Science Iran Classifier (YOLOv8 classifier trained on MD crops for 14 Iranian mammal/bird classes) (2024)

- OSU small mammal classifier for Ohio (for AHDriFT cameras) (TF, whole-image classifier(s) at the class and species levels) (code) (2024)

Last updated 2023

- Addax Data Science Namib Desert Classifier (YOLOv8 classifier trained on MD crops for 30 African mammal/bird classes) (documentation) (2023)

- Marburg Camera Traps (EfficientNetV2 and ConvNeXt classifiers in TF2 for European mammals and birds) (code) (paper) (2023)

- MegaClassifier (EfficientNet, PyTorch, runs on crops, several hundred output classes but really only ever used for a small set of classes in North America) (2023)

- MegaClassifier’s close cousin, the “IDFG classifier” (EfficientNet, PyTorch, runs on crops, ~10 categories relevant to Idaho) (2023)

- Rewilding Europe YOLOv8 (detector trained from YOLOv8m on 30 European species) (requires login, but is otherwise publicly accessible) (2023)

- Mbaza AI (primarily intended for use in the Mbaza AI desktop client, but model weights are available as part of the release: gabon.onnx, ol_pejeta.onnx, and serengeti.onnx) (code) (all three models are whole-image classifiers AFAIK) (2023)

- Goanna detector (available as a YOLOv5x6 detector (trained from MDv5a) and a YOLOv8x detector, five Australian classes) (dingo, fox, goanna, possum, quoll) (2023)

- Tegu detector (YOLOv5x6 detector for tegus and a few other species in Florida, trained from MDv5a) (2023)

- AI4GAmazonRainforest (PyTorch ResNet-50, runs on MD crops, 34 Amazon species (class info) + human + unknown) (code) (2023)

- AI4GOpossum (PyTorch ResNet-50, runs on MD crops, binary opossum classifier) (code) (2023)

Last updated 2022

- Small mammal classifier for Norway (TF, whole-image classifier, 8 classes including “empty”) (code) (2022)

- SpSeg models (TF classifier(s) that run on MD crops, 36 Indian species) (code) (requires filling out a form, but access to model weights is granted automatically) (2022)

Last updated earlier than 2022

- MLWIC2 (TF, whole-image classifiers for (a) blank/non-blank, (b) 58 North American species) (code) (2020)

- Willi et al African Classifier (TF1, whole-image classifier) (2019)

- MLWIC (TF, whole-image classifier for North American species) (code) (2019)

- Norouzzadeh et al. Serengeti Classifier (TF1, whole-image classifiers for blank/non-blank, species, and counting) (2018)
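Many of the classifiers above run on MD crops rather than whole images. As a minimal sketch, assuming the normalized `[x_min, y_min, width, height]` box convention used in MegaDetector output, converting a detection into the pixel box you would hand to a cropping function (e.g. Pillow's `Image.crop`, which takes a `(left, upper, right, lower)` tuple) looks like this. The function name and the padding option are hypothetical; individual models differ in whether they expect padded, squared, or resized crops, so check each model's documentation.

```python
from typing import Sequence, Tuple


def md_bbox_to_pixels(bbox: Sequence[float], img_width: int, img_height: int,
                      pad_fraction: float = 0.0) -> Tuple[int, int, int, int]:
    """Convert a normalized box [x_min, y_min, width, height] into integer
    pixel coordinates (left, top, right, bottom).

    pad_fraction (hypothetical option) expands the box by that fraction of its
    width/height on each side; the result is clamped to the image bounds.
    """
    x_min, y_min, w, h = bbox
    pad_x, pad_y = w * pad_fraction, h * pad_fraction
    left = max(0.0, x_min - pad_x) * img_width
    top = max(0.0, y_min - pad_y) * img_height
    right = min(1.0, x_min + w + pad_x) * img_width
    bottom = min(1.0, y_min + h + pad_y) * img_height
    return round(left), round(top), round(right), round(bottom)


# A box covering the middle of an 800x600 image
print(md_bbox_to_pixels([0.25, 0.5, 0.5, 0.25], 800, 600))  # (200, 300, 600, 450)
# A box running off the bottom-right corner gets clamped
print(md_bbox_to_pixels([0.9, 0.9, 0.2, 0.2], 100, 100))    # (90, 90, 100, 100)
```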

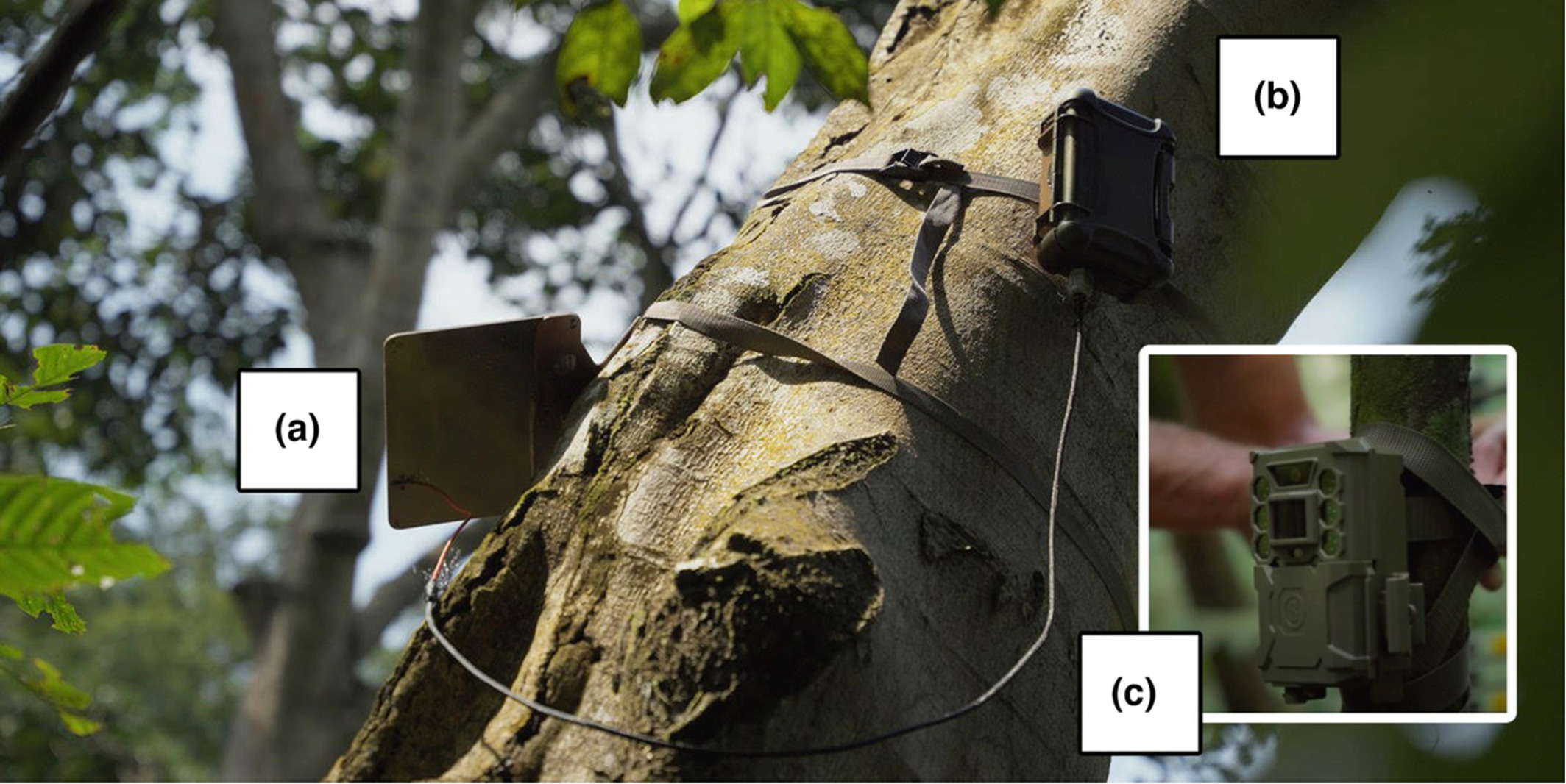

Smart camera traps

Smart camera traps I’m pretty sure have active deployments

The Sentinel (Conservation X Labs)

Module that attaches to existing camera traps to provide connectivity and AI.

Not related to this other smart camera trap also called “Sentinel”.

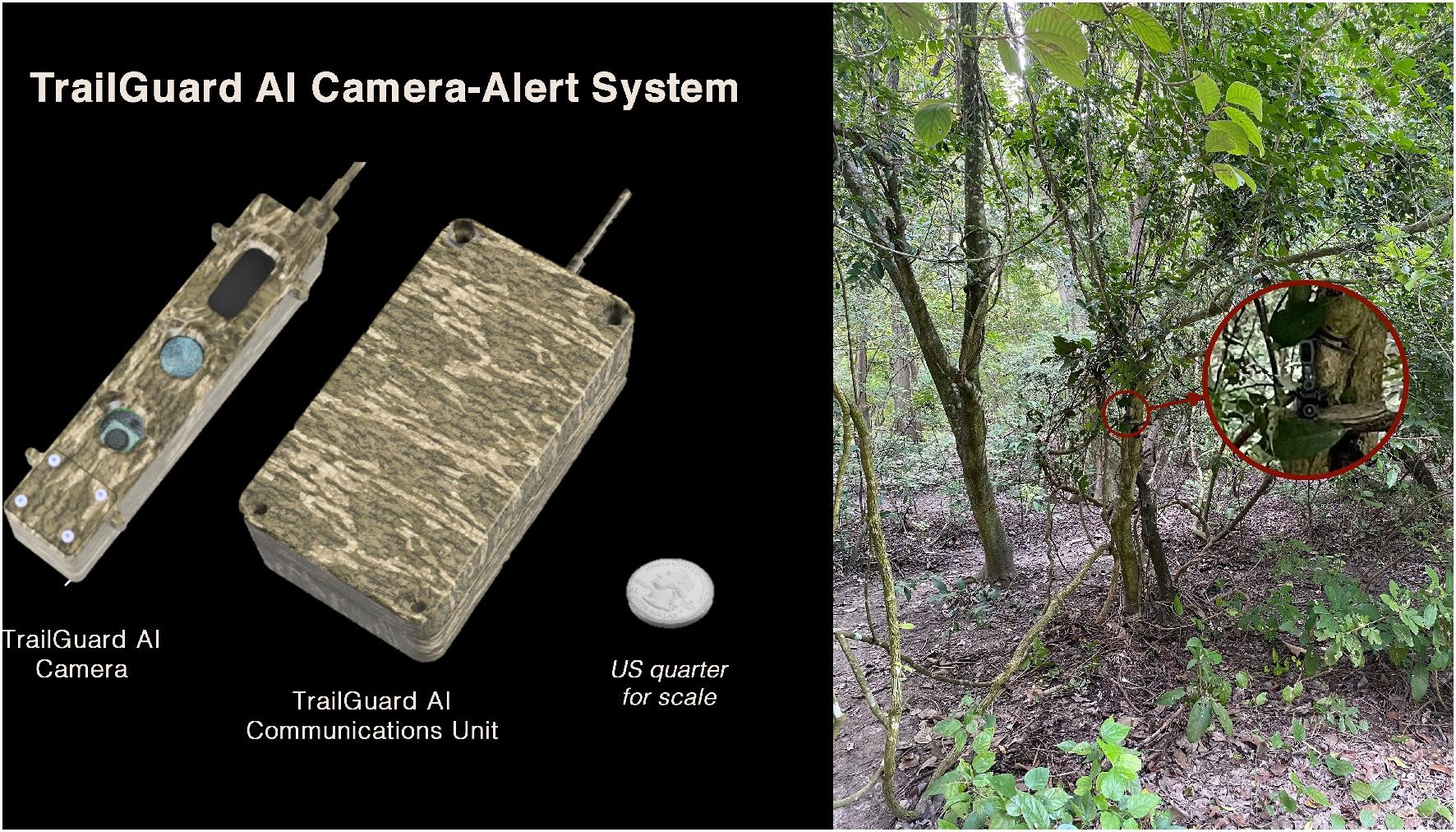

TrailGuard AI

AI-enabled camera developed for anti-poaching and human-wildlife conflict (HWC) applications.

Thylation Felixer

AI-enabled camera trap for automated invasive predator control in Australia.

Flox Intelligence

AI-enabled camera trap with acoustic deterrent, used to deter wildlife from transportation corridors or human-wildlife conflict scenarios. (Story about using Flox to deter wildlife from train tracks in Sweden.)

Instant Detect

Connected camera that runs a human/animal detector on device, then transmits images via LoRa to a base station, which transmits images via Iridium.

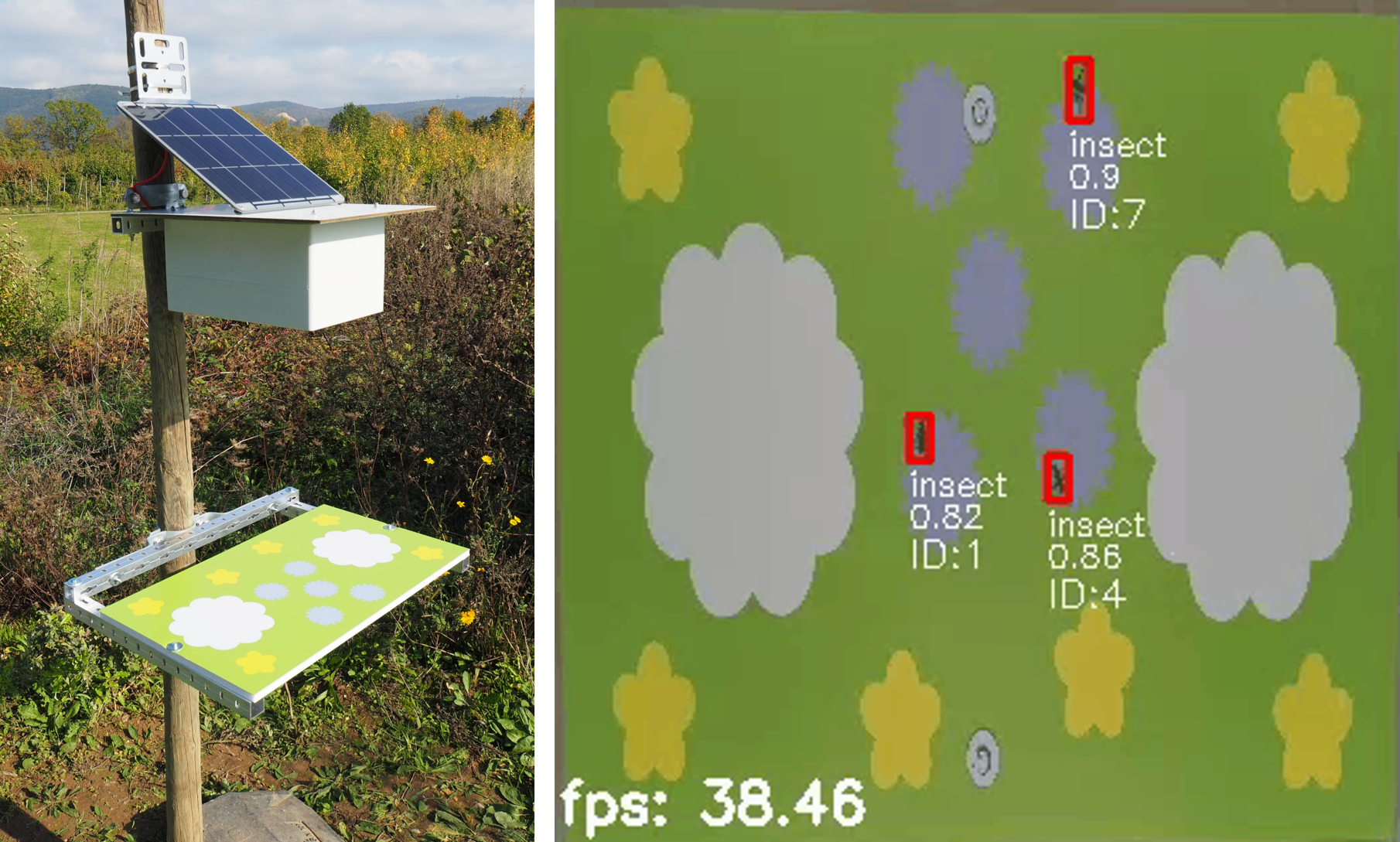

Insect Detect

AI-enabled, RPi-based camera trap for insect monitoring.

Smart camera traps that might not be available…

…either because they are no longer being produced, or because they were still conceptual prototypes as of the last time I updated this page. Stuff gets moved into this category if I can’t find anything online about it that’s more recent than ~2 years old; if I moved your thing and I shouldn’t have, email me.

University of Idaho RCDS camera (no fancy name that I’m aware of)

AI-enabled camera with detection and classification capabilities.

RobotEye Kapan

AI-enabled camera trap designed for anti-poaching applications.

Behold

AI-enabled camera trap designed for backyard wildlife monitoring. Still conceptual as of 2026.01, so still hard to say what this will be, or whether it will get made.

PoacherCam

Web page says: “Adapted from Panthera’s previous camera traps, the PoacherCam has a groundbreaking new feature: its motion-triggered detection system can now instantly distinguish between people and animals—the world’s first camera to do so.” This was from a 2015 blog post, unclear what the status is.

Manual labeling tools people use for camera traps

I’ve broken this category out into “tools that look like they’re being actively developed” and “tools that are less active”. This assessment is based on visiting links and searching the Web; if I’ve incorrectly put something in the latter category, please let me know!

Not repeating items that were already included in the “AI-enabled” list above. E.g., lots of people use Timelapse without AI, but I’m not including it in both lists.

Review papers about labeling tools

Sneaking these in before I get to the list of actual labeling tools. These aren’t necessarily just about labeling tools, but if they’re in this section, they at least contain a good review of labeling tools.

- Wearn, O., & Glover-Kapfer, P. (2017). Camera-trapping for Conservation: a Guide to Best-practices. WWF-UK: Woking, UK. (pdf)

- Young, S., Rode-Margono, J., & Amin, R. (2018). Software to facilitate and streamline camera trap data management: a review. Ecology and Evolution, 8(19), 9947-9957.

Tools that appear to be online or were last updated >= 2021

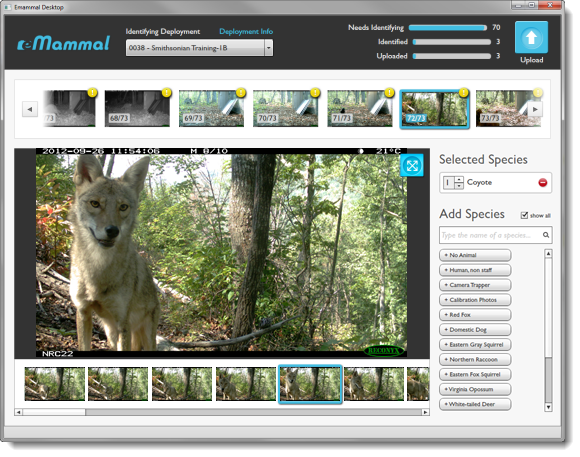

eMammal (Smithsonian)

Software package and Smithsonian-hosted storage. All labeling happens through their tool prior to upload. Data stored by Smithsonian, owned by individual data set owners, and released to collaborators upon request.

I’ve worked with a lot of camera trap data, and I will say that because the tool enforces consistent metadata at the time of labeling, in terms of organization and matching images to labels, data coming through eMammal is an order of magnitude cleaner than anything I’ve worked with from any other source. eMammal metadata is provided in the Camera Trap Metadata Standard (XML variant).

Carnassial (Cascades Carnivore Project)

Offshoot of Timelapse2; both projects’ repository pages acknowledge the divergence and cite differing project requirements.

SPARC’d

Thick-client Java-based tool. Open-source.

Reconyx MapView

CameraSweet

Series of command-line tools for image organization and annotation used by the Small Wild Cat Conservation Foundation.

Zip Classifier

Thick-client Windows tool for tagging camera trap images.

Tools that appear to be less active

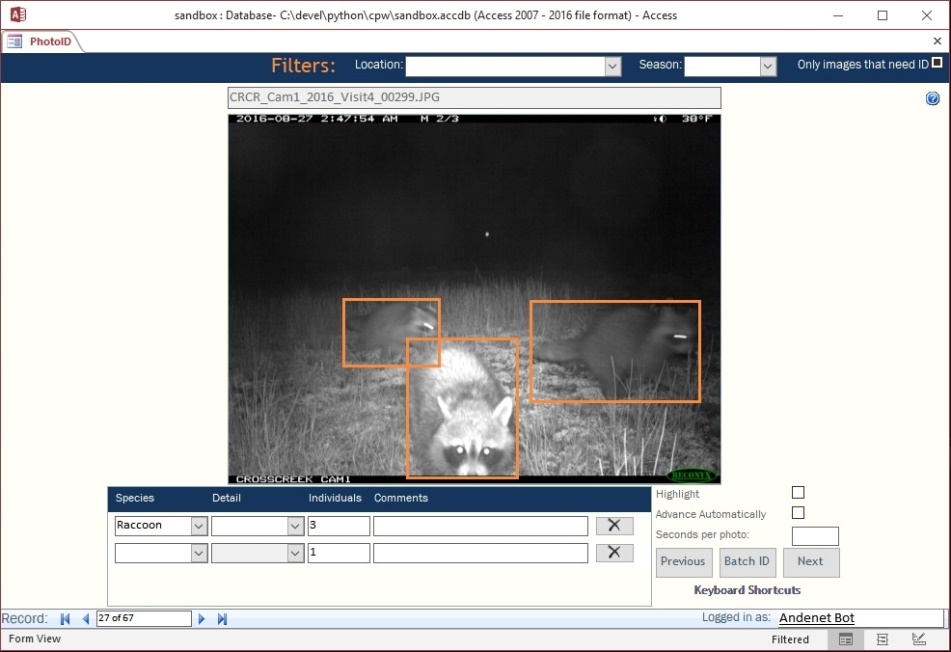

CPW Photo Warehouse

Thick-client (Windows) tool for image management, annotation, and spatial analysis.

https://cpw.state.co.us/learn/Pages/ResearchMammalsSoftware.aspx

Vixen

Open-source, multi-platform, thick-client (Python). As of 8/22, this appears to have been last updated in ~2019.

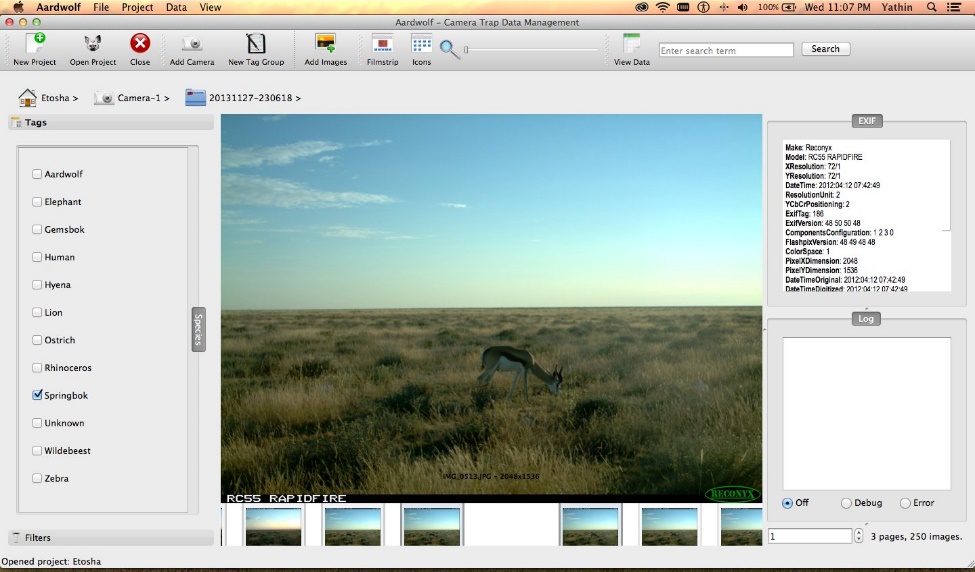

Aardwolf2

As of version 2, this is browser-based but runs locally (v1 was a thick-client app). Linux only.

As of 8/22, last update appears to be ~2017.

Camera Base

Thick-client tool for Windows. As of 8/22, it looks like the last update (at least to the user guide) was in 2015.

Camera Trap Manager

“.NET desktop application for managing pictures taken by automatic camera traps”

As of 8/22, the last update appears to have been ~2016.

Non-camera-trap-specific labeling tools that people use for camera trap data

Post-hoc analysis tools people use for camera trap data

Camera trap ML papers

Tags in this section

As promised above, although I don’t filter papers for this list based on whether they use stuff I’ve worked on, I do use this list as a way of tracking how those systems are being used. So you will see the following tags throughout this section:

The first tag (“Ecology Paper”) is used to indicate that this paper isn’t about camera trap AI, it just happens to use AI for camera traps. With any luck, a few years from now, this kind of paper will be 99% of this list! NB: there is a gray area around ecology methods papers that are clearly not about AI, but aren’t exactly ecology papers (e.g. papers comparing camera traps to other forms of observation); I’ve included those in this tag.

The “Individual ID” tag is new as of this writing; in fact, it’s possible that someone has already written a survey of papers that use AI-assisted individual ID for camera trap images. If that exists, email me. My goal is not to track the literature on individual ID, just to track papers that are pretty specific to camera traps, especially ecology papers that use AI-assisted individual ID in camera trap images.

If you have other tags you think I should be tracking here, email me.

Papers with summaries

Papers from 2026

Parsons MA, Dellinger JA, Vercauteren KC, Lombardi JV, Young JK. Introduced wild pigs affect the foraging ecology of a native predator as both prey and scavenger. Wildlife Biology. 2026 Jan;2026(1):e01581.

“We monitored cougars in an area with wild pigs to evaluate impacts on cougar habitat selection, diet, and feeding behavior, [and] to understand if scavenging by pigs affects cougar feeding behavior or kill rates. … cougars selected for areas based on both landscape factors and prey density, but selection was stronger for native black-tailed deer … than for pigs. We also documented that wild pigs, especially juveniles, were an important secondary prey item for cougars. Finally, we observed that wild pigs scavenged large prey items killed by cougars, which led to reduced feeding time by cougars at individual kills. However, wild pigs did not scavenge frequently enough to affect cougar kill rates.”

Deployed cameras at cougar feeding sites in California, and also deployed cameras at 59 locations in the same area on a 3km grid to estimate background density. Cameras were deployed from May 2021 to April 2023. Used MD with a 70% confidence threshold to eliminate blanks (version unspecified, but at a 70% confidence threshold, I hope it was MDv4!), reviewed images in Timelapse.
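For anyone new to this step, "using MD to eliminate blanks at a threshold" usually amounts to something like the sketch below. It assumes MegaDetector's batch-output JSON layout (an `images` list whose entries have `file` and `detections`, each detection carrying `category`, `conf`, and `bbox`); the function name and the toy data are illustrative, not what this or any other paper actually ran.

```python
def split_blanks(md_results, threshold):
    """Split image files into (non_blank, blank) lists.

    md_results: dict shaped like MegaDetector batch output, i.e.
    {"images": [{"file": ..., "detections": [{"category": ..., "conf": ...}]}]}
    An image counts as non-blank if any detection has conf >= threshold.
    """
    non_blank, blank = [], []
    for im in md_results["images"]:
        # Images that failed processing may have a null or missing detections list
        detections = im.get("detections") or []
        if any(d["conf"] >= threshold for d in detections):
            non_blank.append(im["file"])
        else:
            blank.append(im["file"])
    return non_blank, blank


results = {
    "detection_categories": {"1": "animal", "2": "person", "3": "vehicle"},
    "images": [
        {"file": "a.jpg", "detections": [{"category": "1", "conf": 0.92, "bbox": [0.1, 0.2, 0.3, 0.4]}]},
        {"file": "b.jpg", "detections": [{"category": "1", "conf": 0.35, "bbox": [0.5, 0.5, 0.1, 0.1]}]},
        {"file": "c.jpg", "detections": []},
    ],
}

# At 0.7 only a.jpg survives; at 0.2, b.jpg survives too
print(split_blanks(results, 0.7))  # (['a.jpg'], ['b.jpg', 'c.jpg'])
print(split_blanks(results, 0.2))  # (['a.jpg', 'b.jpg'], ['c.jpg'])
```

The same detections give different blank sets at different thresholds, which is why the threshold needs to match the model version: MDv5 confidence values run lower than MDv4's, so a threshold that's reasonable for MDv4 will throw away real animals with MDv5.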

Adams TM. Coexisting with Change: Wildlife Responses to Invaded Forest Communities in Southeastern Michigan, Doctoral Dissertation, University of Michigan, 2026.

“I aimed to clarify how invasive plants influence habitat use and behavior of … white-tailed deer and eastern coyote.” “Deer site use was positively associated with buckthorn cover and was greatest during the fall, possibly due to late season cover provided by buckthorn. Plant diversity was not correlated with deer site use. Deer browse was positively associated with honeysuckle, but not other invasive plants. Coyote site use was greatest in areas with buckthorn.” “My findings suggest that deer and coyotes both appear to utilize invasive resources and indicate wildlife behavior within invaded habitat may be highly specific to the invasive plant species.”

One camera was placed at each of 16 sites in Michigan. Used MD to eliminate blanks. MD version is unclear; the text says “v1.2.0”, which is not an actual MD version number, and they use a 70% confidence threshold with the intention of being more conservative than prior work that uses a 90% confidence threshold with MDv4. I’m somewhat confident that this work actually used MDv5, in which case a 70% threshold is very very very high. Reviewed images in DigiKam after removing blanks with MD.

Cardona LM, Brook BW, Buettel JC. Fear on the Landscape: How human activity shapes wildlife habitat use in protected areas in Tasmania. EcoEvoRxiv, 2026.

“Here, we used time-to-event camera trap data from a large-scale survey in Tasmanian protected areas to investigate the influence of motorised (vehicles) and non-motorised (hikers, joggers, and cyclists) recreation on wildlife return times - the time between consecutive detections of the same species – during the warm and cold season.” “We found that more motorised and non-motorised events delayed Tasmanian devil return times in both seasons. For herbivores, all species showed longer return times under motorised activity irrespective of season, whereas non-motorised activity had no significant effect on wallabies during the cold season.”

119 cameras in Tasmania, along gravel/dirt roads. Removed blanks and separated animals/vehicles with MDv5 at a confidence threshold of 50%.

Dimitriou A, Benson-Amram S, Gaynor K, Burton C. The effect of outdoor recreation on mammal habitat use and diversity revealed by COVID-19 closures. bioRxiv. 2026:2026-04.

“We leveraged a natural experiment, in which two adjacent PAs were closed to the public for different durations during the COVID-19 pandemic. Using detections from 39 camera traps in Joffre Lakes and Garibaldi Parks, Canada, from 2020-2022, we examined how recreation influenced mammal habitat use and diversity. Bayesian regression showed weak evidence that, when recreation was higher, detections declined for black bear, mule deer, and marten, while detections of bobcat and hoary marmot shifted closer to trails. Accumulation curves revealed that species richness and diversity were higher in the closed vs. open PA in 2020. … However, diversity did not decline consistently despite increases in recreation in 2021 and 2022. Notably, several rare species were only detected in the lower-recreation PA, suggesting they may be filtered out of the higher-recreation PA.”

Used MD to separate humans/vehicles from blanks.

Larson CL, Bloom TD, Egan A, Farris T, Gersh K, Merigliano L, Owen C, Seidler R, Turner H. Neighbors to nature: A case study of recreation-wildlife co-existence in the Greater Yellowstone Ecosystem. Conservation Science and Practice. 2026:e70263.

“We monitored medium to large mammal and human activity to assess impacts of recreation and inform management, deploying 27 trail cameras along multi-use non-motorized recreational trails for 2.5 years in a heavily used area within the Greater Yellowstone Ecosystem, USA. A diverse mammal community coexists alongside intense recreational use, and while many species do not appear to respond negatively to current levels of recreation, moose showed temporal avoidance and elk showed both temporal and spatial avoidance of recreation. Foot traffic—including hiking, skiing, and snowshoeing—had a stronger negative effect on wildlife than other activities, while some species overlapped with high levels of cyclist and dog activity.”

Used MD (version unspecified) to separate humans/blanks/vehicles/animals, with a 75% threshold (which is very high for MDv5, so I’m hoping that was MDv4). They found that almost all images with a human and a vehicle were cyclists, so they treated human+vehicle classifications as cyclists, and human-only classifications as hikers. Species classification was done on Zooniverse, with expert review in cases where there was not a clear Zooniverse consensus or where the Zooniverse consensus was “unknown”.
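The cyclist/hiker rule above is easy to express per image. This is a minimal sketch, assuming MegaDetector's default category IDs ("2" person, "3" vehicle) and the 75% threshold from the paper; the function name is hypothetical, and labeling vehicle-only images as "vehicle" is my assumption, since the summary doesn't say what they did with those.

```python
# MegaDetector's default category IDs: "1" animal, "2" person, "3" vehicle
PERSON, VEHICLE = "2", "3"


def recreation_label(detections, threshold=0.75):
    """Label one image using the human+vehicle -> cyclist rule (a sketch, not the authors' code)."""
    # Categories that have at least one detection above threshold
    cats = {d["category"] for d in detections if d["conf"] >= threshold}
    if PERSON in cats and VEHICLE in cats:
        return "cyclist"
    if PERSON in cats:
        return "hiker"  # foot traffic in general (hiking, skiing, snowshoeing)
    if VEHICLE in cats:
        return "vehicle"  # assumption: vehicle-only handling isn't described
    return None  # no human activity above threshold


print(recreation_label([{"category": "2", "conf": 0.9}, {"category": "3", "conf": 0.8}]))  # cyclist
```

In practice this would probably be applied per sequence rather than per image, so that a bike that's only visible in one frame still turns the whole event into a cyclist.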

Trepel J, Atkinson J, Le Roux E, Abraham AJ, Aucamp M, Greve M, Greyling M, Kalwij JM, Khosa S, Lindenthal L, Makofane C. Large herbivores are linked to higher herbaceous plant diversity and functional redundancy across spatial scales. Journal of Animal Ecology. 2026 Jan;95(1):230-42.

“…we investigated the effects of large herbivores along a gradient of trophic complexity … and herbivory intensity … in 10 reserves in the savanna biome in South Africa. We found higher total plant species richness, driven by higher herbaceous (but not woody) plant species richness, in areas with higher herbivory intensity across multiple scales. While herbivores had no significant relationship with plant functional richness, we observed higher functional redundancy at all scales in areas more frequently visited by herbivores.” … “Our results suggest that restoring large herbivore populations can be expected to promote herbaceous plant diversity and ecosystem resilience.”

They surveyed five sites in each of 10 reserves (50 sites total), with a camera at each site for >1 year. Used MDv5 via EcoAssist with a threshold of 0.2 (huzzah!), then reviewed the remaining images in DigiKam.

Holmes HA, Specht HM, Bate LJ, Smucker K, McCormack C, Staab C, Franklin TW, Bachen D, Maxell B, Begley AJ, Millspaugh JJ. Combining non-invasive survey methods increases cumulative detection probability for breeding harlequin ducks Histrionicus histrionicus. Wildlife Biology. 2026 Mar:e01610.

“Population monitoring of harlequin ducks on their breeding streams … has historically relied on ground-based footsurveys (GBS) … . We quantified the detection probability of GBS and compared it to … eDNA and camera traps for detecting breeding harlequin ducks. … Both eDNA and camera traps proved effective in detecting harlequin ducks, particularly when replicated across space or time. It is important to note that eDNA and camera traps only provide presence/absence data, while GBS provides relative abundance. Combining methods proved particularly effective; we estimated a cumulative detection probability > 0.95 for a single-day survey effort by collecting eDNA samples spread throughout a stream reach in tandem with a GBS.”
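A cumulative detection probability like the one quoted above is usually computed by treating replicates as independent. The chance of missing the species on every replicate is the product of the per-replicate miss probabilities, so p* = 1 − ∏(1 − p_i). The per-method probabilities below are made-up numbers, not estimates from this paper, and the independence assumption is mine.

```python
from math import prod


def cumulative_detection(probs):
    """Probability of at least one detection across independent replicates."""
    return 1 - prod(1 - p for p in probs)


# Hypothetical: one ground survey (p=0.6) plus three eDNA samples (p=0.5 each)
p_star = cumulative_detection([0.6, 0.5, 0.5, 0.5])
print(round(p_star, 3))  # 0.95
```

This is why replication across space or time helps so much: each additional replicate with even a modest detection probability multiplies the miss probability down.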

Study area was in Idaho/Montana, along 5km-8km stretches on 10 streams. Two cameras (one timelapse, one motion-triggered) were placed at each river mile, so somewhere around 50 total camera sites (100 total cameras). Used MDv5a with a confidence threshold of 0.5, reviewed images in Timelapse.

Breaking the fourth wall: I know anecdotally that this was a tough case that required augmentation and pretty extensive repeat detection elimination (RDE).

Ringwaldt EM, Buettel JC, Carver S, Brook BW. Epidemiological Dynamics of a Visually Apparent Disease: Camera Trapping and Machine-Learning Applied to Rumpwear in the Common Brushtail Possum. Integrative Zoology. 2026 Jan;21(1):116-28.

“We visually classified images of rumpwear clinical signs in 6908 individual possums collected from a 3-year camera trapping network. Our results revealed that: (1) adults were twice as likely to show signs of rumpwear compared to young possums; (2) rumpwear occurrence increased with the relative activity of possums at a site; and (3) prevalence of rumpwear was seasonal, being lowest in May and highest in December.” … “Additionally, a CNN was trained to identify rumpwear.”

For training and running their classifier, they used MD to generate crops, which they resampled to 384x384; they trained on ~8k total crops. They used EfficientNetV2 in Keras for the classifier.
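MegaDetector reports boxes as normalized [x_min, y_min, width, height] with the origin at the top-left corner, so cropping for a classifier starts with converting to pixel coordinates. The sketch below does that conversion and expands the box to a square around its center before the resize to 384x384. Squaring is my assumption (it avoids distorting the aspect ratio when resizing); the paper doesn't say how they handled it, and the function name is hypothetical.

```python
def md_box_to_square_pixels(bbox, img_w, img_h):
    """Convert a normalized MD box [x, y, w, h] to a square pixel crop box.

    Returns (left, top, right, bottom), the tuple order PIL's Image.crop expects.
    The box is clamped to the image bounds, so it may end up non-square
    when the animal is near an edge.
    """
    x, y, w, h = bbox
    # Box center and side length, in pixels
    cx = (x + w / 2) * img_w
    cy = (y + h / 2) * img_h
    side = max(w * img_w, h * img_h)
    left = max(0, round(cx - side / 2))
    top = max(0, round(cy - side / 2))
    right = min(img_w, round(cx + side / 2))
    bottom = min(img_h, round(cy + side / 2))
    return left, top, right, bottom


box = md_box_to_square_pixels([0.25, 0.5, 0.1, 0.2], 2048, 1536)
# With Pillow, the crop would then be: img.crop(box).resize((384, 384))
print(box)
```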

Paton AJ, Flett I, Pauza M, Brook BW, Buettel JC. Did curiosity kill the cat? The impacts of aerial baiting and Felixer deployment on feral cat populations on Three Hummock Island, Tasmania. Wildlife Research. 2026 Jan 27;53(2):WR25057.

“This study aimed to evaluate the effectiveness of lethal aerial baiting and Felixer grooming traps in reducing feral cat activity on Three Hummock Island, Tasmania, to support the establishment of a hooded plover stronghold.” “A network of 20–60 camera traps, operating over 37,175 trap days during 2019–2024, was used to monitor changes in feral cat activity and site usage.” “Felixers were effective in reducing feral cat activity with minimal intervention, whereas aerial baiting alone had limited impact.”

Used MD to remove blanks, MEWC (presumably the Tasmanian classifier, though this isn’t specified) to classify species.

The cats in their study area were too similar to identify individuals, but they manually reviewed images for evidence of a kinked tail as a marker of genetic diversity.

Study code is here.

Yamashita TJ, Tanner AM, Tanner EP, Scognamillo DG, Tewes ME, Young Jr JH, Lombardi JV. At the intersection of soundscapes and roads: Quantifying anthrophony’s influence on wildlife crossing structure use. Ecological Applications. 2026 Jan;36(1):e70192.

Examined the relationship between anthropogenic noise and wildlife crossing use by opossums at five crossings in Texas, using camera traps and acoustic recorders. Found that “opossums spent more time at WCSs when it was quieter on average and were more likely to successfully cross through a WCS when there was less vehicle noise.”

Used MD (presumably MDv5a) with thresholds of 55% for animal/person/vehicle and 60% for empty. I’m not sure exactly what that empty threshold refers to; I think it means that in practice they used a threshold of 40% for all categories, after a somewhat faster initial review with 55% thresholds. Used Timelapse for image review.

Notario Rincon D, Muñoz A, Barrientos R, Gaytán Á, Cano L, Quiles P, Sánchez L, Redondo A, Cisneros A, Bonal R. Disentangling Multitrophic Interactions: How Vegetation Cover, Wild Boar, Deer, and Predators Shape Rodents Activity and Acorn Dispersal. Integrative Zoology. 2026 Mar 26.

“We combined camera trapping and piecewise structural equation modeling to evaluate how vegetation structure, carnivores, deer, and wild boars influence rodent activity and their role as seed dispersers. Rodent activity increased with shrub density, as they find protection under vegetation cover. However, at a finer scale, rodent activity was unrelated to carnivore records. … While wild boars had a direct negative effect on rodent activity …, deer had no effect on rodents. Unlike wild boars, deer do not trample and root in the soil, destroying burrows and even predating rodents. Most acorns were dispersed under shrubs by rodents, and the effect of rodent activity on dispersal distances was entirely mediated by vegetation cover. By explicitly separating the effects of different large-sized mammals, our study identifies wild boars as the main disruptor of mice activity and rodent-mediated seed dispersal.”

Used MD for blank removal (version/threshold unspecified), reviewed images in XnView MP.

Boer-Cueva M, Bombieri G, Centomo E, Partel P, Dorigatti E, Ferraro E, Greco I, Rovero F, Salvatori M. Hunting and Outdoor Recreation Affect Large Herbivore Activity Patterns More Than Natural Predators in a Human-Dominated Landscape. Ecology and Evolution. 2026 Feb;16(2):e73033.

Study the impact of hunting and wolves on red deer space use in the Italian Alps. Find that “behavioural constraints imposed by humans on red deer, coupled with the cursorial predatory strategy of wolves, likely limit the possibility of wolf avoidance by red deer. In human-dominated European landscapes, human disturbance can therefore override natural predator–prey dynamics, reshaping behavioural landscapes and potentially increasing predator and prey spatiotemporal co-occurrence.”

Placed camera traps at 42 sites between 2020 and 2024 (6,883 camera-trap-days). Used Wild.AI, “hosted on a platform maintained by the University of Florence” to separate blank/human/animal/vehicle images, then classified species manually. I’m not technically sure that Wild.AI uses MegaDetector, but I’m comfortable using the “MegaDetector” tag here based on that description, since I’m like 99.98% sure.

Schille L, Poirier V, Raspail F, Chaumeil P, Bordenave P, Herrault PA, Paquette A. Urbanization drives dietary specialization in insectivorous bird communities: insights from a multi-prey cafeteria experiment monitored by innovative cameras. bioRxiv. 2026:2026-02.

Study the impact of urbanization on bird biodiversity at urban edges in Montreal, specifically aiming to assess the mechanisms driving the decline in avian biodiversity along the urbanization gradient. Used both natural environments and controlled study areas (“cafeterias”). Studied both avian and prey (insect/arthropod) biodiversity at 24 study plots. “We found a strong decline in avian biodiversity and in the availability of high-quality prey along the urbanization gradient, with a convergence toward generalist dietary traits. Yet, the avian biodiversity loss was buffered by canopy cover and tree diversity. Impervious surfaces, canopy cover, local vegetation cover, and lepidopteran abundance were key drivers of the composition of foraging communities observed at cafeterias.”

Studied vocalizing birds with AudioMoths and BirdNET.

In the cafeteria sites, they used custom cameras to monitor predation events. Cameras were time-triggered. They used MD through EcoAssist, with a confidence threshold of 2%, to remove empty images. Species identification was manual.

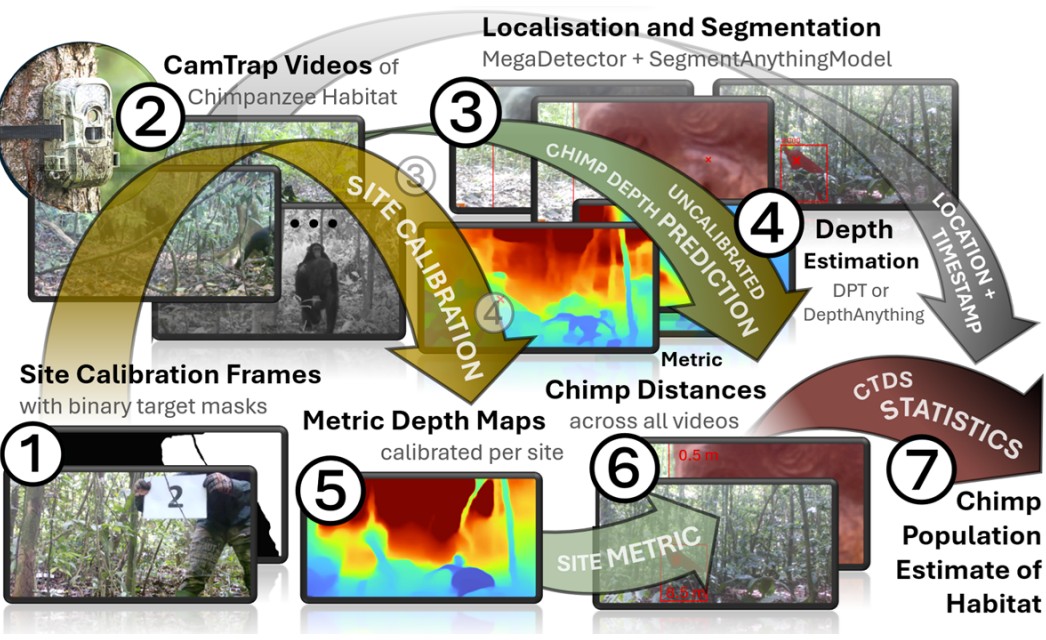

Raynes T, Brookes O, Haucke T, Bösch L, Crunchant AS, Kühl H, Beery S, Mirmehdi M, Burghardt T. Deep in the Jungle: Towards Automating Chimpanzee Population Estimation. arXiv preprint arXiv:2601.22917. 2026 Jan 30.

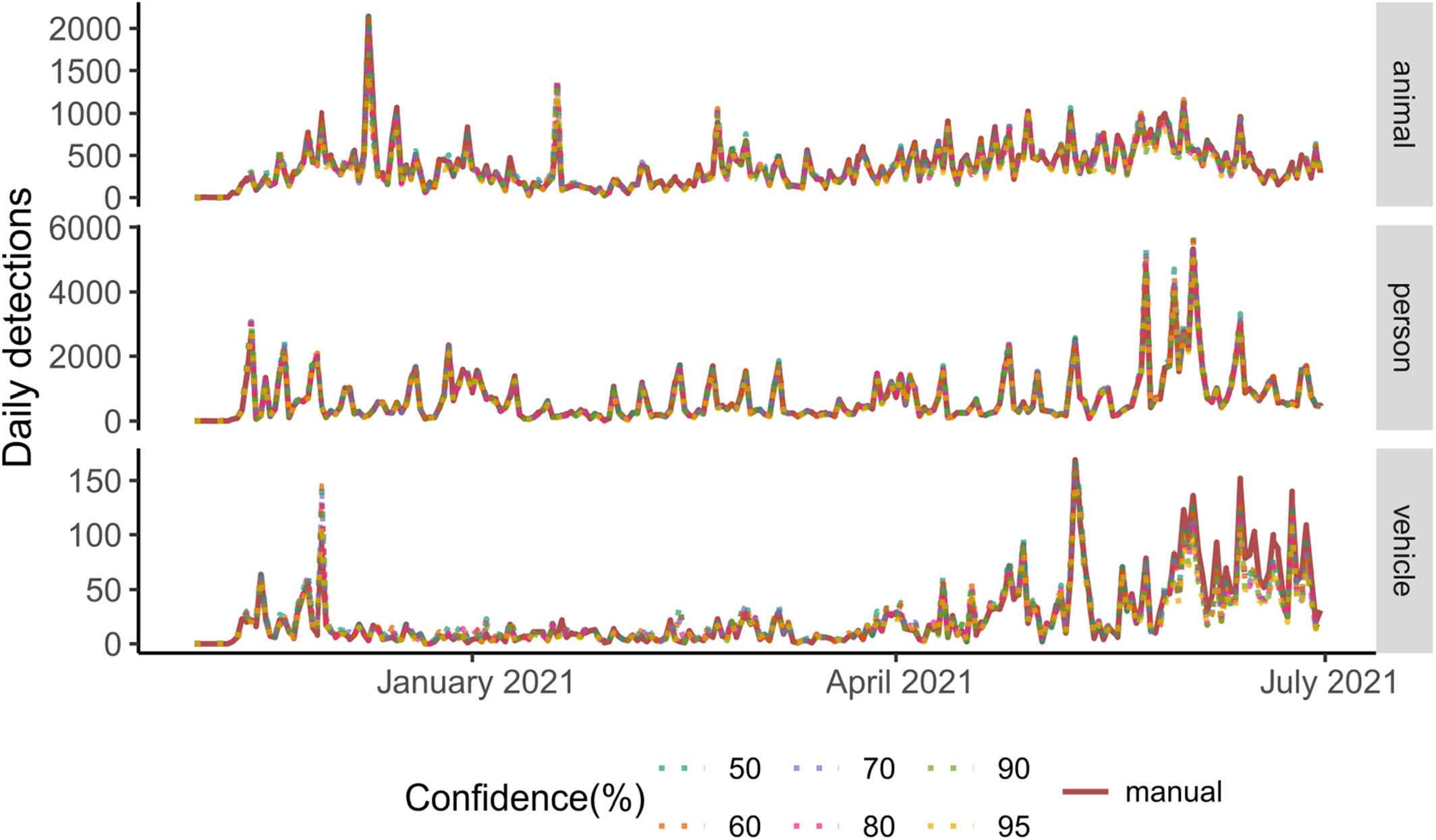

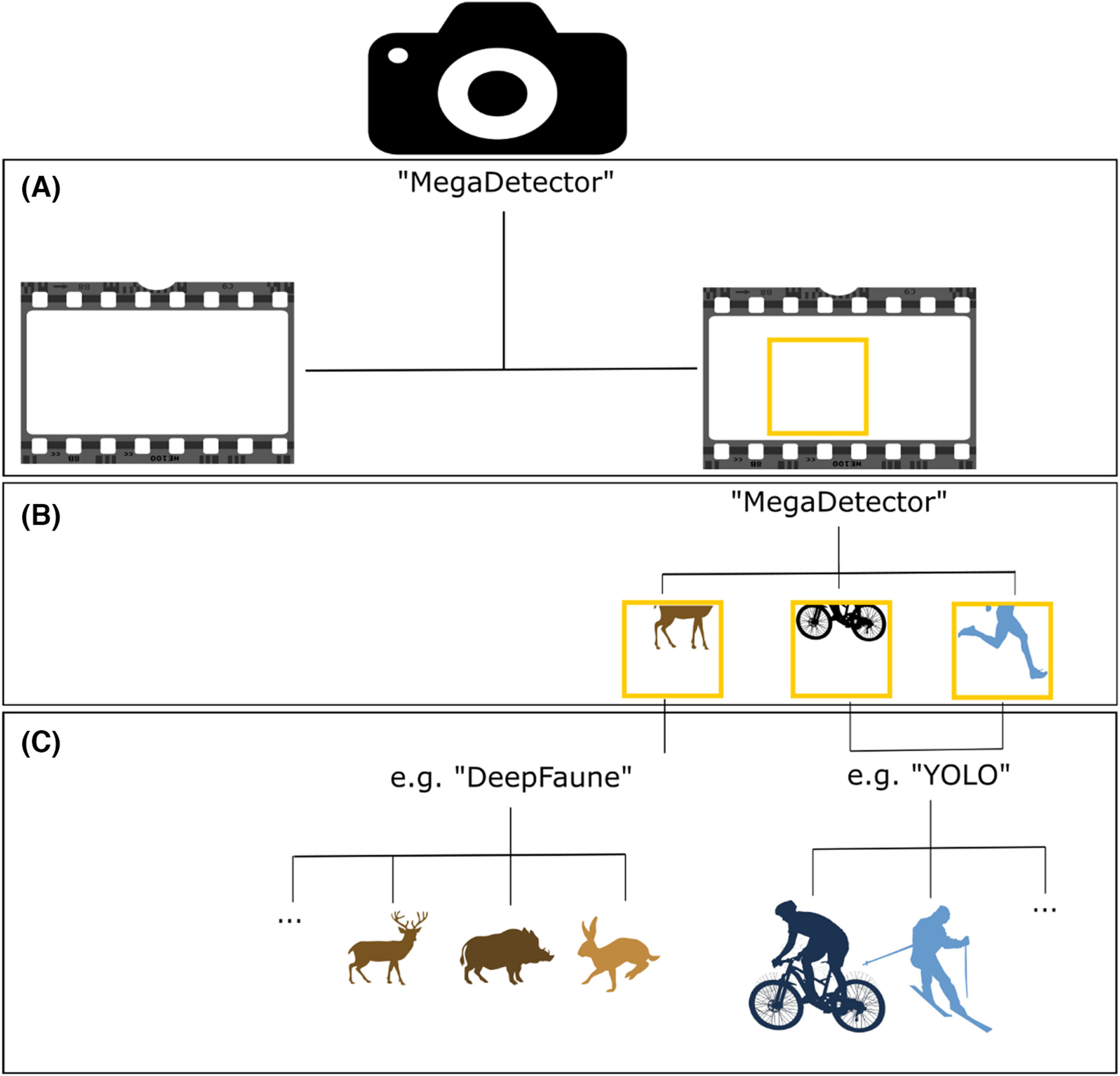

Compared two approaches - Dense Prediction Transformers (DPT) and Depth Anything (DA) - to monocular depth estimation in camera trap videos.

Worked with 220 camera trap videos from 65 locations in Côte d’Ivoire. A reference video with known distances marked was recorded at each location, and experts reviewed every video to estimate depth based on the reference video.

Used MD to crop animals, then used SAM to get instance masks, then used DPT/DA to estimate depth. Found that DPT provided better depth estimates, although DA provided more fine-grained detail. Using DPT gave abundance estimates “well inside the uncertainty cone of CTDS” (camera trap distance sampling).
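Once an animal has a mask, the depth map has to be reduced to a single distance per detection before it can feed into distance sampling. One simple option is the median depth over the masked pixels, sketched below. This aggregation is my assumption (the paper may do it differently), and it assumes the depth map is already metric; some monocular depth models output relative depth, which would need calibrating against the reference videos first.

```python
from statistics import median


def animal_distance(depth, mask):
    """Median depth over the pixels covered by an animal's mask.

    depth: 2D list of per-pixel depth values (assumed already metric).
    mask: 2D list of booleans, True where the segmentation mask covers the animal.
    Returns None if the mask is empty.
    """
    values = [d for drow, mrow in zip(depth, mask) for d, m in zip(drow, mrow) if m]
    return median(values) if values else None


depth = [[10, 10, 10], [4, 5, 30], [10, 6, 10]]
mask = [[False, False, False], [True, True, True], [False, True, False]]
print(animal_distance(depth, mask))  # 5.5
```

The median is used here because mask edges often leak onto background pixels (like the 30 above), and a mean would be pulled toward the background distance.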

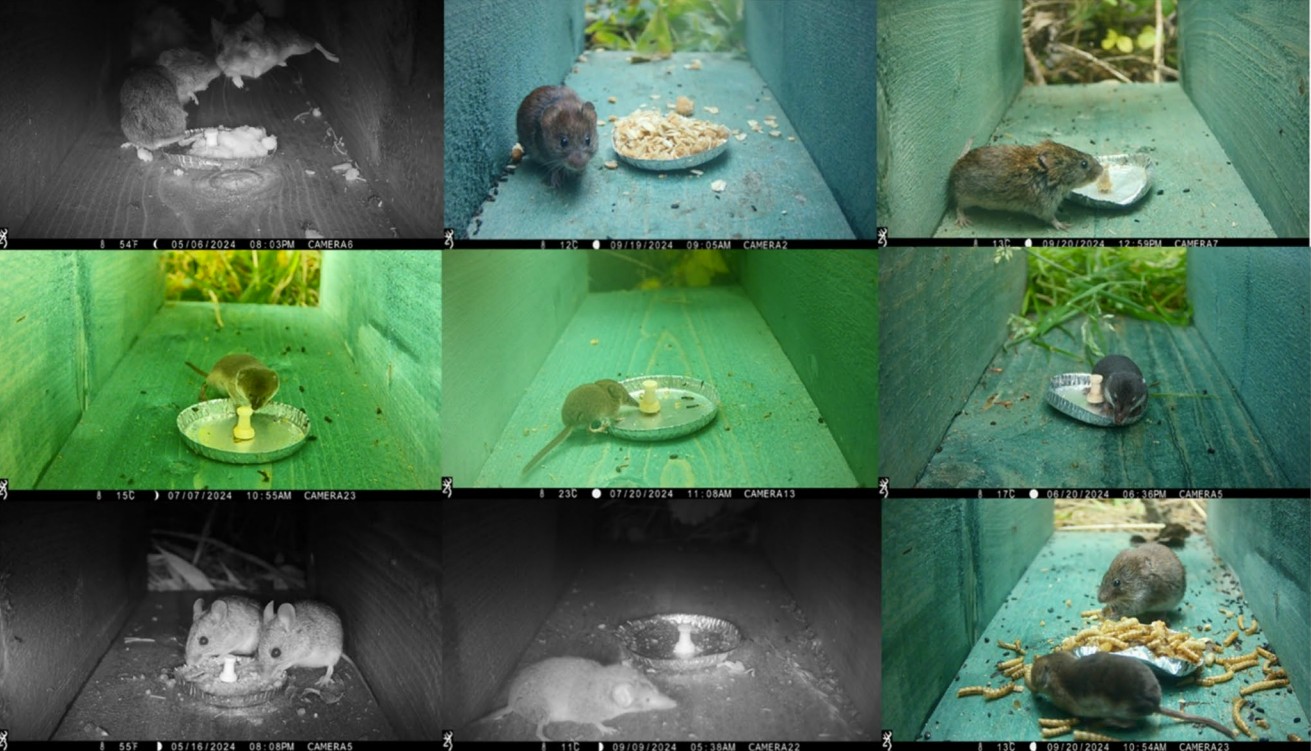

Sharpe CR, Hill RA, Diggins SY, Stephens PA. Camera trapping for small mammals: the case of a non-native shrew. European Journal of Wildlife Research. 2026 Feb;72(1):20.

“Investigated the optimal bait strategy to maximise small mammal detections in the Northeast of England within the currently known range of the non-native greater white toothed shrew … We found no significant difference in the probability of detection of small mammal species by bait type, but there were greater numbers of captures of shrew species at traps baited with mealworms. We conclude that the use of bait is associated with a greater number of captures for all small mammal species observed compared to non-baited traps.”

Used small baited boxes, deployed 12 cameras at each of eight sites (108 unique locations total). Used MammalWeb (which uses MegaDetector) to process images, assessed a subset of sequences to evaluate MD performance, found that “Of sequences that Megadetector classifies as containing ‘Nothing’ (936 sequences), there were only 18 in which C.S. alternatively identified an animal”. (Breaking the fourth wall: I’m pleasantly surprised by this! I’m usually quite cautious about encouraging anyone to use MD for small animal cameras.) All that said, these boxes are not super-prone to false triggers, so MD only reduced their total number of sequences from ~50k to ~47k. MD version unspecified, but the threshold of 0.1 implies MDv5.

Hughes C, Caouette A, Lorentz B, Scherger J, Becker M, Harrison WC. Lessons Learned for Using Camera Traps to Understand Human Recreation: A Case Study from the Northern Rocky Mountains of Alberta, Canada. Land. 2026 Jan 7;15(1):120.

Experience report on their use of camera traps to monitor recreation activity in a large area in the Rocky Mountains of Alberta, spanning several parks. Deployed 60 cameras from 2021 to 2023, totaling ~650k images. Used WildTrax for image review, and used MDv5 within WildTrax.

Found some challenges in assessing recreational activity, e.g. “…inconsistent numbers of inbound and outbound images tagged. This means some recreationists likely have routes that are unknown to us and therefore not monitored with a camera; that they were traveling too fast (i.e., on a mountain bike, by horse, or in a vehicle) to be detected by the camera; or purposefully avoided detection by the camera.”

Dan steps aside to email the authors about the camera trap vehicle classifier and SpeciesNet…

…and we’re back.

Shapira O, Izhaki I, Zemah-Shamir S, Malkinson D. How Pastoral Are Pastoral Landscapes? Scavenger Assemblage Structure in Human-Dominated Landscapes: A Case Study From Mediterranean Pastures. Ecology and Evolution. 2026 Jan;16(1):e72839.

They conduct an “in situ ‘cafeteria’ field experiment” (awesome phrase) in Israel, to assess scavenger activity when domesticated carrion (cattle) and native carrion (boar) are placed in both pastoral and non-pastoral landscapes. Monitored predator activity with camera traps; found that foxes preferred native species, other predators exhibited no preference. Deployed 14 camera traps for up to 14 days, capturing one still plus a 30s video. Used MegaDetector with a 20% confidence threshold on images and videos, reviewed in Timelapse.

Hitchcock K, Tollington S, Yarnell RW, Williams LJ, Hamill K, Fergus P. Optimizing the Accuracy and Efficiency of Camera Trap Image Analysis: Evaluating AI Model Performance and a Semi-Automated Workflow. Remote Sensing, 18(3), 502. https://doi.org/10.3390/rs18030502. 2026.

Evaluate the Conservation AI UK mammals model, find that “Initial … model outputs demonstrated high precision (>0.80) for foxes and hedgehogs but low recall (<0.50) for hedgehogs. Following retraining, AI model performance improved substantially. However, discrepancies between AI and human classifications remained statistically significant, indicating that human accuracy still outperformed that of the AI model. Recall scores for hedgehogs also remained low despite these improvements.”

Work with data from 494 camera traps in the UK, totaling ~851k images. Used Timelapse to create ground truth for all the images that were sent to Conservation AI. Found that accuracy went up with site-specific training, though I couldn’t find details about how that training worked. Also propose a MegaDetector + Conservation AI workflow (using MD for blank elimination, not for cropping), but evaluating that workflow is outside the scope of this paper.

Gaya HE, Jorge MH, Jorge LA, Ruder MG, D’Angelo GJ, Chandler RB, Chamberlain MJ. White-tailed deer population declines in a high-prevalence chronic wasting disease region of Arkansas, USA. PLOS ONE. 2026 Jan 7;21(1):e0340070.

“We collected data from 243 camera traps and deployed GPS-collars on 131 adult deer to monitor population dynamics. … Deer densities declined at all three study sites … These findings suggest that CWD can negatively impact deer populations through direct reductions in density, but additional research is needed to determine if additional factors contributed to these declines. Furthermore, our findings suggest the populations we studied are not sustainable under current harvest regulations.”

Used MegaDetector to eliminate blanks, and used MegaClassifier (!) to identify species. Also trained a YOLO object detector to classify sex/age and the presence of tags/collars (details not provided, and it’s not made clear why they trained a detector for sex/age rather than a classifier).

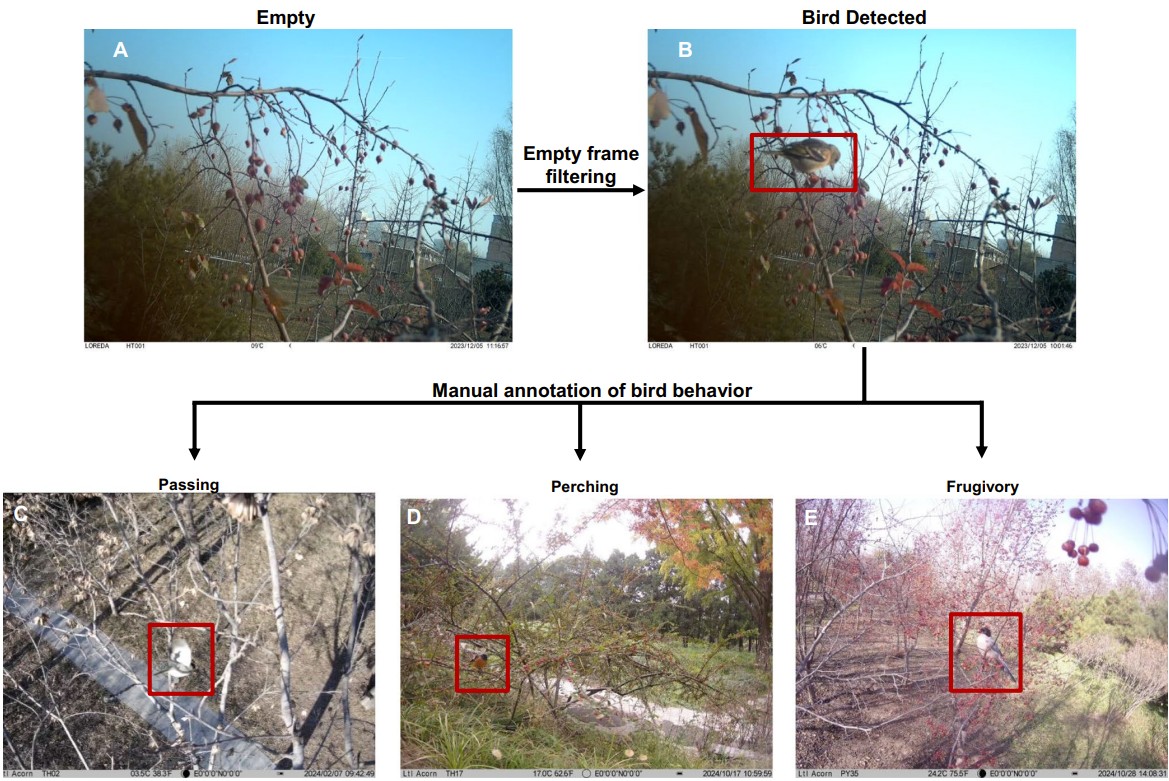

Liu X, Yang X, Zhou J, Li X, Guo Z, Yang J. Patterns of Avian Frugivory in Beijing’s Urban Forest Across Multiple Temporal Scales. Ecology and Evolution. 2025 Dec;15(12):e72699.

“…we employed arboreal camera trapping to investigate the temporal patterns of bird–fruiting tree interactions across hourly to annual time scales in three urban forest sites in Beijing, China. We captured 618 independent frugivory events involving 19 bird species and 12 fruiting tree species from 584,886 images recorded in 12 months. Large-scale temporal events like fruiting phenology and bird migration had primary effects on the seasonal patterns of frugivory events, while site conditions, including fruit tree diversity and human activities, significantly affected the monthly and diurnal patterns. The structure of the interaction network also reflected the effects of these factors. Our results show that maintaining consistent fruit availability and minimizing human disturbances are critical for sustaining avian frugivory in urban forests.”

35 cameras total across three sites, pointed inward at trees; each trigger captured three photos and a 10s video. Used MegaDetector for blank elimination, reviewed behaviors in videos in Timelapse. If I’m reading their description right, they manually reviewed 1% of images from each monitoring site, and adjusted their MD threshold to ensure 95% recall.
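Picking an MD threshold to hit a target recall on a manually reviewed sample can be sketched as below: recall can only fall as the threshold rises, so scan candidate thresholds from high to low and return the first one that meets the target. The 95% target comes from the paper; the candidate grid (0.00 to 1.00 in steps of 0.01), the input format, and the function name are my assumptions.

```python
def threshold_for_recall(samples, target_recall=0.95, candidates=None):
    """Highest threshold whose recall on a labeled sample meets the target.

    samples: list of (max_md_conf, has_animal) pairs from manually reviewed images.
    candidates: thresholds to try; defaults to 0.00, 0.01, ..., 1.00.
    Returns None if no candidate reaches the target.
    """
    positives = [conf for conf, has_animal in samples if has_animal]
    if not positives:
        raise ValueError("sample contains no images with animals")
    if candidates is None:
        candidates = [i / 100 for i in range(101)]
    # Recall is non-increasing in the threshold, so the first hit scanning downward is the highest
    for t in sorted(candidates, reverse=True):
        recall = sum(conf >= t for conf in positives) / len(positives)
        if recall >= target_recall:
            return t
    return None


# Toy sample: 20 images with animals (one with a low MD score) plus 10 blanks
sample = [(0.05, True)] + [(0.8, True)] * 19 + [(0.02, False)] * 10
print(threshold_for_recall(sample))  # 0.8
```

One caveat: with only 1% of images reviewed, the recall estimate at any threshold is noisy, especially for rare species, so in practice you'd probably back off a bit from the threshold this returns.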

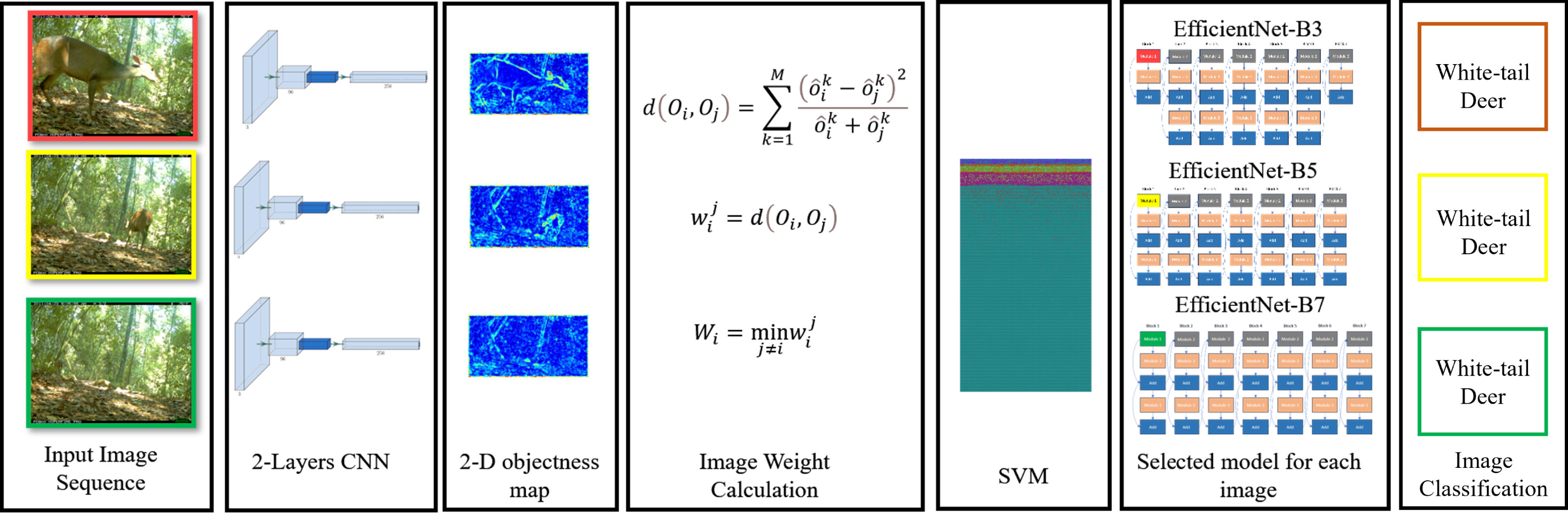

Yousif H, Al-Milaji Z. Image classification and object detection complexity optimization: Exploring deep learning models on camera trap and surveillance clips. Results in Control and Optimization. 2026 Jan 3:100654.

Propose the use of an ensemble of CNNs to classify images in a sequence, where different images in the sequence might be passed to different models based on image properties (particularly animal size). They don’t do an explicit detection step, rather they look at the statistics of an initial CNN to determine the appropriate resolution model for each image.

Evaluate on Missouri Camera Traps, as well as surveillance videos.

Their initial CNN (for choosing CNN resolution) is AlexNet trained on Snapshot Serengeti.

Sha L, Tian Y, Zhang J. GDP-CT: Grouped data pruning for camera trap. Expert Systems with Applications. 2026 Mar 1;299:130234.

Propose a data selection strategy for training camera trap AI models. Evaluate on Caltech Camera Traps and iWildCam. Remove blanks with MDv5a. Sample images for training based on both a maximum number of images per category and a desired distribution across locations. Train ViT-S/14 with DINOv2 weights, compare their pruning approach to baselines (at the same total data volume), e.g. random selection. Find substantial F1 improvement when using their image selection method.
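The general idea of capping the number of images per category while spreading the selection across locations can be sketched as a round-robin over locations within each category. This is a simplified stand-in to show the shape of the idea, not the GDP-CT algorithm itself; the function name and the (image_id, category, location) input format are hypothetical.

```python
from collections import defaultdict
from itertools import zip_longest

_MISSING = object()  # filler for locations that have run out of images


def capped_location_balanced(samples, max_per_category):
    """Select up to max_per_category images per category, round-robin across locations.

    samples: list of (image_id, category, location) tuples.
    Returns a list of selected image_ids.
    """
    # category -> location -> [image_ids], preserving input order
    groups = defaultdict(lambda: defaultdict(list))
    for image_id, category, location in samples:
        groups[category][location].append(image_id)

    selected = []
    for by_location in groups.values():
        picked = []
        # Each round takes the next image from every location in turn
        for round_ in zip_longest(*by_location.values(), fillvalue=_MISSING):
            for image_id in round_:
                if image_id is not _MISSING and len(picked) < max_per_category:
                    picked.append(image_id)
            if len(picked) >= max_per_category:
                break
        selected.extend(picked)
    return selected


data = [("a1", "deer", "A"), ("a2", "deer", "A"), ("a3", "deer", "A"),
        ("b1", "deer", "B"), ("f1", "fox", "A"), ("a4", "deer", "A")]
print(capped_location_balanced(data, 3))  # ['a1', 'b1', 'a2', 'f1']
```

Compared to random selection at the same total volume, this keeps a single busy camera from dominating a category, which is the kind of location bias that makes camera trap models generalize poorly to new sites.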

Ploquin OA, Basso O, Ndlovu M, Prugnolle F, Caron A, Munzamba M, Porovha E, Nkomo K, Corbel G, Arnathau C, Boissière A. Using social network analysis and non-invasive antibody detection to explore pathogen exposure in wildlife communities. bioRxiv. 2025:2025-12.

Survey 14 water holes in Zimbabwe with camera traps, also collect fecal samples to monitor the presence of foot-and-mouth disease antibodies in herbivores. The conclusion is methodological, not ecological, i.e. their goal is to demonstrate that fecal sampling with antibody screening can facilitate disease monitoring.

Used two cameras at each site, operated 15 days per month over a year, with 1-minute timelapse recordings during the day and motion triggering at night. Used MegaDetector in TrapTagger to eliminate blanks, reviewed images in TrapTagger.

Markoff M, Bengtson SH, Ørsted M. Vision Transformers for Zero-Shot Clustering of Animal Images: A Comparative Benchmarking Study. arXiv, February 2026.

Evaluate five ViT architectures for clustering; conclude that DINOv3 with t-SNE provides near-supervised-level performance in an unsupervised framework. Use MDv1000-redwood for cropping, then cluster crops. Used almost every camera trap dataset on LILA (23 projects), ended up with ~139k crops. Initially classified with SpeciesNet, though it’s not clear to me why, since they already had labels from LILA. An expert reviewed all the labels in any case.

Compared:

- CLIP, SigLIP, DINOv2, DINOv3, and BioCLIP 2 for feature generation

- UMAP, t-SNE, PCA, Isomap, and Kernel PCA for dimensionality reduction

- DBSCAN, HDBSCAN, Hierarchical Clustering, and GMMs for clustering

Found that DINOv3 outperformed other embedding spaces, t-SNE outperformed other dimensionality reduction approaches, and HDBSCAN outperformed DBSCAN.

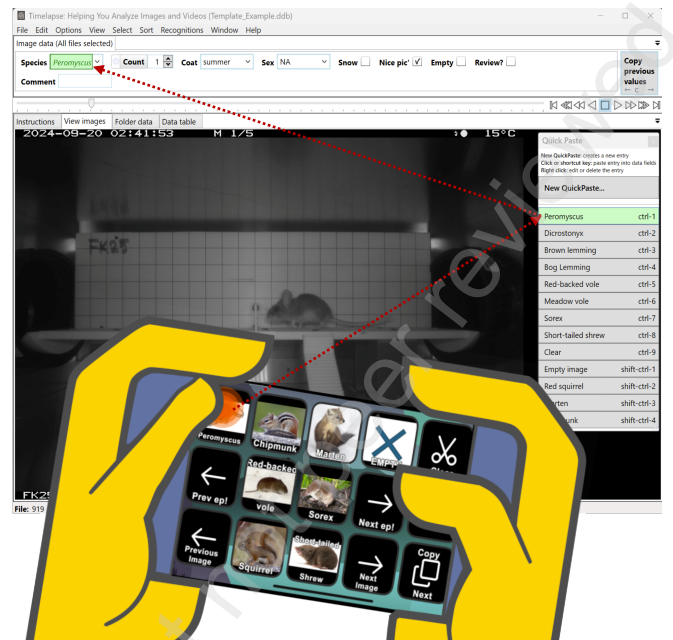

Bolduc D, Fauteux D, Genest MA, Legagneux P. Speeding up image annotation and AI-model training for wildlife images. Available at SSRN 6616203.

Propose a pipeline for rapid semi-automated labeling of camera trap images for training custom models. The pipeline uses MegaDetector to eliminate blanks and localize objects, Timelapse to label images, and (if you want to train a segmenter) SAM3 to generate bounding contours. Evaluate on a small-animal dataset, which (breaking the fourth wall) is IMO a good use case for this, since MD is hit-or-miss on small animal cameras. They also specifically discuss the speedup they get from connecting a StreamDeck handheld media controller to Timelapse.

Code is here.

Zampetti A, Santini L, Baltzinger C, Beirne C, Bowler MT, Cedeño-Panchez BA, Ferreiro-Arias I, Forget P-M, Guilbert E, Kemp YJM, Paltrinieri L, Ortiz I, Peres CA, Scabin AB, Benítez-López A. Introducing TropiCam-AI: An automated classifier of Neotropical arboreal mammals and birds from camera-trap images. Methods in Ecology and Evolution. 2026.

Train ConvNeXt-Base on 84 arboreal taxa from South America. Incorporate taxonomic fallback into their analysis, analogous to SpeciesNet. Trained on ~85k images and ~26k videos from camera traps, and ~100k images from iNat (seven categories came only from iNat). Used MDv5 with a threshold of 0.8 for training (0.2 for inference), classified crops. Split at the sequence level, rather than the camera level. Used a rollup classification threshold of 0.75. Model is available in AddaxAI, code is here.
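Taxonomic fallback of the kind described here (and in SpeciesNet) can be sketched as follows: if no species clears the threshold, sum the scores of species that share a genus, then a family, then an order, and report the most specific level where some group clears it. This is a generic sketch under my own assumptions (the tiny taxonomy table, the function name, and summing softmax scores are illustrative); it is not TropiCam-AI's code, and only the 0.75 threshold comes from the paper.

```python
from collections import defaultdict

# Hypothetical taxonomy table: species -> (genus, family, order)
TAXONOMY = {
    "Alouatta seniculus": ("Alouatta", "Atelidae", "Primates"),
    "Alouatta palliata": ("Alouatta", "Atelidae", "Primates"),
    "Ateles belzebuth": ("Ateles", "Atelidae", "Primates"),
    "Potos flavus": ("Potos", "Procyonidae", "Carnivora"),
}
LEVELS = ["genus", "family", "order"]


def rollup(scores, threshold=0.75):
    """Return (level, label, score) for the most specific confident prediction.

    scores: dict mapping species name -> softmax score.
    """
    species, score = max(scores.items(), key=lambda kv: kv[1])
    if score >= threshold:
        return "species", species, score
    for i, level in enumerate(LEVELS):
        # Sum species scores within each taxon at this level
        totals = defaultdict(float)
        for sp, s in scores.items():
            totals[TAXONOMY[sp][i]] += s
        label, total = max(totals.items(), key=lambda kv: kv[1])
        if total >= threshold:
            return level, label, total
    return "unknown", None, None


scores = {"Alouatta seniculus": 0.5, "Alouatta palliata": 0.3,
          "Ateles belzebuth": 0.1, "Potos flavus": 0.1}
print(rollup(scores))  # genus-level Alouatta, since 0.5 + 0.3 clears 0.75
```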

Granados A, McDonald Z, Le C, Cohen B, Stoner D. Illuminated landscapes: urbanization’s influence on predator and prey behavior. Urban Ecosystems. 2026 Apr;29(2):82.

“Using data from 61 camera trap stations in two contrasting urban landscapes in California, USA, we examined the influence of multiple anthropogenic factors on the diel activity of an apex predator (puma), a mesocarnivore (bobcat), and an ungulate prey species (mule deer). We quantified the effects of artificial light at night (ALAN), proximity to noise pollution, moonlight intensity, and co-occurring wildlife on nighttime habitat use.”

“Pumas and bobcats were less active in areas with more ALAN. Pumas also avoided areas of high human use but were more active where mule deer were present. In contrast, mule deer increased nighttime activity in artificially illuminated areas while avoiding noise and bright moonlight, consistent with predictions under the human shield hypothesis.”

Processed images from 35,416 trap days with MD via WildePod, and reviewed non-empty images manually in WildePod.

Brundage D. Generating Synthetic Wildlife Health Data from Camera Trap Imagery: A Pipeline for Alopecia and Body Condition Training Data. arXiv preprint arXiv:2603.26754. 2026 Mar 23.

This paper’s goal is to use synthetic data to train an alopecia classification model for eight North American mammals. They use Gemini 3.1 Flash Image (aka Nano Banana 2) to generate images in which body condition and alopecia state are modified from a real image of a healthy animal. They use MDv4 to detect the region of the image that should be modified; if too much of the image changes outside that region, the edit is considered likely to be an incorrect scene modification.
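The out-of-region check can be sketched as "what fraction of pixels outside the detection box changed noticeably?". This is a minimal sketch under my own assumptions (grayscale images as nested lists, a per-pixel tolerance of 10, and a 5% cutoff, all hypothetical); the paper's exact criterion may differ.

```python
def outside_change_fraction(original, edited, box, pixel_tol=10):
    """Fraction of pixels outside `box` that changed by more than pixel_tol.

    original, edited: same-sized 2-D lists of grayscale values (0-255)
    box: (x_min, y_min, x_max, y_max) in pixels, max coordinates exclusive
    """
    x0, y0, x1, y1 = box
    changed = total = 0
    for y, (row_a, row_b) in enumerate(zip(original, edited)):
        for x, (a, b) in enumerate(zip(row_a, row_b)):
            if x0 <= x < x1 and y0 <= y < y1:
                continue  # inside the detection box, where edits are expected
            total += 1
            if abs(a - b) > pixel_tol:
                changed += 1
    return changed / total if total else 0.0


def looks_like_bad_edit(original, edited, box, max_outside_fraction=0.05):
    """Hypothetical rule: flag edits that change too much outside the box."""
    return outside_change_fraction(original, edited, box) > max_outside_fraction


original = [[100] * 4 for _ in range(4)]
edited = [row[:] for row in original]
edited[1][1] = edited[2][2] = 200  # changes inside the box (1, 1, 3, 3)
print(looks_like_bad_edit(original, edited, (1, 1, 3, 3)))  # False
edited[0][0] = 200  # one changed pixel outside the box: 1/12 of outside pixels
print(looks_like_bad_edit(original, edited, (1, 1, 3, 3)))  # True
```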

They train a classifier on a frozen DINOv2 backbone and run a sim-to-real transfer experiment using 25 images from camera trap forums where participants identified likely disease states; they report good results (but YMMV, that’s a small dataset).

Synthetic data is here.

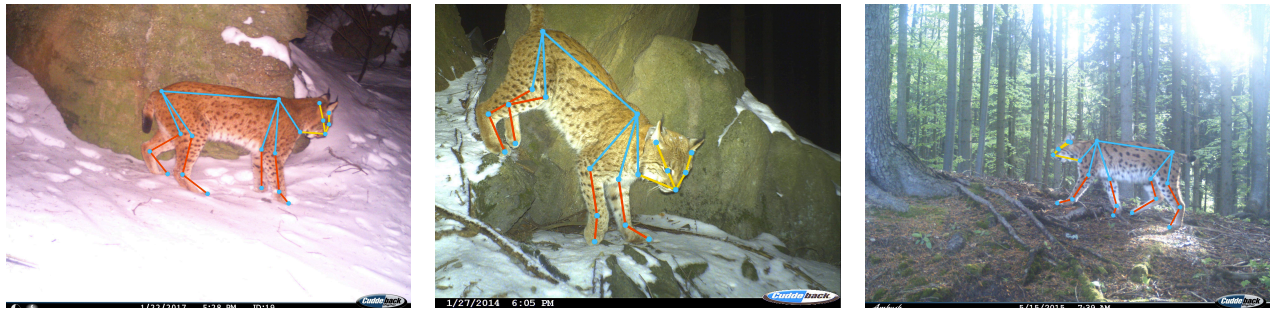

Picek L, Straka J, Jirik M, Belotti E, Duľa M, Krausová J, Bojda M, Cermak V, Bufka L, Dvořák R, Hrdý L. CzechLynx: A dataset for individual identification and pose estimation of the Eurasian lynx. Scientific Data. 2026 Feb 24.

They describe a dataset of ~40k camera trap images of ~319 individual lynx with segmentation masks, identity labels, and skeletal decompositions.

Some of the original data was stills, some was video; they used MDv5 to choose the first and last frames with detections > 0.7 from each video, plus three frames in between with large boxes.
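The video frame-selection rule can be sketched as follows. I'm interpreting "large boxes" as "the largest confident box in the frame, by area"; the per-frame `(conf, (x, y, w, h))` tuple format is a hypothetical simplification, not MegaDetector's output format, and this is not the authors' code.

```python
def select_frames(frame_detections, conf_threshold=0.7, n_middle=3):
    """Choose a handful of frames from one video.

    frame_detections: one list per frame of (conf, (x, y, w, h)) tuples,
        with box coordinates normalized to [0, 1].
    Returns sorted frame indices: the first and last frames with a detection
    above conf_threshold, plus up to n_middle frames between them whose
    largest confident box has the biggest area.
    """
    def confident(dets):
        return [box for conf, box in dets if conf > conf_threshold]

    def largest_area(dets):
        return max((w * h for (x, y, w, h) in confident(dets)), default=0.0)

    good = [i for i, dets in enumerate(frame_detections) if confident(dets)]
    if not good:
        return []
    first, last = good[0], good[-1]
    middle = [i for i in good if first < i < last]
    middle.sort(key=lambda i: largest_area(frame_detections[i]), reverse=True)
    return sorted({first, last, *middle[:n_middle]})


frames = [
    [],                                 # 0: nothing detected
    [(0.9, (0.1, 0.1, 0.1, 0.1))],     # 1: first confident frame
    [(0.8, (0.1, 0.1, 0.5, 0.5))],     # 2: area 0.25
    [(0.5, (0.1, 0.1, 0.9, 0.9))],     # 3: big box, but below threshold
    [(0.95, (0.1, 0.1, 0.3, 0.3))],    # 4: area 0.09
    [(0.75, (0.1, 0.1, 0.2, 0.2))],    # 5: area 0.04
    [(0.85, (0.1, 0.1, 0.4, 0.4))],    # 6: area 0.16
    [(0.9, (0.1, 0.1, 0.1, 0.2))],     # 7: last confident frame
    [],                                 # 8: nothing detected
]
print(select_frames(frames))  # [1, 2, 4, 6, 7]
```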

They also describe a pipeline for generating synthetic images (with Unity, not with LLMs).

Dataset is here.

Papers from 2025

Mason RT, Rendall AR, Sinclair RD, Pestell AJ, Ritchie EG. What’s on the menu? Examining native apex- and invasive meso-predator diets to understand impacts on ecosystems. Ecological Solutions and Evidence. 2025 Apr;6(2):e70032.

Assessed the impacts of dingo, fox, and domestic cat predation on native and invasive prey in a semi-arid ecosystem in Southeast Australia. Found that “dingoes … primarily consume large overabundant herbivores, while mesopredators, invasive foxes and cats, place disproportionate predatory pressure on smaller prey”. Collected scat along 451 transects in 2022/2023, and placed camera traps at 160 of them.

Used MDv4 in Timelapse to remove blanks; species identification was manual.

Boyce PM, McLoughlin PD. Habitat selection and occupancy of feral horses in comparison to cattle and elk in the Rocky Mountain Foothills of Canada. Frontiers in Conservation Science. 2025 May 23;6:1585546.

Use 120 cameras in Alberta to monitor occupancy of horses, domestic cattle, and elk. Found that “trail-camera occupancy analyses … pointed to the presence of cattle as a potential modulator of horse habitat use” and that “elk summer occupancy increased with decreasing distance to conifer forest and increasing native rangeland”.

About a third of their images were processed manually by students, and the rest were initially processed by MD (version unspecified); the authors then reviewed (for the manual first pass) or classified (for the MD first pass) all non-empty images from either batch.

Gurney SM, Christensen SA, Nichols MJ, Stewart CM, Williams DM, Mayhew SL, Gilbert NA, Etter DR. Harvest restrictions fail to influence population abundance. Ecosphere, August 2025.

Use camera traps to assess the impact of limiting hunting via antler point restrictions (prohibiting the harvest of bucks with fewer than four points on one antler) in Michigan. The goal of this restriction was to increase the population of legal-antlered deer (older males) while reducing the population overall. Found that the restriction did not influence overall population; population increased in all categories. It’s unknown whether that’s because enforcement was incomplete or because the strategy is ineffective.

Placed 144 cameras for two months each year for four years (2019-2022). Used MDv3 to remove blanks (removed 44% of their images).

Miller S, Kirkland M, Hart KM, McCleery RA. Object detection-assisted workflow facilitates cryptic snake monitoring. Remote Sensing in Ecology and Conservation. 2025.

Evaluate MDv5 on a snake dataset from Florida, where MD doesn’t typically do very well. They used a confidence threshold of 0.05 (appropriate, given how difficult snakes are for MDv5). Had data from 42 time-triggered cameras, yielding 1.5M pictures over four weeks. Reviewed images in Timelapse. Manual review found 378 snakes (i.e., sequences with snakes) across 2747 total images; AI-accelerated review found 217 snakes in 487 total images. Interestingly, the detected snakes didn’t overlap as much as one might expect: the total number of distinct snakes found by either method was 447, i.e., AI-accelerated review was worse overall, but found (447-378) = 69 snakes that manual review missed. Hybrid review was 1672% (!) faster than manual processing (excluding compute time).
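The overlap between the two review methods follows from inclusion-exclusion on the three counts above, assuming 447 is the number of distinct snakes found by either method (it can’t be a plain sum, since 378 + 217 = 595). This is just arithmetic on the reported numbers, not a figure quoted from the paper.

```python
manual = 378  # snakes found by manual review
ai = 217      # snakes found by AI-accelerated review
union = 447   # distinct snakes found by either method

both = manual + ai - union   # inclusion-exclusion: found by both methods
ai_only = ai - both          # found only by AI-accelerated review
manual_only = manual - both  # found only by manual review
print(both, ai_only, manual_only)  # 148 69 230
```

So only 148 snakes were found by both methods, which is why combining them adds a meaningful number of detections.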

Overall, this paper is optimistic about the role of AI-accelerated review, even for cases as difficult as this (this is close to a worst-case scenario for MDv5: relatively rare images of relatively small things that were not well represented in training).

McCormack L, Hamilton G. Evaluating a popular open-source detection model for processing complex camera trap imagery. Available at SSRN 5510369.

Evaluate MDv5a on 6500 camera trap images from Queensland and Tasmania. Used confidence thresholds of 0.5 (!!!) and 0.9 (!!!!!), yielding 95-99% precision but recall values of 0.68 and 0.3, respectively. The discussion highlights poor recall on small animals in particular.

Breaking the fourth wall a little: the low recall is expected here, since those are very high confidence thresholds, higher than would ever be recommended for a production workload. In fact, those are 2.5x and 4.5x the recommended threshold of 0.2, respectively. It’s possible those thresholds were carried over from previous MDv4 workloads; otherwise, I don’t know of any sources of guidance that would have led to them. I hope the data is made available or P/R curves are published; it would be interesting to see what performance looks like closer to typical confidence threshold ranges.

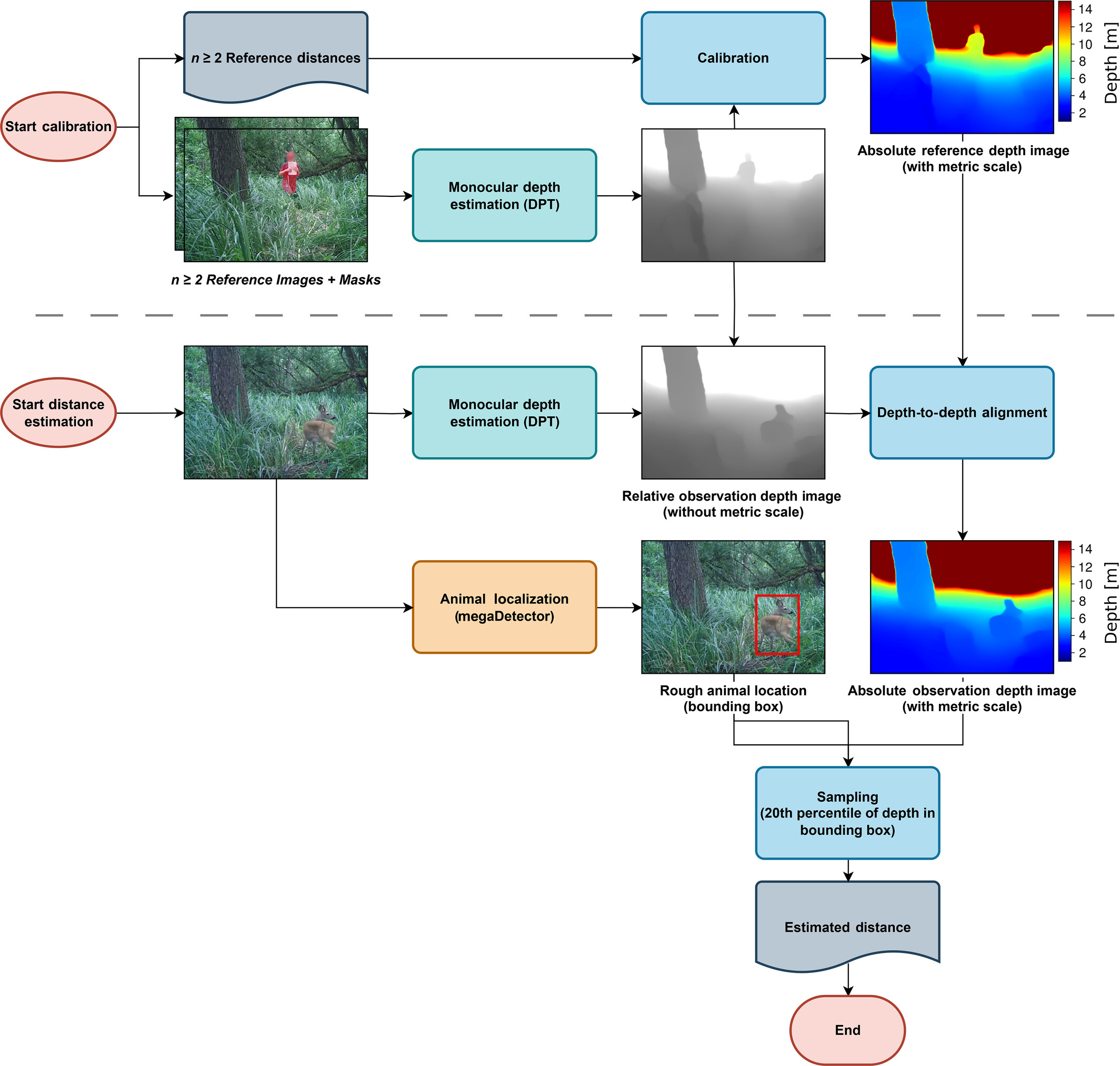

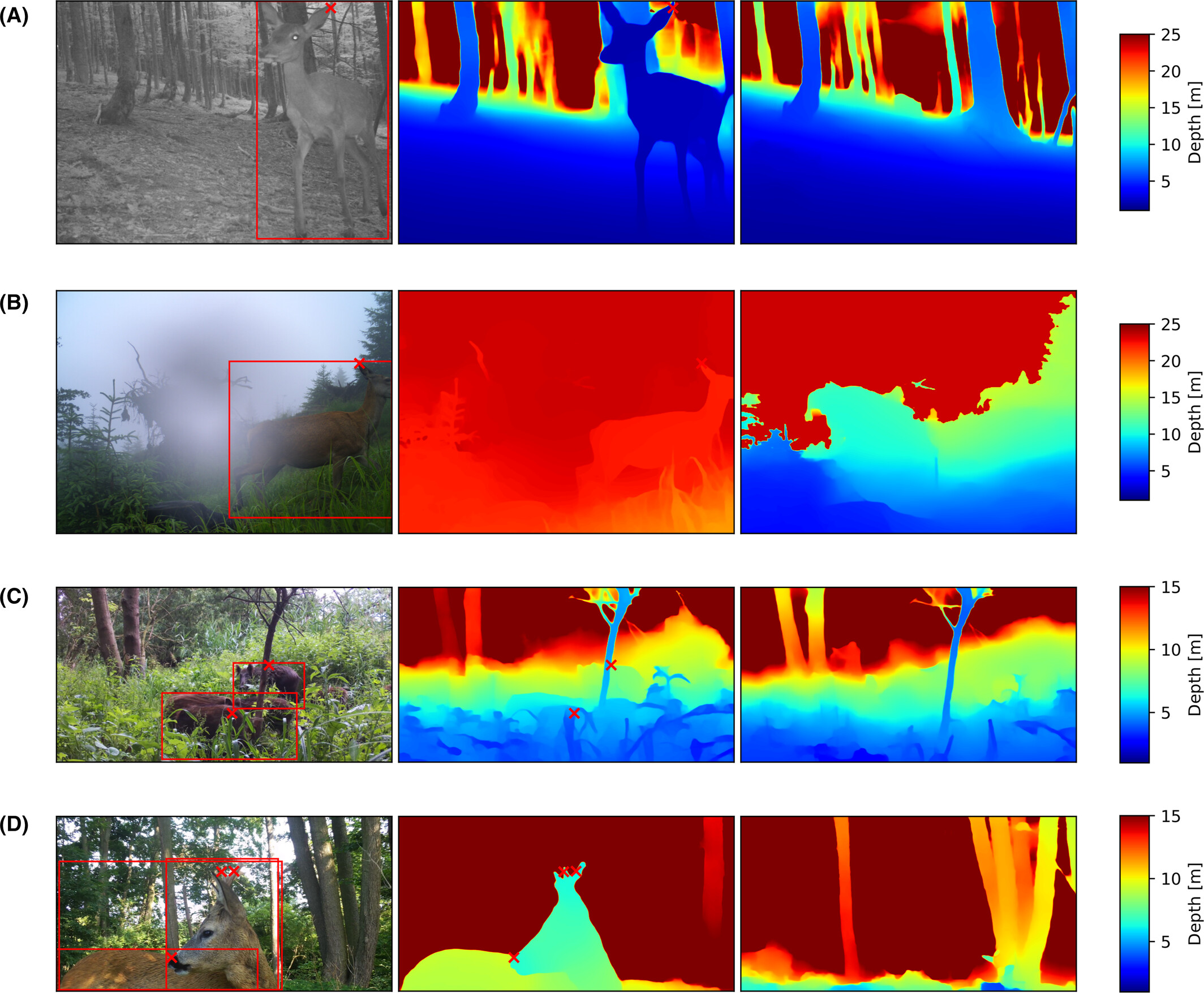
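A P/R curve of the kind I’m hoping for is just precision and recall recomputed over a sweep of thresholds. The sketch below works at the image level, assuming one max-detection confidence per image and a ground-truth "contains an animal" flag; the scores and labels in the example are toy values I made up, not data from this paper.

```python
def precision_recall(scores, labels, threshold):
    """Image-level precision and recall at one confidence threshold.

    scores: max detection confidence per image
    labels: True if the image actually contains an animal
    """
    tp = sum(s >= threshold and y for s, y in zip(scores, labels))
    fp = sum(s >= threshold and not y for s, y in zip(scores, labels))
    fn = sum(s < threshold and y for s, y in zip(scores, labels))
    # Convention: precision is 1.0 when nothing is predicted positive.
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


# Toy data, for illustration only.
scores = [0.95, 0.6, 0.3, 0.15, 0.1, 0.05]
labels = [True, True, True, False, True, False]
for t in (0.1, 0.2, 0.5, 0.9):
    p, r = precision_recall(scores, labels, t)
    print(f"threshold={t}: precision={p:.2f}, recall={r:.2f}")
```

Even on toy data, the pattern matches the discussion above: raising the threshold from 0.2 to 0.5 or 0.9 keeps precision flat while recall drops.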

Mirka B, Lippitt CD, Harris GM, Converse R, Gurule M, Sesnie SE, Butler MJ, Stewart DR, Rossman Z. A photogrammetric approach to the estimation of distance to animals in camera trap images. Ecological Informatics. 2025 Mar 26:103120.

Present and evaluate a pipeline for wildlife depth estimation with a single camera and a calibration step.

Deployed three camera traps in New Mexico, and placed 10 poles as distance markers/GCPs from 4m to 35m away from each camera. Took a radial pattern of pictures on an iPhone by walking a 20m-radius circle around the camera (not standing at the camera and spinning around, but walking a circle and taking pictures towards the camera). This was O(150) pictures per site. Used Metashape to compute a 3D scene model from images and GCPs.

Collected ~1500 images of wildlife, manually drew boxes on them (in Zooniverse), then used those boxes, along with the precomputed scene model, to estimate animal depth, using the minimum distance to the bottom of each box.

Data (including images and code) is here.
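One plausible reading of the depth step: render a per-pixel distance map from the 3D scene model, then take the minimum distance along the bottom row of each box, since the bottom of the box approximates where the animal meets the ground. This is a sketch of my interpretation, not the authors' code; the nested-list depth map with `None` for pixels without scene geometry is a hypothetical representation.

```python
def box_distance(depth_map, box):
    """Minimum scene distance along the bottom row of a bounding box.

    depth_map: 2-D list (rows x cols) of distances in meters, with None where
        the scene model has no geometry (e.g., sky)
    box: (x_min, y_min, x_max, y_max) in pixels, max coordinates exclusive
    Returns None if no pixel on the bottom row has a depth value.
    """
    x_min, y_min, x_max, y_max = box
    bottom_row = depth_map[y_max - 1][x_min:x_max]  # last pixel row inside the box
    valid = [d for d in bottom_row if d is not None]
    return min(valid) if valid else None


depth_map = [
    [None, None, None, None],   # sky
    [20.0, 19.0, 18.0, 21.0],
    [10.0, 9.0, 8.0, 11.0],     # ground closest to the camera
]
print(box_distance(depth_map, (1, 0, 3, 3)))  # 8.0
```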

Labadie M, Morand S, Bourgarel M, Niama FR, Nguilili GF, Caron A, De Nys H. Habitat sharing and interspecies interactions in caves used by bats in the Republic of Congo. PeerJ. 2025 Jan 9;13:e18145.

Used camera traps to study two caves in the Republic of Congo known to be rich bat habitats. Used MegaDetector + Timelapse for image review; indicated that “MegaDetector falsely detected the presence of animals and humans in only 2.3% of all photos”. Reviewed all non-blanks manually for species identification and human behavior categorization. Used video for behavioral tagging of both humans and animals. Main findings include “greater species diversity and richness outside caves compared to inside”, “during wet seasons, bats tend to be more numerous”, frequent detection of rodents, and extensive use of one of the caves by humans, for prayer activity and bat/guano harvesting.

They did not do a systematic recall analysis, and they highlight that “data processing with [MegaDetector] may also have had an impact on the detection of bats and insects, as it is not yet perfectly calibrated for this type of taxon”.

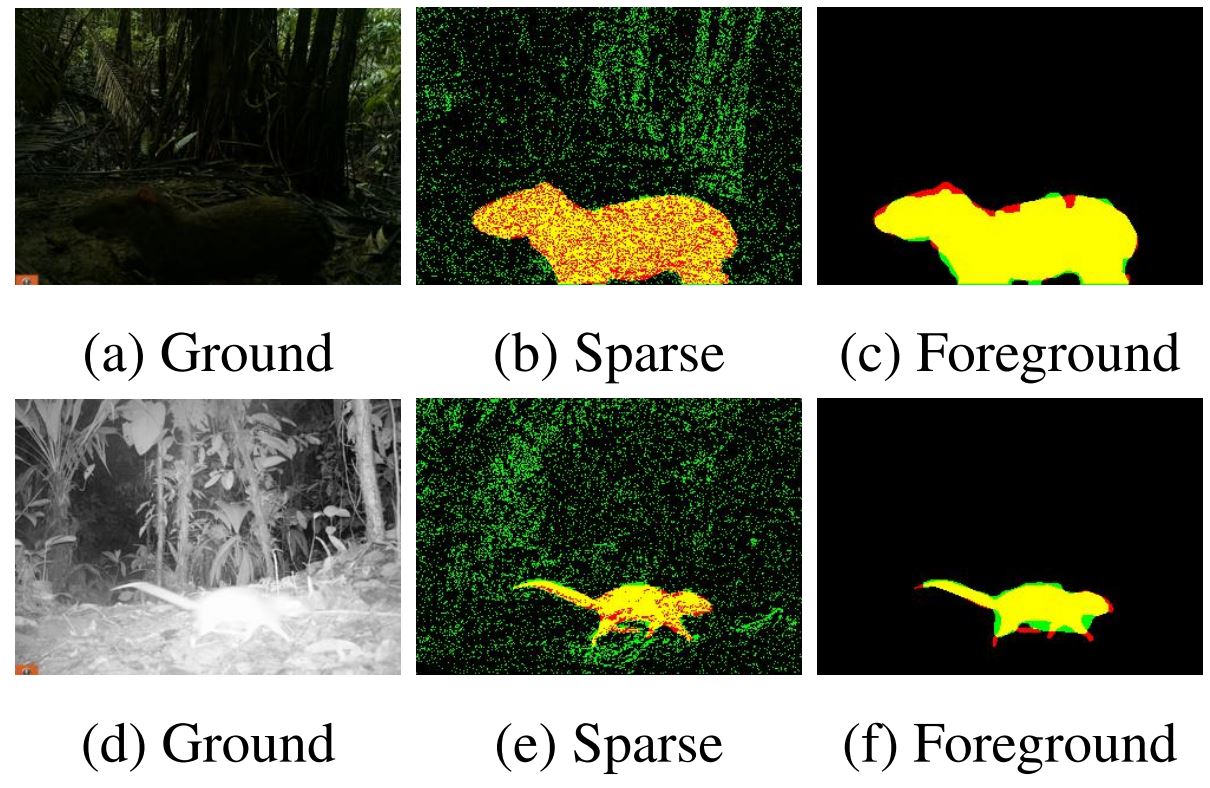

Pestell AJ, Rendall AR, Sinclair RD, Ritchie EG, Nguyen DT, Corva DM, Eichholtzer AC, Kouzani AZ, Driscoll DA. Smart camera traps and computer vision improve detections of small fauna. Ecosphere. 2025 Mar;16(3):e70220.

Compare AI performance of a smart camera (“DeakinCams”) to traditional cameras with MegaDetector, on 86k and 51k videos, respectively. Found that overall MD had higher accuracy (99% precision @ 98% recall compared to 98% precision @ 47% recall), but that only the DeakinCams system detected ectotherms/invertebrates.

Ran MDv5a via Camelot and reviewed images in Timelapse. Found that “MegaDetector performed just as well as manual classification”, but “some manual processing is necessary to validate model outputs, with the level of human intervention varying by model selection”.

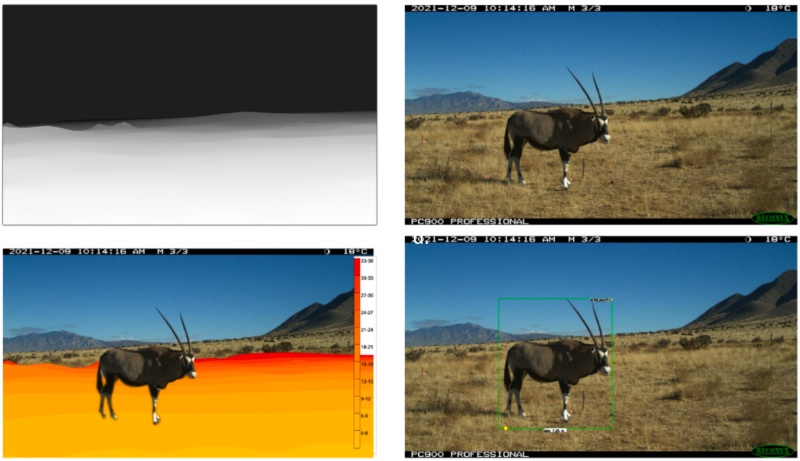

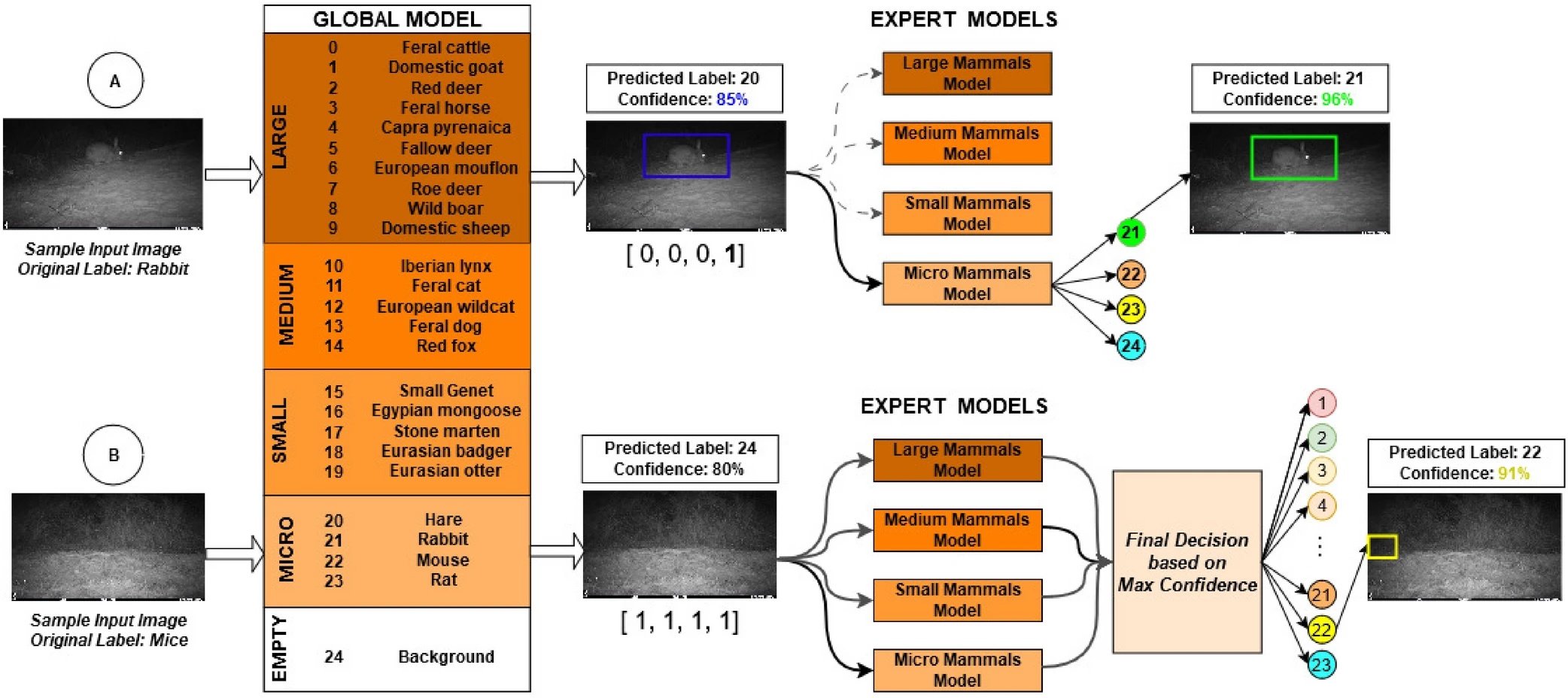

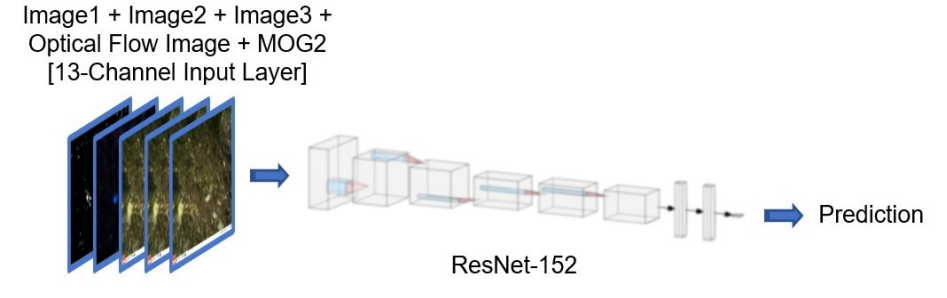

Mulero-Pázmány M, Hurtado S, Barba-González C, Antequera-Gómez ML, Díaz-Ruiz F, Real R, Navas-Delgado I, Aldana-Montes JF. Addressing significant challenges for animal detection in camera trap images: a novel deep learning-based approach. Scientific Reports. 2025 May 9;15(1):1-8.

They propose a two-model ensemble, where the first model classifies objects based on animal size (large/medium/small/micro/blank), and an “expert” model is trained for each coarse size category. Find that on a dataset of ~600k images from Spain, a two-stage approach raises accuracy from 92% to 96% on a test set of non-training cameras.
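The two-stage structure amounts to routing: a coarse size classifier picks which expert handles the image. Below is a minimal sketch with the models as plain callables; the stand-in lambdas and species labels in the usage example are hypothetical placeholders, not the paper's models.

```python
def two_stage_predict(image, size_model, experts):
    """Route an image through a coarse size classifier, then a size-specific expert.

    size_model: callable image -> "large", "medium", "small", "micro", or "blank"
    experts: dict mapping each non-blank size class -> callable image -> label
    """
    size = size_model(image)
    if size == "blank":
        return "blank"  # no second stage for empty images
    return experts[size](image)


# Hypothetical stand-ins: the "image" is a dict that already carries its size.
size_model = lambda img: img["size"]
experts = {
    "large": lambda img: "red deer",
    "medium": lambda img: "red fox",
    "small": lambda img: "rabbit",
    "micro": lambda img: "mouse",
}
print(two_stage_predict({"size": "small"}, size_model, experts))  # rabbit
```

The appeal of this design is that each expert only has to separate animals of similar size, which is presumably easier than one model handling everything from deer to mice.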

They used MDv5 for initial labeling, and after comparing other approaches, built all of their models as fine-tuned MDv5 instances. Specifically, they froze the first 10 layers of MDv5 and trained for 300 epochs.

Tjaden-McClement K, Gharajehdaghipour T, Shores C, White S, Steenweg R, Bourbonnais M, Konanz Z, Burton AC. Mixed evidence for disturbance-mediated apparent competition for declining caribou in western British Columbia, Canada. The Journal of Wildlife Management. 2025:e70040.

Evaluated the relationship between post-fire vegetation recovery and caribou predation risk; found mixed support for disturbance-mediated apparent competition.

179 cameras deployed in 2020-2023. Used MDv5 to streamline blank elimination; species classification was manual.

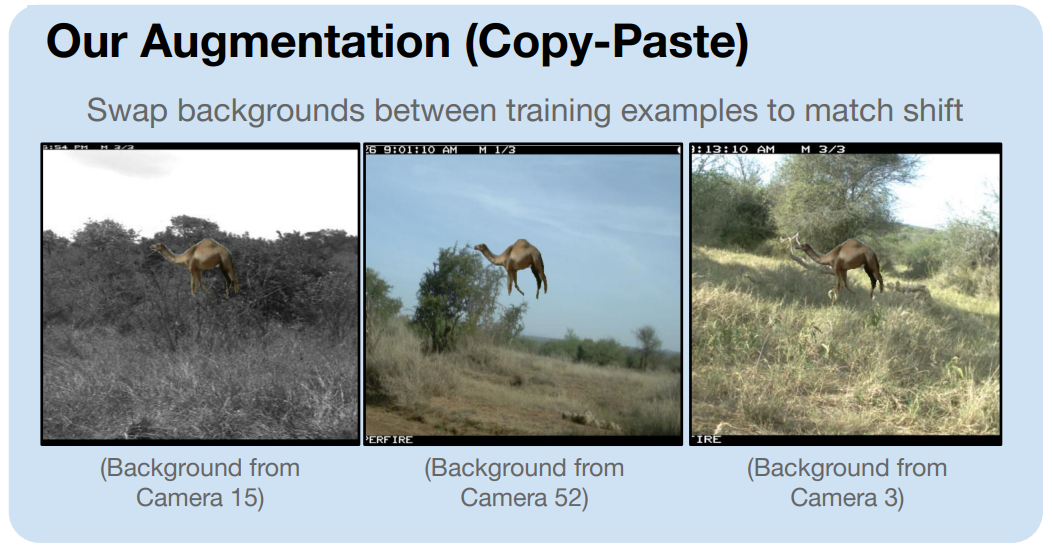

Rechter J. Improving the domain adaptation of camera trap image classifiers using inserted animal cutouts. Doctoral dissertation, University of Guelph, 2025.

Look at copy-paste augmentation for species classifier training. Work with two private datasets from Canada, with ~650k and 3.3M images. Restricted analysis to a small set of classes with large support in training. Used MegaDetector (MDv5a) to remove blanks, then to generate boxes for cutouts. Pixels within MD boxes were segmented with SAM prior to pasting. Trained DenseNet201 models.
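The core of copy-paste augmentation is compositing masked animal pixels onto a new background. Here is a minimal sketch assuming the mask has already been produced (in this thesis, by SAM inside MD boxes); images as nested lists and the `paste_cutout` helper are hypothetical simplifications, not the author's code.

```python
def paste_cutout(background, cutout, mask, top, left):
    """Paste a segmented animal cutout onto a background image.

    background: 2-D list (H x W) of pixel values; a modified copy is returned
    cutout, mask: same-sized 2-D lists; mask[y][x] is True where the animal is
    top, left: where the cutout's upper-left corner lands in the background
    """
    out = [row[:] for row in background]  # leave the original background untouched
    for dy, (c_row, m_row) in enumerate(zip(cutout, mask)):
        for dx, (pixel, keep) in enumerate(zip(c_row, m_row)):
            y, x = top + dy, left + dx
            # Copy only animal pixels, and clip anything outside the background.
            if keep and 0 <= y < len(out) and 0 <= x < len(out[0]):
                out[y][x] = pixel
    return out


background = [[0] * 3 for _ in range(3)]
cutout = [[5, 5], [5, 5]]
mask = [[True, False], [True, True]]
print(paste_cutout(background, cutout, mask, top=1, left=1))
# [[0, 0, 0], [0, 5, 0], [0, 5, 5]]
```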

Without copy-paste augmentation, replicated previous results showing substantial accuracy falloff at new cameras; they report ~30 percentage points lower accuracy and ~40 percentage points lower F1 for trans-tested models (i.e., models evaluated on cameras not seen in training). Found that copy-paste augmentation did not significantly improve cis-tested models. Found that synthetic images (i.e., copy-paste augmentation) improved performance in trans-tested models, and that class balancing hurt rather than helped performance.

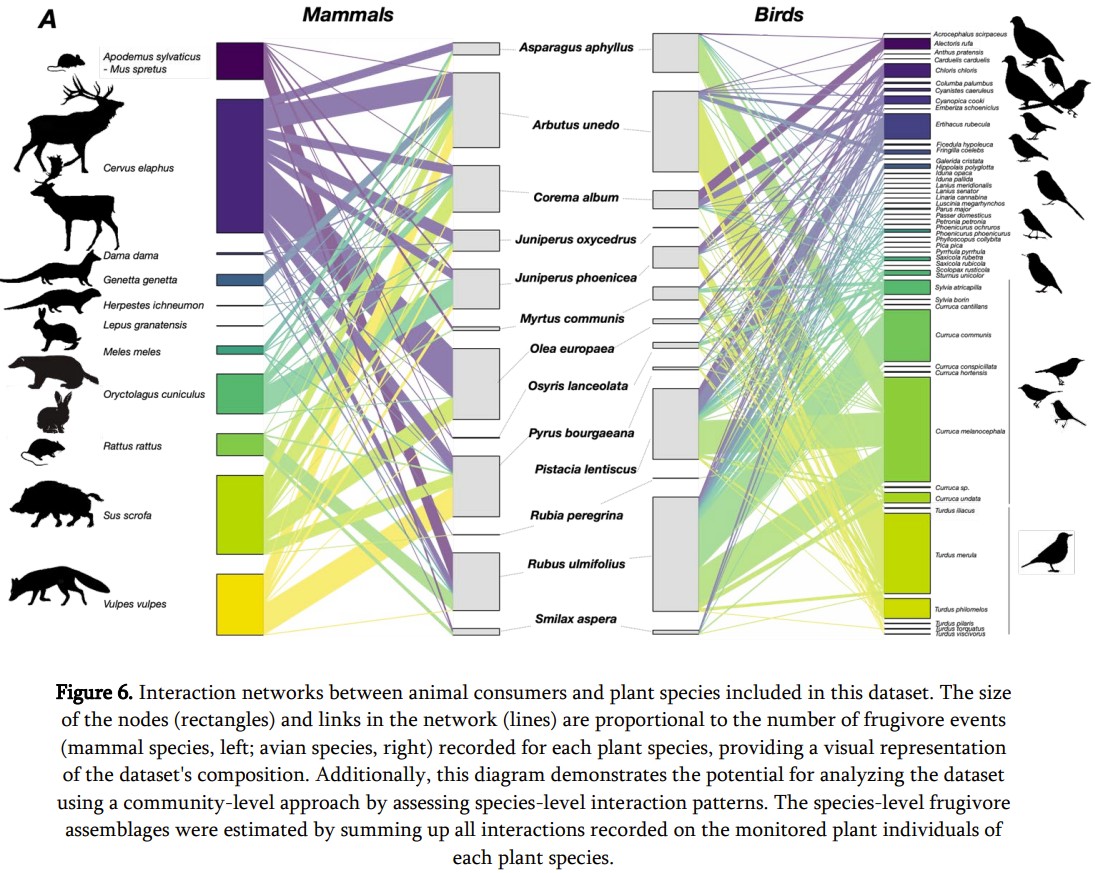

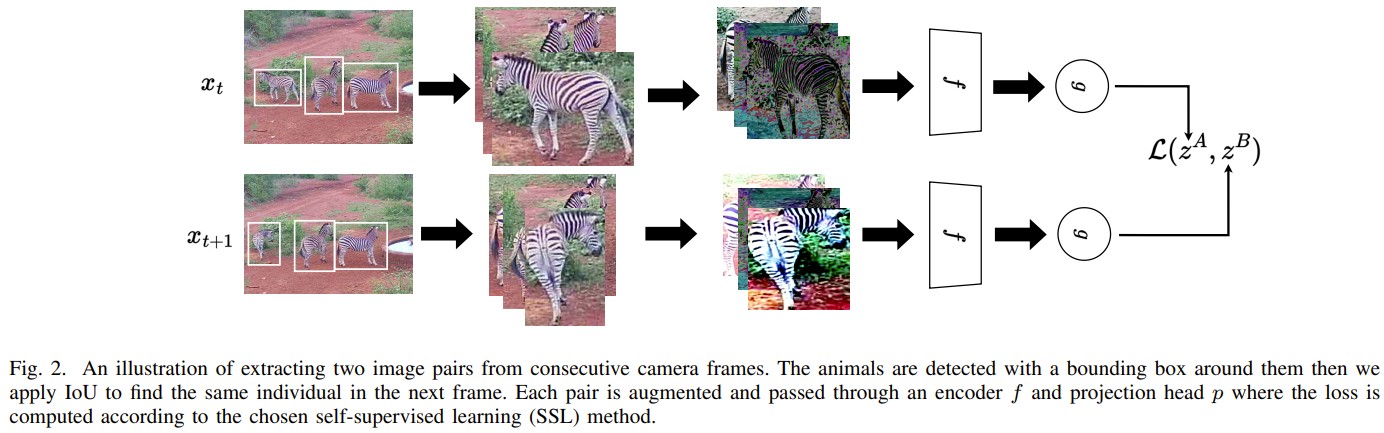

Villalva P, Jordano P. A Machine Learning Application to Camera-Traps: Robust Species Interactions Datasets for Analysis of Mutualistic Networks. Ecology and Evolution. 2026 Jan 21;16(1):e72584.

Introduce a computer-vision-based workflow for assessing species-species interactions, including animal-animal and animal-plant interactions (specifically fruit consumption).

They work with camera trap video; they use MD (version not specified, but the text implies MDv4) for blank video elimination, and review videos in Timelapse for both species annotation and behavioral annotation (particularly fruit handling/consumption). Found that their workflow had an overall accuracy of 0.92 in terms of the presence of behaviors of interest, but also that MD missed a large number of videos with behaviors of interest. However, this analysis was done only at a confidence threshold of 0.8, which is very high for MDv5, and very high even for MDv4 on difficult data with occlusion by leaves; i.e., they were favoring precision over recall. They discuss the impact of the confidence threshold later in the paper, so the decision to favor precision is likely a deliberate design choice.

Code is here.
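To see why the threshold matters so much for video, consider a simple aggregation rule in which a video is kept if any frame’s max detection confidence meets the threshold. That "any frame" rule is my assumption for illustration (the paper’s exact aggregation may differ), and the per-video confidences below are made up.

```python
def video_is_nonblank(frame_confidences, threshold=0.8):
    """Keep a video if any frame's max detection confidence meets the threshold."""
    return any(c >= threshold for c in frame_confidences)


def filter_videos(videos, threshold=0.8):
    """videos: dict mapping video name -> list of per-frame max confidences."""
    return [name for name, confs in videos.items() if video_is_nonblank(confs, threshold)]


# Made-up confidences: v2 might be a leaf-occluded animal that never scores above 0.6.
videos = {"v1": [0.1, 0.85], "v2": [0.5, 0.6], "v3": []}
print(filter_videos(videos, threshold=0.8))  # ['v1']
print(filter_videos(videos, threshold=0.2))  # ['v1', 'v2']
```

At 0.8, a partially occluded animal that never produces a high-confidence frame drops out entirely, which is consistent with the missed videos described above.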

Okuley OS, Aiello CM, Glad W, Perkins K, Ianniello R, Darby N, Epps CW. Improving AI performance in wildlife monitoring through species and environment-specific training: A case study on desert Bighorn sheep. Ecological Informatics. 2025 May 2:103179.

Compare species-specific (bespoke) and general (CameraTrapDetectoR) models for identifying bighorn sheep. Found that the bespoke model, despite being trained on less data, substantially outperforms the more general model; i.e., in this case, training data specificity beats training data volume.